Abstract

Attractor networks are an influential theory for memory storage in brain systems. This theory has recently been challenged by the observation of strong temporal variability in neuronal recordings during memory tasks. In this work, we study a sparsely connected attractor network where memories are learned according to a Hebbian synaptic plasticity rule. After recapitulating known results for the continuous, sparsely connected Hopfield model, we investigate a model in which new memories are learned continuously and old memories are forgotten, using an online synaptic plasticity rule. We show that for a forgetting timescale that optimizes storage capacity, the qualitative features of the network’s memory retrieval dynamics are age dependent: the most recent memories are retrieved as fixed-point attractors while older memories are retrieved as chaotic attractors characterized by strong heterogeneity and temporal fluctuations. Therefore, fixed-point and chaotic attractors coexist in the network phase space. The network presents a continuum of statistically distinguishable memory states, where chaotic fluctuations appear abruptly above a critical age and then increase gradually until the memory disappears. We develop a dynamical mean field theory to analyze the age-dependent dynamics and compare the theory with simulations of large networks. We compute the optimal forgetting timescale for which the number of stored memories is maximized. We find that the maximum age at which memories can be retrieved is given by an instability at which old memories destabilize and the network converges instead to a more recent one. Our numerical simulations show that a high degree of sparsity is necessary for the dynamical mean field theory to accurately predict the network capacity.
To test the robustness and biological plausibility of our results, we study numerically the dynamics of a network with learning rules and transfer function inferred from in vivo data in the online learning scenario. We find that all aspects of the network’s dynamics characterized analytically in the simpler model also hold in this model. These results are highly robust to noise. Finally, our theory provides specific predictions for delay response tasks with aging memoranda. In particular, it predicts a higher degree of temporal fluctuations in retrieval states associated with older memories, and it also predicts that these fluctuations should be faster for older memories. Overall, our theory of attractor networks that continuously learn new information at the price of forgetting old memories can account for the observed diversity of retrieval states in the cortex, and in particular, the strong temporal fluctuations of cortical activity.

- Received 3 December 2021

- Revised 31 August 2022

- Accepted 6 December 2022

DOI:https://doi.org/10.1103/PhysRevX.13.011009

Published by the American Physical Society under the terms of the Creative Commons Attribution 4.0 International license. Further distribution of this work must maintain attribution to the author(s) and the published article’s title, journal citation, and DOI.



Focus


Memories Become Chaotic before They Are Forgotten

Published 27 January 2023

A model for information storage in the brain reveals how memories decay with age.


Popular Summary

Memories in the brain are learned and forgotten through experience. The popular theory of “attractor networks” proposes that a memory is stored as a specific pattern of brain activity (an attractor state) that persists over time. However, aspects of the theory are at odds with experiments: When memories are retrieved in the classical theory, neuronal activity is constant, whereas in some experiments the activity is highly irregular. Here, we show that there are roles for both constant and irregular activity in a network where new memories are learned continuously and old memories are forgotten.

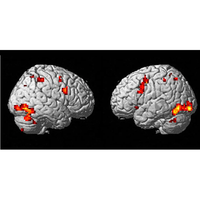

In such a network, we find that the most recent memories are retrieved as stationary attractors, whereas older memories are retrieved as chaotic attractors, characterized by strong heterogeneity and temporal fluctuations. The combination of strong neural fluctuations and stable encoding of memories during chaotic retrieval states qualitatively resembles the neural dynamics observed experimentally.

We develop a theory to analyze these age-dependent network dynamics. Our theory predicts that the network presents a continuum of statistically distinguishable memory states, where chaotic fluctuations appear abruptly above a critical age and then increase gradually until the memory disappears. This is confirmed using simulations of large networks.

Our theory qualitatively holds in more biologically realistic networks, and it provides testable predictions for memory experiments with aging memoranda. In particular, it predicts that temporal fluctuations in retrieval states associated with older memories are both stronger and faster.
