Abstract

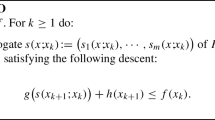

This manuscript presents a derivative-free quadratic regularization method for unconstrained minimization of a smooth function with Lipschitz continuous gradient. At each iteration, trial points are computed by minimizing a quadratic regularization of a local model of the objective function. The models are based on forward finite-difference gradient approximations. By using a suitable acceptance condition for the trial points, the accuracy of the gradient approximations is dynamically adjusted as a function of the regularization parameter used to control the stepsizes. Worst-case evaluation complexity bounds are established for the new method. Specifically, for nonconvex problems, it is shown that the proposed method needs at most \({\mathcal {O}}\left( n\epsilon ^{-2}\right)\) function evaluations to generate an \(\epsilon\)-approximate stationary point, where \(n\) is the problem dimension. For convex problems, an evaluation complexity bound of \({\mathcal {O}}\left( n\epsilon ^{-1}\right)\) is obtained, which is reduced to \({\mathcal {O}}\left( n\log (\epsilon ^{-1})\right)\) under strong convexity. Numerical results illustrating the performance of the proposed method are also reported.
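To make the ingredients named in the abstract concrete, the following Python sketch combines a forward finite-difference gradient with a quadratically regularized step. It is only an illustration under stated assumptions, not the algorithm analyzed in the article: the function names, the rule tying the difference step `h` to the regularization parameter `sigma`, the sufficient-decrease constant `1/4`, and the doubling factor are all hypothetical choices made for this example.

```python
import numpy as np


def forward_difference_gradient(f, x, h):
    """Forward finite-difference gradient of f at x (costs n + 1 evaluations)."""
    fx = f(x)
    g = np.empty(x.size)
    for i in range(x.size):
        step = np.zeros(x.size)
        step[i] = h
        g[i] = (f(x + step) - fx) / h
    return fx, g


def quadratic_regularization_sketch(f, x0, eps=1e-4, sigma0=1.0, max_iter=1000):
    """Illustrative derivative-free loop; every constant below is an assumption."""
    x = np.asarray(x0, dtype=float)
    n = x.size
    sigma = sigma0
    for _ in range(max_iter):
        # Assumed rule: a larger sigma (shorter steps) calls for a smaller h,
        # i.e. a more accurate gradient approximation.
        h = eps / (np.sqrt(n) * sigma)
        fx, g = forward_difference_gradient(f, x, h)
        if np.linalg.norm(g) <= eps:
            break
        # Minimizer of the regularized model fx + g.s + (sigma / 2) * ||s||^2.
        trial = x - g / sigma
        # Assumed acceptance test: sufficient decrease relative to ||g||^2 / sigma.
        if fx - f(trial) >= np.dot(g, g) / (4.0 * sigma):
            x = trial
        else:
            sigma *= 2.0  # reject the trial point and regularize more strongly
    return x
```

For instance, on `f = lambda x: float(np.sum((x - 1.0) ** 2))` with `x0 = np.zeros(3)`, the first trial point is rejected, `sigma` is doubled once, and the second trial point lands close to the minimizer at the vector of ones.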

Data availability

Data sharing not applicable to this article as no datasets were generated or analysed during the current study.

Notes

In the context of nonconvex problems, evaluation complexity bounds of \({\mathcal {O}}\left( n^{2}\epsilon ^{-2}\right)\) were obtained by Vicente [23] and by Konecny and Richtárik [10] for direct search methods, and also by Garmanjani, Júdice and Vicente [7] for a derivative-free trust-region method.

Evaluation complexity bounds of \({\mathcal {O}}\left( n\epsilon ^{-1}\right)\) and \({\mathcal {O}}\left( n\log (\epsilon ^{-1})\right)\) (in the convex and strongly convex cases, respectively) were established in [4, 18] for randomized derivative-free optimization (DFO) methods. They constitute upper bounds for the number of function evaluations that the corresponding methods need to find \(\bar{x}\) such that \(E[f(\bar{x})]-f^{*}\le \epsilon\), where \(f^{*}\) is the optimal value of \(f(\,\cdot \,)\) and \(E[X]\) denotes the expected value of a random variable \(X\). For deterministic direct search methods, bounds of \({\mathcal {O}}\left( n^{2}\epsilon ^{-1}\right)\) and \({\mathcal {O}}\left( n^{2}\log (\epsilon ^{-1})\right)\) were established in [6, 10].

Assumptions A1 and A2 are the usual assumptions for the analysis of first-order and derivative-free methods (see, e.g., Section 1.2.3 of [16]). In particular, any twice continuously differentiable function with uniformly bounded Hessian satisfies A1.
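The last claim follows from a standard argument, sketched here under the assumption that A1 is the Lipschitz-gradient condition mentioned in the abstract (the exact statement of A1 is not reproduced in this excerpt). The symbol \(M\) is introduced only for this illustration and denotes a uniform bound on \(\Vert \nabla ^{2}f(z)\Vert\). For any \(x\) and \(y\),

\[
\nabla f(y)-\nabla f(x)=\int _{0}^{1}\nabla ^{2}f\left( x+t(y-x)\right) (y-x)\,dt ,
\]

and therefore

\[
\Vert \nabla f(y)-\nabla f(x)\Vert \le \int _{0}^{1}\Vert \nabla ^{2}f\left( x+t(y-x)\right) \Vert \,\Vert y-x\Vert \,dt\le M\Vert y-x\Vert ,
\]

so the gradient is Lipschitz continuous with constant \(L=M\).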

In fact, for the theoretical guarantees, any other factor greater than one can be used.

If assumptions A1 and A5 hold, then \(\mu \le L\). Since \(L<C_{f}\), under these two assumptions it follows that \(\frac{\mu }{C_{f}}\in (0,1)\).

The data profiles were generated using the code data_profile.m, freely available at https://www.mcs.anl.gov/~more/dfo/.
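For readers unfamiliar with data profiles, the sketch below computes them in Python following the definition of Moré and Wild [14]: for each solver, the fraction of problems solved within a budget of \(\alpha (n_{p}+1)\) function evaluations, where \(n_{p}\) is the number of variables of problem \(p\). It is not a port of data_profile.m; the function name, argument names, and array layout are assumptions made for this example.

```python
import numpy as np


def data_profile(evals_to_solve, dims, alphas):
    """Fraction of problems each solver solves within alpha * (n_p + 1) evaluations.

    evals_to_solve[p, s]: evaluations solver s needed to pass the convergence
        test on problem p (np.inf if the test was never passed).
    dims[p]: number of variables n_p of problem p.
    alphas[k]: budgets, in units of (n_p + 1) function evaluations.
    Returns an array d with d[k, s] = profile of solver s at budget alphas[k].
    """
    t = np.asarray(evals_to_solve, dtype=float)
    scale = np.asarray(dims, dtype=float)[:, None] + 1.0
    ratio = t / scale  # evaluations in units of (n_p + 1)
    alphas = np.asarray(alphas, dtype=float)
    # Compare every budget with every (problem, solver) ratio, then average
    # over the problem axis.
    return (ratio[None, :, :] <= alphas[:, None, None]).mean(axis=1)
```

A solver that never passes the convergence test on a problem is recorded with `np.inf`, so that problem never counts as solved at any finite budget.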

Namely, the datasets Iris, Breast Cancer Wisconsin, Wine, Sonar, Phishing, Ionosphere, Diabetes, Musk, Seeds, and Bank Note Authentication, freely available at http://archive.ics.uci.edu/ml/index.php.

References

[1] Andrei, N.: An unconstrained optimization test functions collection. Adv. Model. Optim. 10(1), 147–161 (2008)

[2] Audet, C., Hare, W.: Derivative-free and blackbox optimization. Springer, Berlin (2017)

[3] Audet, C., Orban, D.: Finding optimal algorithmic parameters using derivative-free optimization. SIAM J. Optim. 17(3), 642–664 (2006)

[4] Bergou, E.H., Gorbunov, E., Richtárik, P.: Stochastic three points method for unconstrained smooth minimization. SIAM J. Optim. 30(4), 2726–2749 (2020)

[5] Conn, A.R., Scheinberg, K., Vicente, L.N.: Introduction to Derivative-Free Optimization. SIAM (2009)

[6] Dodangeh, M., Vicente, L.N.: Worst-case complexity of direct search under convexity. Math. Program. 155, 307–332 (2016)

[7] Garmanjani, R., Júdice, D., Vicente, L.N.: Trust-region methods without using derivatives: worst case complexity and the nonsmooth case. SIAM J. Optim. 26(4), 1987–2011 (2016)

[8] Grapiglia, G.N.: Quadratic regularization methods with finite-difference gradient approximations. Comput. Optim. Appl. (2022). https://doi.org/10.1007/s10589-022-00373-z

[9] Karbasian, H.R., Vermeire, B.C.: Gradient-free aerodynamic shape optimization using large eddy simulation. Comput. Fluids 232, 105185 (2022)

[10] Konecny, J., Richtárik, P.: Simple complexity analysis of simplified direct search. arXiv:1410.0390 [math.OC] (2014)

[11] Larson, J., Menickelly, M., Wild, S.M.: Derivative-free optimization. Acta Numer. 28, 287–404 (2019)

[12] Lojasiewicz, S.: A topological property of real analytic subsets (in French), Coll. du CNRS, Les équations aux dérivées partielles, pp. 87–89 (1963)

[13] Marsden, A.L., Feinstein, J.A., Taylor, C.A.: A computational framework for derivative-free optimization of cardiovascular geometries. Comput. Methods Appl. Mech. Eng. 197, 1890–1905 (2008)

[14] Moré, J.J., Wild, S.M.: Benchmarking derivative-free optimization algorithms. SIAM J. Optim. 20(1), 172–191 (2009)

[15] Nesterov, Yu.: How to make gradients small. Optima 88, 10–11 (2012)

[16] Nesterov, Yu.: Lectures on Convex Optimization, 2nd edn. Springer, Berlin (2018)

[17] Nesterov, Yu., Polyak, B.T.: Cubic regularization of Newton method and its global performance. Math. Program. 108, 177–205 (2006)

[18] Nesterov, Yu., Spokoiny, V.: Random gradient-free minimization of convex functions. Found. Comput. Math. 17, 527–566 (2017)

[19] Polyak, B.T.: Gradient methods for minimizing functionals (in Russian). Zh. Vychisl. Mat. Mat. Fiz., 643–653 (1963)

[20] Russ, J.B., Li, R.L., Herschman, A.R., Haim, W., Vedula, V., Kysar, J.W., Kalfa, D.: Design optimization of a cardiovascular stent with application to a balloon expandable prosthetic heart valve. Mater. Des. 209 (2021)

[21] Sophy, O., Cartis, C., Kriest, I., Tett, S.F.B., Khatiwala, S.: A derivative-free optimisation method for global ocean biogeochemical models. Geosci. Model Dev. 15(9), 3537–3554 (2022)

[22] Sun, Y., Sahinidis, N.V., Sundaram, A., Cheon, M.-S.: Derivative-free optimization for chemical product design. Curr. Opin. Chem. Eng. 27, 98–106 (2020)

[23] Vicente, L.N.: Worst case complexity of direct search. EURO J. Comput. Optim. 1, 143–153 (2013)

Acknowledgements

The author is very grateful to the two anonymous referees, whose comments helped to improve the manuscript.

Additional information

G. N. Grapiglia was partially supported by CNPq (Grant 312777/2020-5).

About this article

Cite this article

Grapiglia, G.N. Worst-case evaluation complexity of a derivative-free quadratic regularization method. Optim Lett 18, 195–213 (2024). https://doi.org/10.1007/s11590-023-01984-z


DOI: https://doi.org/10.1007/s11590-023-01984-z