Abstract

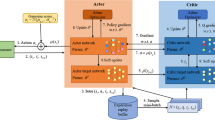

Mapless navigation for mobile Unmanned Ground Vehicles (UGVs) using Deep Reinforcement Learning (DRL) has attracted rapidly growing attention in the robotics and related research communities. Avoiding collisions with dynamic obstacles, such as pedestrians and other vehicles, in unstructured environments is one of the key challenges for mapless navigation. This paper proposes a DRL algorithm based on heuristic correction learning for autonomous navigation of a UGV without a map. We use 24-dimensional lidar readings, merged with the target position and the speed of the UGV, as the input of the reinforcement learning agent; the agent outputs the actions of the UGV. The proposed algorithm has been trained and evaluated in both static and dynamic environments. The experimental results show that, while ensuring safety, it reaches the target in less time and over shorter distances than the other algorithms. In particular, the success rate of the proposed algorithm is 2.05 times higher than that of the second most effective algorithm, and the trajectory efficiency is improved by \(24\%\) in the dynamic environment. Finally, the proposed algorithm is deployed on a real robot in a real-world environment to validate and evaluate its performance. The experimental results show that it can be applied directly and robustly to real robots.
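The agent input described above (lidar readings merged with target and speed information) can be illustrated with a minimal sketch. Only the 24 lidar dimensions come from the abstract; the polar encoding of the target (distance, bearing), the two-component velocity (linear, angular), the 3.5 m normalisation range, and the function name `build_observation` are all assumptions made for illustration, not the authors' implementation.

```python
import math
from typing import List, Sequence, Tuple

LIDAR_BEAMS = 24      # from the abstract: 24-dimensional lidar input
MAX_RANGE_M = 3.5     # ASSUMED sensor range, used only for normalisation


def build_observation(
    ranges: Sequence[float],
    robot_pose: Tuple[float, float, float],  # ASSUMED (x, y, yaw) in the world frame
    target_xy: Tuple[float, float],          # ASSUMED target position in the world frame
    velocity: Tuple[float, float],           # ASSUMED (linear m/s, angular rad/s)
) -> List[float]:
    """Merge lidar, target and speed information into one flat input vector."""
    if len(ranges) != LIDAR_BEAMS:
        raise ValueError(f"expected {LIDAR_BEAMS} lidar readings, got {len(ranges)}")

    # Normalise each beam to [0, 1]; non-finite readings are treated as max range.
    scan = []
    for r in ranges:
        if math.isnan(r) or math.isinf(r):
            r = MAX_RANGE_M
        scan.append(min(max(r, 0.0), MAX_RANGE_M) / MAX_RANGE_M)

    # Express the target relative to the robot as (distance, bearing).
    x, y, yaw = robot_pose
    dx, dy = target_xy[0] - x, target_xy[1] - y
    distance = math.hypot(dx, dy)
    bearing = math.atan2(dy, dx) - yaw
    bearing = math.atan2(math.sin(bearing), math.cos(bearing))  # wrap to [-pi, pi]

    # 24 lidar values + 2 target values + 2 velocity values.
    return scan + [distance, bearing, velocity[0], velocity[1]]


obs = build_observation([3.5] * 24, (0.0, 0.0, 0.0), (1.0, 1.0), (0.2, 0.0))
```

Under these assumptions the vector has 28 entries; the actual dimensionality of the target and speed components is not stated in the abstract.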

Code Availability

The simulation data will be available upon request.

Funding

This work was supported in part by the National Natural Science Foundation of China under Grant 61703138.

Author information

Contributions

All authors contributed to the study conception and design. Changyun Wei and Yongping Ouyang performed material preparation, data collection and analysis. Changyun Wei and Yongping Ouyang wrote the first draft of the manuscript. Yajun Li and Ze Ji revised the manuscript based on previous versions. All authors read and approved the final manuscript.

Ethics declarations

Ethical approval

Not applicable. Our manuscript does not report results of studies involving humans or animals.

Consent to participate

Not applicable. Our manuscript does not report results of studies involving humans or animals.

Consent for publication

All authors have approved and consented to publish the manuscript.

Conflicts of interest

The authors have no relevant financial or nonfinancial interests to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wei, C., Li, Y., Ouyang, Y. et al. Deep Reinforcement Learning with Heuristic Corrections for UGV Navigation. J Intell Robot Syst 109, 18 (2023). https://doi.org/10.1007/s10846-023-01950-y
