Abstract

In this paper we consider filtering and smoothing of partially observed chaotic dynamical systems that are discretely observed, with an additive Gaussian noise in the observation. These models are found in a wide variety of real applications and include the Lorenz 96’ model. In the context of a fixed observation interval T, observation time step h and Gaussian observation variance \(\sigma _Z^2\), we show under assumptions that the filter and smoother are well approximated by a Gaussian with high probability when h and \(\sigma ^2_Z h\) are sufficiently small. Based on this result we show that the maximum a posteriori (MAP) estimators are asymptotically optimal in mean square error as \(\sigma ^2_Z h\) tends to 0. Given these results, we provide a batch algorithm for the smoother and filter, based on Newton’s method, to obtain the MAP. In particular, we show that if the initial point is close enough to the MAP, then Newton’s method converges to it at a fast rate. We also provide a method for computing such an initial point. These results contribute to the theoretical understanding of widely used 4D-Var data assimilation method. Our approach is illustrated numerically on the Lorenz 96’ model with state vector up to 1 million dimensions, with code running in the order of minutes. To our knowledge the results in this paper are the first of their type for this class of models.

Similar content being viewed by others

1 Introduction

Filtering and smoothing are among the most important problems for several applications, featuring contributions from mathematics, statistics, engineering and many more fields; see, for instance, [13] and the references therein. The basic notion of such models is the idea of an unobserved stochastic process that is observed indirectly by data. The most typical model is perhaps where the unobserved stochastic process is a Markov chain or a diffusion process. In this paper, we are mainly concerned with the scenario when the unobserved dynamics are deterministic and, moreover, chaotic. The only randomness in the unobserved system is uncertainty in the initial condition, and it is this quantity that we wish to infer, on the basis of discretely and sequentially observed data; we explain the difference between filtering and smoothing in this context below. This class of problems has slowly become more important in the literature, particularly in the area of data assimilation [27]. The model itself has a substantial number of practical applications, including weather prediction, oceanography and oil reservoir simulation, see, for instance, [24].

In this paper we consider smoothing and filtering for partially observed deterministic dynamical systems of the general form

where \(\varvec{u}: \mathbb {R}^+\rightarrow \mathbb {R}^d\) is a dynamical system in \(\mathbb {R}^d\) for some \(d\in \mathbb {Z}_+\), \(\varvec{A}\) is linear operator in \(\mathbb {R}^d\) (i.e. \(\varvec{A}\) is a \(d\times d\) matrix), \(\varvec{f}\in \mathbb {R}^d\) is a constant vector, and \(\varvec{B}(\varvec{u},\varvec{u})\) is a bilinear form corresponding to the nonlinearity (i.e. \(\varvec{B}\) is a \(d\times d\times d\) array). We denote the solution of Eq. (1.1) with initial condition \(\varvec{u}(0){\mathrel {\mathop :}=}\varvec{v}\) for \(t\ge 0\) by \(\varvec{v}(t)\). The derivatives of the solution \(\varvec{v}(t)\) at time \(t=0\) will be denoted by

in particular, \(\varvec{D}^0\varvec{v}=\varvec{v}\) , \(\varvec{D}\varvec{v}{\mathrel {\mathop :}=}\varvec{D}^1\varvec{v}=-\varvec{A} \varvec{v}-\varvec{B}(\varvec{v},\varvec{v})+\varvec{f}\) (the right-hand side of (1.1)), and \(\varvec{D}^2\varvec{v}=-\varvec{A} \varvec{D}^1 \varvec{v}-\varvec{B}(\varvec{D}^1 \varvec{v},\varvec{v})-\varvec{B}(\varvec{v},\varvec{D}^1 \varvec{v})\).

In order to ensure the existence of a solution to Eq. (1.1) for every \(t\ge 0\), we assume that there are constants \(R>0\) and \(\delta >0\) such that

We call this the trapping ball assumption. Let \(\mathcal {B}_R{\mathrel {\mathop :}=}\{\varvec{v}\in \mathbb {R}^d: \Vert \varvec{v}\Vert \le R\}\) be the ball of radius R. Using the fact that \(\left<\frac{d}{\hbox {d}t} \varvec{v}(t),\varvec{v}(t)\right>= \frac{1}{2}\frac{d }{d t}\Vert \varvec{v}(t)\Vert ^2\), one can show that the solution to (1.1) exists for \(t\ge 0\) for every \(\varvec{v}\in \mathcal {B}_R\) and satisfies that \(\varvec{v}(t)\in \mathcal {B}_R\) for \(t\ge 0\).

Equation (1.1) was shown in Sanz-Alonso and Stuart [42] and Law et al. [27] to be applicable to three chaotic dynamical systems: the Lorenz 63’ model, the Lorenz 96’ model and the Navier–Stokes equation on the torus; such models have many applications. We note that instead of the trapping ball assumption, these papers have considered different assumptions on \(\varvec{A}\) and \(\varvec{B}(\varvec{v},\varvec{v})\). As we shall explain in Sect. 1.1, their assumptions imply (1.3); thus, the trapping ball assumption is more general.

We assume that the system is observed at time points \(t_j=j h\) for \(j=0,1,\ldots \), with observations

where \(\varvec{H}: \mathbb {R}^d \rightarrow \mathbb {R}^{d_o}\) is a linear operator and \((\varvec{Z}_j)_{j\ge 0}\) are i.i.d. centred random vectors taking values in \(\mathbb {R}^{d_o}\) describing the noise. We assume that these vectors have distribution \(\eta \) that is Gaussian with i.i.d. components of variance \(\sigma _Z^2\).Footnote 1

The contributions of this article are as follows. In the context of a fixed observation interval T, we show under assumptions that the filter and smoother are well approximated by a Gaussian law when \(\sigma ^2_Z h\) is sufficiently small. Our next result, using the ideas of the first one, shows that the maximum a posteriori (MAP) estimators (of the filter and smoother) are asymptotically optimal in mean square error when \(\sigma ^2_Z h\) tends to 0. The main practical implication of these mathematical results is that we can then provide a batch algorithm for the smoother and filter, based on Newton’s method, to obtain the MAP. In particular, we prove that if the initial point is close enough to the MAP, then Newton’s method converges to it at a fast rate. We also provide a method for computing such an initial point and prove error bounds for it. Our approach is illustrated numerically on the Lorenz 96’ model with state vector up to 1 million dimensions. We believe that the method of this paper has a wide range of potential applications in meteorology, but we only include one example due to space considerations.

We note that in this paper, we consider finite-dimensional models. There is a substantial interest in the statistics literature in recent years in nonparametric inference for infinite-dimensional PDE models, see [15] for an overview and references, and Giné and [21] for a comprehensive monograph on the mathematical foundations of infinite-dimensional statistical models. This approach can result in MCMC algorithms that are robust with respect to the refinement of the discretisation level, see, for example, [11, 12, 14, 39, 50, 52]. There are also other randomization and optimization based methods that have been recently proposed in the literature, see, for example, [2, 51].

A key property of these methods is that the prior is defined on the function space, and the discretisations automatically define corresponding prior distributions with desirable statistical properties in a principled manner. This is related to modern Tikhonov–Phillips regularisation methods widely used in applied mathematics, see [4] for a comprehensive overview. In the context of infinite-dimensional models, MAP estimators are non-trivial to define in a mathematically precise way on the infinite-dimensional function space, but several definitions of MAP estimators, various weak consistency results under the small noise limit, and posterior contraction rates have been shown in recent years, see, for example, [10, 16, 19, 23, 25, 34, 36, 49]. Some other important works on similar models and/or associated filtering/smoothing algorithms include [6, 22, 28]. These results are very interesting from a mathematical and statistical point of view; however, the intuitive meaning of some of the necessary conditions, and their algorithmic implications are difficult to grasp.

In contrast to these works, our results in this paper concern the finite-dimensional setting that is the most frequently used one in the data assimilation community. By working in finite dimensions, we are able to show consistency results and convergence rates for the MAP estimators under small observation noise/high observation frequency limits under rather weak assumptions. (In particular, in Section 4.4 of Paulin et al. [38] our key assumption on the dynamics was verified in 100 trials when \(\varvec{A}\), \(\varvec{B}\) and \(\varvec{f}\) were randomly chosen, and only the first component of the system was observed, and they were always satisfied.) Moreover, previous work in the literature has not said anything about the computational complexity of actually finding the MAP estimators, which is a non-trivial problem in nonlinear setting due to the existence of local maxima for the log-likelihood. In our paper we propose appropriate initial estimators and show that Newton’s method started from them converges to the true MAP with high probability in the small noise/high observation frequency scenario when started from this initial estimator.

It is important to mention that the MAP estimator forms the basis of the 4D-Var method introduced in Le Dimet and Talagrand [29] and Talagrand and Courtier [44] that is widely used in weather forecasting. A key methodological innovation of this method is that the gradients of the log-likelihood are computed via the adjoint equations, so that each gradient evaluation takes a similar amount of computation effort as a single run of the model. This has allowed the application of the method on large-scale models with up to \(d=10^9\) dimensions. See Dimet and Shutyaev [17] for some theoretical results, and Navon [35], Bannister [1] for an overview of some recent advances. The present paper offers rigorous statistical foundations for this method for the class of nonlinear systems defined by (1.1).

The structure of the paper is as follows. In Sect. 1.1, we state some preliminary results for systems of the type (1.1). Section 2 contains our main results: Gaussian approximations, asymptotic optimality of MAP estimators and approximation of MAP estimators via Newton’s method with precision guarantees. In Sect. 3 we apply our algorithm to the Lorenz 96’ model. Section 4 contains some preliminary results, and Sect. 5 contains the proofs of our main results. Finally, “Appendix” contains the proofs of our preliminary results based on concentration inequalities for empirical processes.

1.1 Preliminaries

Some notations and basic properties of systems of the form (1.1) are now detailed below. The one-parameter solution semigroup will be denoted by \(\varPsi _t\); thus, for a starting point \(\varvec{v}=(v_1,\ldots ,v_d)\in \mathbb {R}^d\), the solution of (1.1) will be denoted by \(\varPsi _t(\varvec{v})\), or equivalently, \(\varvec{v}(t)\). Sanz-Alonso and Stuart [42] and Law et al. [27] have assumed that the nonlinearity is energy conserving, i.e. \(\left<\varvec{B}(\varvec{v},\varvec{v}),\varvec{v}\right>=0\) for every \(\varvec{v}\in \mathbb {R}^d\). They also assume that the linear operator \(\varvec{A}\) is positive definite, i.e. there is a \(\lambda _{\varvec{A}}>0\) such that \(\left<\varvec{A}\varvec{v},\varvec{v}\right>\ge \lambda _{\varvec{A}} \left<\varvec{v},\varvec{v}\right>\) for every \(\varvec{v}\in \mathbb {R}^d\). As explained on page 50 of Law et al. [27], (1.1) together with these assumptions above implies that for every \(\varvec{v}\in \mathbb {R}^d\),

From (1.4) one can show that \(\mathcal {B}_R\) is an absorbing set for any

thus, all paths enter into this set, and they cannot escape from it once they have reached it. This in turn implies the existence of a global attractor (see, for example, [45], or Chapter 2 of Stuart and Humphries [43]). Moreover, the trapping ball assumption (1.3) holds.

For \(t\ge 0\), let \(\varvec{v}(t)\) and \(\varvec{w}(t)\) denote the solutions of (1.1) started from some points \(\varvec{v},\varvec{w}\in \mathbb {R}^d\). Based on (1.1), we have that for any two points \(\varvec{v}, \varvec{w}\in \mathcal {B}_R\), any \(t\ge 0\),

and therefore by Grönwall’s lemma, we have that for any \(t\ge 0\),

for a constant \(G{\mathrel {\mathop :}=}\Vert \varvec{A}\Vert +2\Vert \varvec{B}\Vert R\), where

For \(t\ge 0\), let \(\varPsi _{t}(\mathcal {B}_R){\mathrel {\mathop :}=}\{\varPsi _{t}(\varvec{v}): \varvec{v}\in \mathcal {B}_R\}\), then by (1.6), it follows that \(\varPsi _{t}:\mathcal {B}_R\rightarrow \varPsi _{t}(\mathcal {B}_R)\) is a one-to-one mapping, which has an inverse that we denote as \(\varPsi _{-t}: \varPsi _{t}(\mathcal {B}_R)\rightarrow \mathcal {B}_R\).

The main quantities of interest of this paper are the smoothing and filtering distributions corresponding to the conditional distribution of \(\varvec{u}(t_0)\) and \(\varvec{u}(t_k)\), respectively, given the observations \(\varvec{Y}_{0:k}{\mathrel {\mathop :}=}\left\{ \varvec{Y}_0,\ldots ,\varvec{Y}_k\right\} \). The densities of these distributions will be denoted by \(\mu ^{{\mathrm {sm}}}(\varvec{v}|\varvec{Y}_{0:k})\) and \(\mu ^{{\mathrm {fi}}}(\varvec{v}|\varvec{Y}_{0:k})\). To make our notation more concise, we define the observed part of the dynamics as

for any \(t\in \mathbb {R}\) and \(\varvec{v}\in \mathcal {B}_R\). Using these notations, the densities of the smoothing and filtering distributions can be expressed as

where \(\det \) stands for determinant, and \(Z_{k}^{\mathrm {sm}}, Z_{k}^{\mathrm {fi}}\) are normalising constants independent of \(\varvec{v}\). Since the determinant of the inverse of a matrix is the inverse of its determinant, we have the equivalent formulation

We assume a prior q on the initial condition that is absolutely continuous with respect to the Lebesgue measure, and zero outside the ball \(\mathcal {B}_R\) [where the value of R is determined by the trapping ball assumption (1.3)].

For \(k\ge 1\), we define the kth Jacobian of a function \(g:\mathbb {R}^{d_1}\rightarrow \mathbb {R}^{d_2}\) at point \(\varvec{v}\) as a \(k+1\)-dimensional array, denoted by \(\varvec{J}^k g(\varvec{v})\) or equivalently \(\varvec{J}^k_{\varvec{v}} g\) , with elements

We define the norm of this kth Jacobian as

where for a \(k+1\)-dimensional \(d_1\times \ldots \times d_{k+1}\)-sized array \(\varvec{M}\), we denote

Using (1.1) and (1.3), we have that

By induction, we can show that for any \(i\ge 2\), and any \(\varvec{v}\in \mathbb {R}^d\), we have

From this, the following bounds follow (see Section A.1 of “Appendix” for a proof).

Lemma 1.1

For any \(i\ge 0\), \(k\ge 1\), \(\varvec{v}\in \mathcal {B}_R\), we have

In some of our arguments we are going to use the multivariate Taylor expansion for vector-valued functions. Let \(g:\mathbb {R}^{d_1}\rightarrow \mathbb {R}^{d_2}\) be \(k+1\) times differentiable for some \(k\in \mathbb {N}\). Then using the one-dimensional Taylor expansion of the functions \(g_i(\varvec{a}+t\varvec{h})\) in t (where \(g_i\) denotes the ith component of g), one can show that for any \(\varvec{a}, \varvec{h}\in \mathbb {R}^{d_1}\), we have

where \(\varvec{h}^j{\mathrel {\mathop :}=}(\varvec{h},\ldots ,\varvec{h})\) denotes the j times repetition of \(\varvec{h}\) and the error term \(\varvec{R}_{k+1}(\varvec{a},\varvec{h})\) is of the form

whose norm can be bounded using the fact that \(\int _{t=0}^{1} (1-t)^k \hbox {d}t=\frac{1}{k+1}\) as

In order to be able to use such multivariate Taylor expansions in our setting, the existence and finiteness of \(\varvec{J}^k\varPsi _{t}(\varvec{v})\) can be shown rigorously in the following way. Firstly, for \(0<t<\left( C_{\varvec{J}}^{(k)}\right) ^{-1}\), one has

and using inequality (1.15), we can show that

is convergent and finite. For \(t\ge \left( C_{\varvec{J}}^{(k)}\right) ^{-1}\), we can express \(\varPsi _{t}(\varvec{v})\) as a composition \(\varPsi _{t_1}(\ldots (\varPsi _{t_m}(\varvec{v})))\) for \(t_1+\cdots +t_m=t\) and establish the existence of the partial derivatives by the chain rule.

After establishing the existence of the partial derivatives \(\varvec{J}^k \varPsi _{t}(\varvec{v})\), we are going to bound their norm in the following lemma (proven in Section A.1 of “Appendix”).

Lemma 1.2

For any \(k\ge 1\), let

Then for any \(k\ge 1\), \(T\ge 0\), we have

2 Main Results

In this section, we present our main results. We start by introducing our assumptions. In Sect. 2.1 we show that the smoother and the filter can be well approximated by Gaussian distributions when \(\sigma _Z^2 h\) is sufficiently small. This is followed by Sect. 2.2 where based on the Gaussian approximation result we show that the maximum a posteriori (MAP) estimators are asymptotically optimal in mean square error in the \(\sigma _Z^2 h\rightarrow 0\) limit. We also show that Newton’s method can be used for calculating the MAP estimators if the initial point \(\varvec{x}_0\) can be chosen sufficiently close to the true starting position \(\varvec{u}\). Finally, in Sect. 2.3 we propose estimators to use as initial point \(\varvec{x}_0\) that satisfy this criteria when \(\sigma _Z^2 h\) and h are sufficiently small.

We start with an assumption that will be used in these results.

Assumption 2.1

Let \(T>0\) be fixed, and suppose that \(T=kh\), where \(k\in \mathbb {N}\). Suppose that \(\Vert \varvec{u}\Vert <R\), and that there exist constants \(h_{\max }(\varvec{u},T)>0\) and \(c(\varvec{u},T)>0\) such that for every \(\varvec{v}\in \mathcal {B}_R\), for every \(h\le h_{\max }(\varvec{u},T)\) (or equivalently, every \(k\ge T/h_{\max }(\varvec{u},T)\)), we have

As we shall see in Proposition 2.1, this assumption follows from the following assumption on the derivatives (introduced in Paulin et al. [38]).

Assumption 2.2

Suppose that \(\Vert \varvec{u}\Vert <R\), and there is an index \(j\in \mathbb {N}\) such that the system of equations in \(\varvec{v}\) defined as

has a unique solution \(\varvec{v}:=\varvec{u}\) in \(\mathcal {B}_R\), and

where \(\left( \varvec{H}\varvec{D}^i \varvec{u}\right) _k\) refers to coordinate k of the vector \(\varvec{H}\varvec{D}^i \varvec{u}\in \mathbb {R}^{d_o}\) and \(\nabla \) denotes the gradient of the function in \(\varvec{u}\).

Proposition 2.1

Assumption 2.2 implies Assumption 2.1.

The proof is given in Section A.1 of “Appendix”. Assumption 2.2 was verified for the Lorenz 63’ and 96’ models in Paulin et al. [38] (for certain choices of the observation matrix \(\varvec{H}\)); thus, Assumption 2.1 is also valid for these models.

We denote \(\mathrm {int}(\mathcal {B}_R){\mathrel {\mathop :}=}\{\varvec{v}\in \mathbb {R}^d: \Vert \varvec{v}\Vert <R\}\) the interior of \(\mathcal {B}_R\). In most of our results, we will make the following assumption about the prior q.

Assumption 2.3

The prior distribution q is assumed to be absolutely continuous with respect to the Lebesgue measure on \(\mathbb {R}^d\), supported on \(\mathcal {B}_R\). We assume that \(v\rightarrow q(\varvec{v})\) is strictly positive and continuous on \(\mathcal {B}_R\) and that it is 3 times continuously differentiable at every interior point of \(\mathcal {B}_R\). Let

We assume that these are finite.

After some simple algebra, the smoothing distribution for an initial point \(\varvec{u}\) and the filtering distribution for the current position \(\varvec{u}(T)\) can be expressed as

where \(C_{k}^{\mathrm {sm}}\) is a normalising constant independent of \(\varvec{v}\) (but depending on \((\varvec{Z}_j)_{j\ge 0}\)).

In the following sections, we will present our main results for the smoother and the filter. First, in Sect. 2.1 we are going to state Gaussian approximation results, then in Sect. 2.2 we state various results about the MAP estimators, and in Sect. 2.3 we propose an initial estimator for \(\varvec{u}\) based on the observations \(\varvec{Y}_0,\ldots , \varvec{Y}_k\), to be used as a starting point for Newton’s method.

2.1 Gaussian Approximation

We define the matrix \(\varvec{A}_k\in \mathbb {R}^{d\times d}\) and vector \(\varvec{B}_k\in \mathbb {R}^d\) as

where \(\varvec{J}\varPhi _{t_i}\) and \(\varvec{J}^2 \varPhi _{t_i}\) denote the first and second Jacobian of \(\varPhi _{t_i}\), respectively, and \(\varvec{J}^2 \varPhi _{t_i}(\varvec{u})[\cdot ,\cdot ,\varvec{Z}_i]\) denotes the \(d\times d\) matrix with elements

If \(\varvec{A}_k\) is positive definite, then we define the centre of the Gaussian approximation of the smoother as

and define the Gaussian approximation of the smoother as

If \(\varvec{A}_k\) is not positive definite, then we define the Gaussian approximation of the smoother \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\cdot |\varvec{Y}_{0:k})\) to be the d-dimensional standard normal distribution (an arbitrary choice), and \(\varvec{u}^{\mathcal {G}}:=\varvec{0}\). If \(\varvec{A}_k\) is positive definite, and \(\varvec{u}^{\mathcal {G}}\in \mathcal {B}_R\), then we define the Gaussian approximation of the filter as

Alternatively, if \(\varvec{A}_k\) is not positive definite, or \(\varvec{u}^{\mathcal {G}}\notin \mathcal {B}_R\), then we define the Gaussian approximation of the smoother \(\mu ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\) to be the d-dimensional standard normal distribution.

In order to compare the closeness between the target distributions and their Gaussian approximation, we are going to use two types of distance between distributions. The total variation distance of two distributions \(\mu _1,\mu _2\) on \(\mathbb {R}^d\) that are absolutely continuous with respect to the Lebesgue measure is defined as

where \(\mu _1(x)\) and \(\mu _2(x)\) denote the densities of the distributions.

The Wasserstein distance (also called first Wasserstein distance) of two distributions \(\mu _1,\mu _2\) on \(\mathbb {R}^d\) (with respect to the Euclidean distance) is defined as

where \(\varGamma (\mu _1,\mu _2)\) is the set of all measures on \(\mathbb {R}^d\times \mathbb {R}^d\) with marginals \(\mu _1\) and \(\mu _2\).

The following two theorems bound the total variation and Wasserstein distances between the smoother, the filter and their Gaussian approximations. In some of our bounds, the quantity \(T+h_{\max }(\varvec{u},T)\) appears. For brevity, we denote this as

We are also going to use the constant \(C_{\Vert \varvec{A}\Vert }\) defined as

Theorem 2.1

(Gaussian approximation of the smoother) Suppose that Assumptions 2.1 and 2.3 hold for the initial point \(\varvec{u}\) and the prior q. Then there are constants \(C^{(1)}_{\mathrm {TV}}(\varvec{u},T)\), \(C^{(2)}_{\mathrm {TV}}(\varvec{u},T)\), \(C^{(1)}_{\mathrm {W}}(\varvec{u},T)\), and \(C^{(2)}_{\mathrm {W}}(\varvec{u},T)\) independent of \(\sigma _Z\), h and \(\varepsilon \) such that for any \(0<\varepsilon \le 1\), \(\sigma _Z>0\), and \(0\le h\le h_{\max }(\varvec{u},T)\) satisfying that \(\sigma _Z\sqrt{h}\le \frac{1}{2}C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), we have

Theorem 2.2

(Gaussian approximation of the filter) Suppose that Assumptions 2.1 and 2.3 hold for the initial point \(\varvec{u}\) and the prior q. Then there are constants \(D^{(1)}_{\mathrm {TV}}(\varvec{u},T)\), \(D^{(2)}_{\mathrm {TV}}(\varvec{u},T)\), \(D^{(1)}_{\mathrm {W}}(\varvec{u},T)\), and \(D^{(2)}_{\mathrm {W}}(\varvec{u},T)\) independent of \(\sigma _Z\), h and \(\varepsilon \) such that for any \(0<\varepsilon \le 1\), \(\sigma _Z>0\), and \(0\le h\le h_{\max }(\varvec{u},T)\) satisfying that \(\sigma _Z\sqrt{h}\le \frac{1}{2}D_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), we have

Note that the Gaussian approximations \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}\) and \(\mu ^{{\mathrm {fi}}}_{{\mathcal {G}}}\) as defined above are not directly computable based on the observations \(\varvec{Y}_{0:k}\), since they involve the true initial position \(\varvec{u}\) in both their mean and covariance matrix. However, we believe that with some additional straightforward calculations one could show that results similar to Theorems 2.1 and 2.2 also hold for the Laplace approximations of the smoothing and filtering distributions (i.e. when the mean and covariance of the normal approximation is replaced by the MAP and the inverse Hessian of the log-likelihood at the MAP, respectively), which are directly computable based on the observations \(\varvec{Y}_{0:k}\).

2.2 MAP Estimators

Let \(\overline{\varvec{u}}^{{\mathrm {sm}}}\) be the mean of the smoothing distribution, \(\overline{\varvec{u}}^{{\mathrm {fi}}}\) be the mean of the filtering distribution, and \(\hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}\) be the maximum a posteriori of the smoothing distribution, i.e.

In case there are multiple maxima, we choose any of them. For the filter, we will use the push-forward MAP estimator

Based on the Gaussian approximation results, we prove the following two theorems about these estimators.

Theorem 2.3

(Comparison of mean square error of MAP and posterior mean for smoother) Suppose that Assumptions 2.1 and 2.3 hold for the initial point \(\varvec{u}\) and the prior q. Then there is a constant \(S^{\mathrm {sm}}_{\max }(\varvec{u},T)>0\) independent of \(\sigma _Z\) and h such that for \(0<h<h_{\max }(\varvec{u},T)\), \(\sigma _Z\sqrt{h}\le S^{\mathrm {sm}}_{\max }(\varvec{u},T)\), we have that

where the expectations are taken with respect to the random observations, and \(\underline{C}^{\mathrm {sm}}(\varvec{u},T)\), \(\overline{C}^{\mathrm {sm}}(\varvec{u},T)\), and \(C^{\mathrm {sm}}_{\mathrm {MAP}}(\varvec{u},T)\) are finite positive constants independent of \(\sigma _Z\) and h.

Theorem 2.4

(Comparison of mean square error of MAP and posterior mean for filter) Suppose that Assumptions 2.1 and 2.3 hold for the initial point \(\varvec{u}\) and the prior q. Then there is a constant \(S^{\mathrm {fi}}_{\max }(\varvec{u},T)>0\) independent of \(\sigma _Z\) and h such that for \(0<h<h_{\max }(\varvec{u},T)\), \(\sigma _Z\sqrt{h}\le S^{\mathrm {fi}}_{\max }(\varvec{u},T)\), we have that

where the expectations are taken with respect to the random observations, and \(\underline{C}^{\mathrm {fi}}(\varvec{u},T)\), \(\overline{C}^{\mathrm {fi}}(\varvec{u},T)\), and \(C^{\mathrm {fi}}_{\mathrm {MAP}}(\varvec{u},T)\) are finite positive constants independent of \(\sigma _Z\) and h.

Remark 2.1

Theorems 2.3 and 2.4 in particular imply that when T is fixed, and \(\sigma _Z\sqrt{h}\) tends to 0, the ratio between the mean square errors of the posterior mean and MAP estimators conditioned on the initial position \(\varvec{u}\) tends to 1. Since the mean of the posterior distributions, \(\overline{\varvec{u}}^{{\mathrm {sm}}}\) (or \(\overline{\varvec{u}}^{{\mathrm {fi}}}\) for the filter), is the estimator \(U(\varvec{Y}_{0:k})\) that minimises \(\mathbb {E}(\Vert U(\varvec{Y}_{0:k})-\varvec{u}\Vert ^2)\) (or \(\mathbb {E}(\Vert U(\varvec{Y}_{0:k})-\varvec{u}(T)\Vert ^2)\) for the filter), our results imply that the mean square error of the MAP estimators is close to optimal when \(\sigma _Z\sqrt{h}\) is sufficiently small.

Next we propose a method to compute the MAP estimators. Let \(g^{\mathrm {sm}}: \mathcal {B}_R\rightarrow \mathbb {R}\) be

Then \(-g^{\mathrm {sm}}(\varvec{v})\) is the log-likelihood of the smoother, except that it does not contain the normalising constant term.

The following theorem shows that Newton’s method can be used to compute \(\hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}\) to arbitrary precision if it is initiated from a starting point \(\varvec{x}_0\) that is sufficiently close to the initial position \(\varvec{u}\). The proof is based on the concavity properties of the log-likelihood near \(\varvec{u}\). Based on this, an approximation for the push-forward MAP estimator \(\hat{\varvec{u}}^{{\mathrm {fi}}}\) can be then computed by moving forward the approximation of \(\hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}\) by time T according to the dynamics \(\varPsi _T\). [This will not increase the error by more than a factor of \(\exp (GT)\) according to (1.6)].

Theorem 2.5

(Convergence of Newton’s method to the MAP) Suppose that Assumptions 2.1 and 2.3 hold for the initial point \(\varvec{u}\) and the prior q. Then for every \(0<\varepsilon \le 1\), there exist finite constants \(S_{\max }^{\mathrm {sm}}(\varvec{u},T,\varepsilon )\), \(N^{\mathrm {sm}}(\varvec{u},T)\) and \(D_{\max }^{\mathrm {sm}}(\varvec{u},T)\in (0, N^{\mathrm {sm}}(\varvec{u},T)]\) (defined in (5.62) and (5.63)) such that the following holds. If \(\sigma _Z\sqrt{h}\le S_{\max }^{\mathrm {sm}}(\varvec{u},T,\varepsilon )\), and the initial point \(\varvec{x}_0\in \mathcal {B}_R\) satisfies that \(\Vert \varvec{x}_0-\varvec{u}\Vert < D_{\max }^{\mathrm {sm}}(\varvec{u},T)\), then the iterates of Newton’s method defined recursively as

satisfy that

Remark 2.2

The bound (2.31) means that the number of digits of precision essentially doubles in each iteration. In other words, only a few iterations are needed to approximate the MAP estimator with high precision if \(\varvec{x}_0\) is sufficiently close to \(\varvec{u}\).

2.3 Initial Estimator

First, we are going to estimate the derivatives \(\varvec{H} \varvec{D}^l \varvec{u}\) for \(l\in \mathbb {N}\) based on observations \(\varvec{Y}_{0:k}\). For technical reasons, the estimators will depend on \(\varvec{Y}_{0:\hat{k}}\) for some \(0\le \hat{k}\le k\) (which will be chosen depending on l). For any \(j\in \mathbb {N}\), we define \(\varvec{v}^{(j|\hat{k})}\in \mathbb {R}^{\hat{k}+1}\) as

For \(j_{\max }\in \mathbb {N}\), we define \(\varvec{M}^{(j_{\max }|\hat{k})}\in \mathbb {R}^{(j_{\max }+1)\times (\hat{k}+1)}\) as a matrix with rows \(\varvec{v}^{(0|\hat{k})}\), \(\ldots \), \(\varvec{v}^{(j_{\max }|\hat{k})}\). We denote by \(\varvec{I}_{j_{\max }+1}\) the identity matrix of dimension \(j_{\max }+1\), and by \(\varvec{e}^{(l|j_{\max })}\) a column vector in \(\mathbb {R}^{j_{\max }+1}\) whose every component is zero except the \(l+1\)th one which is 1. For any \(l\in \mathbb {N}\), \(j_{\max }\ge l\), \(\hat{k}\ge j_{\max }\), we define the vector

Then

is an estimator of \(\varvec{H} \varvec{D}^l \varvec{u}\). The fact that the matrix \(\varvec{M}^{(j_{\max }|\hat{k})}(\varvec{M}^{(j_{\max }|\hat{k})})'\) is invertible follows from the fact that \(\varvec{v}^{(0|\hat{k})},\ldots , \varvec{v}^{(j_{\max }|\hat{k})}\) are linearly independent (since the matrix with rows \(\varvec{v}^{(0|\hat{k})}, \ldots , \varvec{v}^{(\hat{k}|\hat{k})}\) is the so-called Vandermonde matrix whose determinant is nonzero). From (2.33), it follows that the norm of \(\varvec{c}^{(l|j_{\max }|\hat{k})}\) can be expressed as

To lighten the notation, for \(j_{\max }\ge l\) and \(\hat{k}\ge j_{\max }\), we will denote

The next proposition gives an error bound for this estimator, which we will use for choosing the values \(\hat{k}\) and \(j_{\max }\) given l.

Proposition 2.2

Suppose that \(j_{\max }\ge l\) and \(\hat{k}\ge 2j_{\max }+3\). Then for any \(0< \varepsilon \le 1\),

where

The following lemma shows that as \(\hat{k}\rightarrow \infty \), the constant \(C_{\varvec{M}}^{(l|j_{\max }|\hat{k})}\) tends to a limit.

Lemma 2.6

Let \(\varvec{K}^{(j_{\max })}\in \mathbb {R}^{j_{\max }+1\times j_{\max }+1}\) be a matrix with elements \(\varvec{K}^{(j_{\max })}_{i,j}:=\frac{1}{i+j-1}\) for \(1\le i,j\le j_{\max }+1\). Then for any \(l\in \mathbb {N}\), \(j_{\max }\ge l\), the matrix \(\varvec{K}^{(j_{\max })}\) is invertible, and

The proofs of the above two results are included in Sect. 5.4. Based on these results, we choose \(\hat{k}\in \{2j_{\max }+3,\ldots , k\}\) such that the function \(g(l,j_{\max },\hat{k})\) is minimised. We denote this choice of \(\hat{k}\) by \(\hat{k}_{\mathrm {opt}}(l,j_{\max })\). (If \(g(l,j_{\max },\hat{k})\) takes the same value for several \(\hat{k}\), then we choose the smallest of them.) By taking the derivative of \(g(l,j_{\max },\hat{k})\) in \(\hat{k}\), it is easy to see that it has a single minimum among positive real numbers achieved at

Based on this, we have

Finally, based on the definition of \(\hat{k}_{\mathrm {opt}}(l,j_{\max })\), we choose \(j_{\max }^{\mathrm {opt}}(l)\) as

where \(J_{\max }^{(l)}\in \{l,l+1,\ldots , \lfloor (k-3)/2 \rfloor \}\) is a parameter to be tuned. We choose the smallest possible \(j_{\max }\) where the minimum is taken. Based on these notations, we define our estimator for \(\varvec{H} \varvec{D}^l \varvec{u}\) as

The following theorem bounds the error of this estimator.

Theorem 2.7

Suppose that \(\varvec{u}\in \mathcal {B}_R\), and \(T=kh\). Then for any \(l\in \mathbb {N}\), there exist some positive constants \(h_{\max }^{(l)}\), \(s_{\max }^{(l)}(T)\) and \(S_{\max }^{(l)}(T)\) such that for any choice of the parameter \(J_{\max }^{(l)}\in \{l,l+1,\ldots , \lfloor (k-3)/2 \rfloor \}\), any \(\varepsilon >0\), \(0<s\le s_{\max }^{(l)}(T)\), \(0<h\le \frac{h_{\max }^{(l)} \cdot s}{\sqrt{1+\frac{\log \left( 1/\varepsilon \right) }{\log (d_o+1)}}}\), \(0\le \sigma _Z \sqrt{h}\le S_{\max }^{(l)}(T)\cdot \left( \frac{s}{\sqrt{1+\frac{\log \left( 1/\varepsilon \right) }{\log (d_o+1)}}}\right) ^{l+3/2}\),

The following theorem proposes a way of estimating \(\varvec{u}\) from estimates for the derivatives \(\left( \varvec{H} \varvec{D}^i \varvec{u}\right) _{0\le i\le j}\). This will be used as our initial estimator for Newton’s method.

Theorem 2.8

Suppose that for some \(j\in \mathbb {N}\) there is a function \(F: (\mathbb {R}^{d_o})^{j+1}\rightarrow \mathbb {R}^d\) independent of \(\varvec{u}\) such that

for \(\sum _{i=0}^{j}\left\| \varvec{H}\varvec{D}^i \varvec{u}-\varvec{x}^{(i)}\right\| ^2\le D_F(\varvec{u})\), for some positive constants \(C_F(\varvec{u})\), \(D_F(\varvec{u})\). Then \(F(\hat{\varPhi }^{(0)},\ldots , \hat{\varPhi }^{(j)})\) satisfies that if \(\sum _{i=0}^{j}\left\| \varvec{H}\varvec{D}^i \varvec{u}-\hat{\varPhi }^{(i)}\right\| ^2\le D_F(\varvec{u})\), then

In particular, under Assumption 2.2 and for j as determined therein, function F defined as

satisfies conditions (2.43) and (2.44).

Thus, the initial estimator can simply be chosen as

with the above two theorems implying that the estimate gets close to u for decreasing \(\sigma _Z \sqrt{h}\). Solving polynomial sum of squares minimisation problems of this type is a well-studied problem in optimization theory (see [26] for a theoretical overview), and several toolboxes are available (see [40, 47]). Besides (2.46), other problem-specific choices of F satisfying conditions (2.43) and (2.44) can also be used, as we explain in Sect. 3.2 for the Lorenz 96’ model.

2.4 Optimization Based Smoothing and Filtering

The following algorithm provides an estimator of \(\varvec{u}\) given \(\varvec{Y}_{0:k}\). We assume that either there is a problem-specific F satisfying conditions (2.43) and (2.44) for some \(j\in \mathbb {N}\), or we suppose that Assumption 2.2 is satisfied for the true initial point \(\varvec{u}\), and use F as defined in (2.46).

The following algorithm returns an online estimator of \(\left( \varvec{u}(t_i)\right) _{i\ge 0}\) given \(\varvec{Y}_{0:i}\) at time \(t_i\).

This algorithm can be modified to run Step 2 only at every K step for some \(K\in \mathbb {Z}_+\) (i.e. for \(i=k+lK\) for \(l\in \mathbb {N}\)) and propagate forward the estimate of the previous time we ran Step 2 at the intermediate time points. This increases the execution speed at the cost of the loss of some precision (depending on the choice of K).

Based on our results in the previous sections, we can see that if the assumptions of the results hold and \(\varDelta _{\min }\) is chosen sufficiently small then the estimation errors are of \(O(\sigma _Z \sqrt{h})\) with high probability for both algorithms (in the second algorithm, for \(i\ge k\)).

3 Application to the Lorenz 96’ Model

The Lorenz 96’ model is a d-dimensional chaotic dynamical system that was introduced in Lorenz [31]. In its original form, it is written as

where the indices are understood modulo d, and f is the so-called forcing constant. In this paper we are going to fix this as \(f=8\). (This is a commonly used value that is experimentally known to cause chaotic behaviour, see [32, 33].) As shown on page 16 of Sanz-Alonso and Stuart [42], this system can be written in the form (1.1), and the bilinear form \(\varvec{B}(\varvec{u},\varvec{u})\) satisfies the energy-conserving property (i.e. \(\left<\varvec{B}(\varvec{v},\varvec{v}),\varvec{v}\right>=0\) for any \(\varvec{v}\in \mathbb {R}^d\)).

We consider 2 observation scenarios for this model. In the first scenario, we assume that d is divisible by 6 and choose \(\varvec{H}\) such that coordinates 1, 2, 3, 7, 8, 9, \(\ldots \), \(d-5,d-4,d-3\) are observed directly, i.e. each observed batch of 3 is followed by a non-observed batch of 3. In this case, the computational speed is fast, and we are able to obtain simulation results for high dimensions. We consider first a small-dimensional case (\(d=12\)) to show the dependence of the MSE of the MAP estimator on the parameter k (the amount of observations), when the parameters \(\sigma _Z\) and h are fixed. After this, we consider a high-dimensional case (\(d=1{,}000{,}002\)) and look at the dependence of the MSE of the MAP estimator on \(\sigma _Z\sqrt{h}\).

In the second scenario, we choose \(\varvec{H}\) such that we observe the first 3 coordinates directly. We present some simulation results for \(d=60\) dimensions for this scenario.

In Paulin et al. [38], we have shown that in the second scenario, the system satisfies Assumption 2.2 for Lebesgue-almost every initial point \(\varvec{u}\in \mathbb {R}^d\). A simple modification of that argument shows that Assumption 2.2 holds for Lebesgue-almost every initial point \(\varvec{u}\in \mathbb {R}^d\) in the first observation scenario, too.

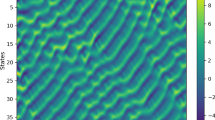

In each case, we have set the initial point as \(\varvec{u}=\left( \frac{d+1}{2d},\frac{d+2}{2d},\ldots , 1\right) \). (We have tried different randomly chosen initial points and obtained similar results.) Figures 1, 2 and 3 show the simulation results when applying Algorithm 1 (optimization based smoother) to each of these cases. Note that Algorithm 2 (optimization based filter) applied to this setting yields very similar results.

In Fig. 1, we can see that the MAP estimators RMSE (root-mean-square error) does not seem to decrease significantly after a certain amount of observations. This is consistent with the non-concentration of the smoother due to the existence of leaf sets, described in Paulin et al. [38]. Moreover, by increasing k above 100, we have observed that the Newton’s method often failed to improve significantly over the initial estimator, and the RMSE of the estimator became of order \(10^{-1}\), significantly worse than for smaller values of k. We believe that this is due to the fact that as we increase k, while keeping \(\sigma _Z\) and h fixed, the normal approximation of the smoother breaks down, and the smoother becomes more and more multimodal. Due to this, we are unable to find the true MAP when starting from the initial estimator and settle down at another mode. To conclude, for optimal performance, it is important to tune the parameter k of the algorithm.

In Fig. 2, we present results for a \(d=1{,}000{,}002\)-dimensional Lorenz 96’ system, with half of the coordinates observed. The observation time is \(T=10^{-5}\). The circles correspond to data points with \(h=10^{-6}\) (so \(k=10\)), while the triangles correspond to data points with \(h=2\cdot 10^{-7}\) (so \(k=50\)). The plots show that the method works as expected for this high-dimensional system and that the RMSE of the estimator is proportional to \(\sigma _Z \sqrt{h}\).

Finally, in Fig. 3, we present results for a \(d=60\)-dimensional system with the first 3 coordinates observed. The observation time is \(T=10^{-3}\). The circles correspond to data points with \(h=5 \cdot 10^{-5}\), while the triangles correspond to data points with \(h=2.5\cdot 10^{-5}\). We can see that Algorithm 1 is able to handle a system which has only a small fraction of its coordinates observed. Note that the calculations are done with numbers having 360 decimal digits of precision. Such high precision is necessary because the interaction between the 3 observed coordinates of the system and some of the non-observed coordinates is weak.

The requirements on the observations noise \(\sigma _Z\) and observation time step h for the applicability of our method depend heavily on the parameters of the model (such as the dimension d) and on the observation matrix \(\varvec{H}\). In the first simulation (Fig. 1), relatively large noise (\(\sigma _Z=10^{-3}\)) and large time step (\(h=10^{-2}\)) were possible. For the second simulation (Fig. 1), due to the high dimensionality of the model (\(d=1{,}000{,}002\)), and the sparse approximation used in the solver, we had to choose smaller time step (\(h\le 10^{-6}\)) and smaller observation noise (\(\sigma _Z\le 10^{-6}\)). Finally, in the third simulation (Fig. 3), since only a very small fraction of the \(d=60\) coordinates is observed, the observation noise has to be very small in order for us to be able to recover the unobserved coordinates (\(\sigma _Z\le 10^{-120}\)).

In all of the above cases, the error of the MAP estimator is several orders of magnitude less than the error of the initial estimator. When comparing these results with the simulation results of Law et al. [28] using the 3D-Var and extended Kalman filter methods for the Lorenz 96’ model, it seems that our method improves upon them, since it allows for larger dimensions and smaller fraction of coordinates observed.

In the following sections, we describe the theoretical and technical details of these simulations. First, in Sect. 3.1, we bound some constants in our theoretical results for the Lorenz 96’ model. In Sect. 3.2, we explain the choice of the function F in our initial estimator (see Theorem 2.8) in the two observation scenarios. In Sect. 3.3, we adapt the Taylor expansion method for numerically solving ODEs to our setting. Finally, based on these preliminary results, we give the technical details of the simulations in Sect. 3.4.

3.1 Some Bounds for Lorenz 96’ Model

In this section, we will bound some of the constants in Sect. 1.1 for the Lorenz 96’ model. Since \(\varvec{A}\) is the identity matrix, we have \(\Vert \varvec{A}\Vert =1\) which satisfies that \(\left<\varvec{v},\varvec{A}\varvec{v}\right>\ge \lambda _{\varvec{A}}\varvec{v}\) for every \(\varvec{v}\in \mathbb {R}^d\) for \(\lambda _{\varvec{A}}=1\). The condition that \(\left<\varvec{B}(\varvec{v},\varvec{v}),\varvec{v}\right>=0\) for every \(\varvec{v}\in \mathbb {R}^d\) was verified for the Lorenz 96’ model, see Property 3.1 of Law et al. [28]. For our choice \(f=8\), we have \(\Vert \varvec{f}\Vert =8\sqrt{d}\). Thus, based on (1.5), the trapping ball assumption (1.3) is satisfied for the choice \(R:=\frac{\Vert \varvec{f}\Vert }{\lambda _{\varvec{A}}}=8\sqrt{d}\).

For \(\varvec{B}\), given any \(\varvec{u},\varvec{v}\in \mathbb {R}^d\) such that \(\Vert \varvec{u}\Vert ,\Vert \varvec{v}\Vert \le 1\), by the arithmetic mean–root-mean-square inequality, and the inequality \((ab)^2\le \frac{a^2+b^2}{2}\), we have

thus, \(\Vert \varvec{B}\Vert \le 2\). If d is divisible by 2, then the choice \(u_i=v_i=\frac{(-1)^i}{\sqrt{d}}\) shows that this bound is sharp, and \(\Vert \varvec{B}\Vert =2\). For simplicity, we have chosen the prior q as the uniform distribution on \(\mathcal {B}_R\). Based on these, and definitions (1.16) and (1.6), we have

3.2 Choice of the Function F in the Initial Estimator

In this section, we will construct a computationally simple function F satisfying the conditions of Theorem 2.8 for the two observation scenarios.

First, we look at the second scenario, when only the first 3 coordinates are observed. We are going to show that for \(j=\left\lceil \frac{d-3}{3}\right\rceil \), it is possible to construct a function \(F: (\mathbb {R}^{d_o})^{j+1}\rightarrow \mathbb {R}^d\) such that F is computationally simple, Lipschitz in a neighbourhood of \(\varvec{u}\), and \(F\left( \varvec{H} \varvec{u}, \ldots , \varvec{H}\varvec{D}^j \varvec{v}\right) =\varvec{u}\), and thus satisfies the conditions of Theorem 2.8. Notice that

so for \(m=0\), we have

In general, for \(m\ge 1\), by differentiating (3.3) m times, we obtain that

thus, for any \(m\ge 1\),

Thus, for any \(m\in \mathbb {N}\), we have a recursion for the mth derivative of \(u_i\) based on the first \(m+1\) derivatives of \(u_{i-1}\) and the first m derivatives of \(u_{i-2}\) and \(u_{i-3}\). Based on this recursion, and the knowledge of the first j derivatives of \(u_1\), \(u_2\) and \(u_3\), we can compute the first \(j-1\) derivatives of \(u_4\), then the first \(j-2\) derivatives of \(u_5\), etc., and finally the zeroth derivative of \(u_{3+j}\) (i.e. \(u_{3+j}\) itself).

In the other direction,

therefore, for \(m=0\), we have

By differentiating (3.7) m times, we obtain that

thus,

Thus, for any \(m\in \mathbb {N}\), we have a recursion allowing us to compute the first m derivatives of \(u_i\) based on the first \(m+1\) derivatives of \(u_{i+2}\) and the first m derivatives of \(u_{i+1}\) and \(u_{i+3}\) (with indices considered modulo d). This means that given the first j derivatives of \(u_1\), \(u_2\) and \(u_3\), we can compute the first \(j-1\) derivatives of \(u_d\) and \(u_{d-1}\), then the first \(j-2\) derivatives of \(u_{d-2}\) and \(u_{d-3}\), etc., and finally the zeroth derivatives of \(u_{d+2-2j}, u_{d+1-2j}\).

Based on the choice \(j:=\left\lceil \frac{d-3}{3}\right\rceil \), these recursions together define a function F for this case. From the recursion formulas, and the boundedness of \(\varvec{u}\), it follows that F is Lipschitz in a neighbourhood of \(\left( \varvec{H}\varvec{u}, \ldots , \varvec{H} \varvec{D}^j \varvec{u}\right) \) as long as none of the coordinates of \(\varvec{u}\) is 0 (thus for Lebesgue-almost every \(\varvec{u}\in \mathcal {B}_R\)).

Now we look at the first observation scenario, i.e. suppose that d is divisible by 6, and we observe coordinates \((6i+1, 6i+2, 6i+3)_{0\le i\le d/6-1}\). In this case, we choose \(j=1\) and define \(F\left( \varvec{H} \varvec{u}, \varvec{H} \varvec{D}\varvec{u}\right) \) based on formulas (3.4) and (3.8), so that we can express \(u_{6i+4}, u_{6i-1}, u_{6i-2}\) based on \(u_{6i+1}, u_{6i+2}, u_{6i+3}\) and \(\varvec{D}u_{6i+1}, \varvec{D}u_{6i+2}, \varvec{D}u_{6i+3}\) for \(0\le i\le d/6 -1\) (with indices counted modulo d). Based on Eqs. (3.4) and (3.8), we can see that F defined as above satisfies the conditions of Theorem 2.8 as long as none of the coordinates of u is 0 (thus for Lebesgue-almost every \(\varvec{u}\in \mathcal {B}_R\)). In both scenarios, F is computationally simple.

We note that in the above argument, F might be not defined if some of the components of \(\varvec{u}\) are 0. (And the proof of Assumption 2.2 in Paulin et al. [38] also requires that none of the components are 0.) Moreover, due to the above formulas, some numerical instability might arise when some of the components of \(\varvec{u}\) are very small in absolute value. In Section A.2 of “Appendix”, we state a simple modification of the initial estimator of Theorem 2.8 based on the above F that is applicable even when some of the components of \(\varvec{u}\) are zero.

3.3 Numerical Solution of Chaotic ODEs Based on Taylor Expansion

Let \(\varvec{v}\in \mathcal {B}_R\) and \(i_{\max }\in \mathbb {N}\). The following lemma provides some simple bounds that allow us to approximate the quantities \(\varvec{v}(t)=\varPsi _t(\varvec{v})\) for sufficiently small values of t by the sum of the first \(i_{\max }\) terms in their Taylor expansion. These bounds will be used to simulate system (1.1) and to implement Newton’s method as described in (2.30).

Lemma 3.1

For any \(\varvec{v}\in \mathcal {B}_R\), we have

where \(\varvec{D}^0=\varvec{v}\), and for \(i\ge 1\), we have the recursion

Proof

The bounds on the error in the Taylor expansion follow from (1.14). The recursion equation is just (1.13). \(\square \)

The following proposition shows a simple way of estimating \(\varvec{v}(t)\) for larger values t. (The bound (3.11) is not useful for \(t\ge \frac{1}{C_{{\mathrm {der}}}}\).) For \(\varvec{v}\in \mathbb {R}^d\), we let

be the projection of \(\varvec{v}\) on \(\mathcal {B}_R\).

Proposition 3.1

Let \(\varvec{v}\in \mathcal {B}_R\), and suppose that \(\varDelta < \frac{1}{C_{{\mathrm {der}}}}\). Let \(\widehat{\varvec{v}}(0)=\varvec{v}\), \(\widehat{\varvec{v}}(\varDelta ):=P_{\mathcal {B}_R}\left( \sum _{i=0}^{i_{\max }}\frac{\varDelta ^i}{i!} \varvec{D}^i \varvec{v}\right) \), and similarly, given \(\widehat{\varvec{v}}(j \varDelta )\), define

Finally, let \(\delta :=t-\lfloor \frac{t}{\varDelta }\rfloor \varDelta \) and \(\widehat{\varvec{v}}(t):=P_{\mathcal {B}_R}\left( \sum _{i=0}^{i_{\max }}\frac{\delta ^i}{i!} \varvec{D}^i( \widehat{\varvec{v}}(\lfloor \frac{t}{\varDelta }\rfloor \varDelta ))\right) .\) Then the error is bounded as

Proof

Using (3.11) and the fact that the projection \(P_{\mathcal {B}_R}\) decreases distances we know that for every \(j\in \left\{ 0, 1,\ldots ,\lceil t/\varDelta \rceil -1\right\} \),

By inequality (1.6), it follows that

and using the triangle inequality, we have

so the claim follows. \(\square \)

3.4 Simulation Details

The algorithms were implemented in Julia and ran on a computer with a 2.5Ghz Intel Core i5 CPU. In all cases, the convergence of Newton’s method up to the required precision (chosen to be much smaller than the RMSE) occurred in typically 3–8 steps.

In the case of Fig. 1 (\(d=12\), half of the coordinates observed) the observation time T is much larger than \(\frac{1}{C_{{\mathrm {der}}}}\), so we have used the method of Proposition 3.1 to simulate from the system. The gradient and Hessian of the function \(g^{\mathrm {sm}}\) were approximated numerically based on finite difference formulas (requiring \(O(d^2)\) simulations from the ODE). We have noticed that in this case, the Hessian has elements with significantly large absolute value even far away from the diagonal. The running time of Algorithm 1 was approximately 1 s. The RMSEs were numerically approximated from 20 parallel runs. The parameters \(J_{\max }^{(0)}\) and \(J_{\max }^{(1)}\) of the initial estimator were chosen as 1.

In the case of Fig. 1 (\(d=1{,}000{,}002\), half of the coordinates observed), we could not use the same simulation technique as previously (finite difference approximation of the gradient and Hessian of \(g^{\mathrm {sm}}\)) because of the huge computational and memory requirements. Instead, we have computed the Newton’s method iterations described in (2.30) based on preconditioned conjugate gradient solver, with the gradient and the product of the Hessian with a vector evaluated based on adjoint methods as described by Eqs. (3.5)–(3.7) and Section 3.2.1 of Paulin et al. [37] (see also Le Dimet et al. [30]). This means that the Hessians were approximated using products of Jacobian matrices that were stored in sparse format due to the local dependency of Eq. (3.1). This efficient storage has allowed us to run Algorithm 1 in approximately 20–40 min in the simulations. We made 4 parallel runs to estimate the MSEs. The parameters \(J_{\max }^{(0)}\) and \(J_{\max }^{(1)}\) of the initial estimator were chosen as 2.

Finally, in the case of Fig. 3 (\(d=60\), first 3 coordinates observed), we used the same method as in the first example (finite difference approximation of the gradient and Hessian of \(g^{\mathrm {sm}}\)). The running time of Algorithm 1 was approximately 1 hour (in part due to the need of using arbitrary precision arithmetics with hundreds of digits of precision). The MSEs were numerically approximated from 2 parallel runs. The parameters \(J_{\max }^{(0)}, \ldots , J_{\max }^{(19)}\) of the initial estimator were chosen as 24.

4 Preliminary Results

The proof of our main theorems are based on several preliminary results. Let

These quantities are related to the log-likelihoods of the smoothing distribution and its Gaussian approximation as

where \(\succ \) denotes the positive definite order (i.e. \(\varvec{A}\succ \varvec{B}\) if and only if \(\varvec{A}-\varvec{B}\) is positive definite). Similarly, \(\succeq \) denotes the positive semidefinite order.

The next three propositions show various bounds on the log-likelihood-related quantity \(l^{{\mathrm {sm}}}(\varvec{v})\). Their proof is included in Section A.1 of “Appendix”.

Proposition 4.1

(A lower bound on the tails of \(l^{{\mathrm {sm}}}(\varvec{v})\)) Suppose that Assumption 2.1 holds for \(\varvec{u}\), and then for any \(0<\varepsilon \le 1\), for every \(\sigma _Z>0\), \(h\le h_{\max }(\varvec{u},T)\), we have

where

Proposition 4.2

(A bound on the difference between \(l^{{\mathrm {sm}}}(\varvec{v})\) and \(l^{{\mathrm {sm}}}_{{\mathcal {G}}}(\varvec{v})\)) If Assumption 2.1 holds for \(\varvec{u}\), then for any \(0<\varepsilon \le 1\), \(\sigma _Z>0\), \(0< h\le h_{\max }(\varvec{u},T)\), we have

where

Proposition 4.3

(A bound on the difference between \(\nabla l^{{\mathrm {sm}}}(\varvec{v})\) and \(\nabla l^{{\mathrm {sm}}}_{{\mathcal {G}}}(\varvec{v})\)) If Assumption 2.1 holds for \(\varvec{u}\), then for any \(0<\varepsilon \le 1\), \(\sigma _Z>0\), \(0<h\le h_{\max }(\varvec{u},T)\), we have

where

The following lemma is useful for controlling the total variation distance of two distributions that are only known up to normalising constants.

Lemma 4.1

Let f and g be two probability distributions which have densities on \(\mathbb {R}^d\) with respect to the Lebesgue measure. Then their total variation distance satisfies that for any constant \(c>0\),

Proof

We are going to use the following characterisation of the total variation distance,

Based on this, the result is trivial for \(c=1\). If \(c>1\), then we have

and the \(c<1\) case is similar. \(\square \)

The following lemma is useful for controlling the Wasserstein distance of two distributions.

Lemma 4.2

Let f and g be two probability distributions which have densities on \(\mathbb {R}^d\) with respect to the Lebesgue measure, also denoted by \(f(\varvec{x})\) and \(g(\varvec{x})\). Then their Wasserstein distance (defined as in (2.13)) satisfies that for any \(\varvec{y}\in \mathbb {R}^d\),

Proof

Let \(m(\varvec{x}):=\min (f(\varvec{x}),g(\varvec{x}))\), \(\gamma :=\int _{\varvec{x}\in \mathbb {R}^d}m(\varvec{x})d\varvec{x}\), \(\hat{f}(\varvec{x}):=f(\varvec{x})-m(\varvec{x})\), \(\hat{g}(\varvec{x}):=g(\varvec{x})-m(\varvec{x})\). Suppose first that \(\gamma \ne 0\) and \(1-\gamma \ne 0\). Let \(\nu ^{(1)}_{f,g}\) denote the distribution of a random vector \((\varvec{X},\varvec{X})\) on \(\mathbb {R}^d\times \mathbb {R}^d\) for a random variable \(\varvec{X}\) with distribution with density \(m(\varvec{x})/\gamma \). (The two components are equal.) Let \(\nu ^{(2)}_{f,g}\) be a distribution on \(\mathbb {R}^d\times \mathbb {R}^d\) with density \(\nu ^{(2)}_{f,g}(\varvec{x}_1,\varvec{x}_2):=\frac{\hat{f}(\varvec{x}_1)}{1-\gamma }\cdot \frac{\hat{g}(\varvec{x}_2)}{1-\gamma }\). (The two components are independent.)

We define the optimal coupling of f and g as a probability distribution \(\nu _{f,g}\) on \(\mathbb {R}^d\times \mathbb {R}^d\) as a mixture of \(\nu ^{(1)}_{f,g}\) and \(\nu ^{(2)}_{f,g}\), that is, for any Borel-measurable \(E\in \mathbb {R}^d\times \mathbb {R}^d\),

It is easy to check that this distribution has marginals f and g, so by (2.13), and the fact that \(\int _{\varvec{x}_1\in \mathbb {R}^d}\hat{f}(\varvec{x}_1) d\varvec{x}_1= \int _{\varvec{x}_2\in \mathbb {R}^d}\hat{g}(\varvec{x}_2) d\varvec{x}_2=1-\gamma \), we have

and the result follows from the fact that \(\hat{f}(\varvec{x})+\hat{g}(\varvec{x})=|f(\varvec{x})-g(\varvec{x})|\). Finally, if \(\gamma =0\) then we can set \(\nu _{f,g}\) as \(\nu ^{(2)}_{f,g}\), and the same argument works, while if \(\gamma =1\), then both sides of the claim are zero (since \(f(\varvec{x})=g(\varvec{x})\) Lebesgue-almost surely). \(\square \)

The following two lemmas show concentration bounds for the norm of \(\varvec{B}_k\) and the smallest eigenvalue of \(\varvec{A}_k\). The proofs are included in Section A.1 of “Appendix”. (They are based on matrix concentration inequalities.)

Lemma 4.3

Suppose that Assumption 2.1 holds for \(\varvec{u}\), then the random vector \(\varvec{B}_k\) defined in (2.8) satisfies that for any \(t\ge 0\),

Thus, for any \(0<\varepsilon \le 1\), we have

Lemma 4.4

Suppose that Assumption 2.1 holds for \(\varvec{u}\), then the random matrix \(\varvec{A}_k\) defined in (2.7) satisfies that for any \(t\ge 0\),

thus, for any \(0<\varepsilon \le 1\), we have

The following proposition bounds the derivatives of the log-determinant of the Jacobian.

Proposition 4.4

The function \(\log \det \varvec{J}\varPsi _T: \mathcal {B}_R\rightarrow \mathbb {R}\) is continuously differentiable on \(\mathrm {int}(\mathcal {B}_R)\), and its derivative can be bounded as

Proof

Let \(\mathbb {M}^d\) denote the space of \(d\times d\) complex matrices. By the chain rule, we can write the derivatives of the log-determinant as functions of the derivatives of the determinant, which were shown to exist in Bhatia and Jain [5]. Following their notation, we define the kth derivative of \(\det \) at a point \(\varvec{M}\in \mathbb {M}^d\) as a map \(D^k \det \varvec{M}\) from \((\mathbb {M}^d)^k\) to \(\mathbb {C}\) with value

They have defined the norm of kth derivative of the determinant as

From Theorem 4 of Bhatia and Jain [5], it follows that for any \(k\ge 1\), the norm of the kth derivative can be bounded as

Based on the chain rule, the norm first derivative of the log-determinant can be bounded as

and the result follows by (1.21) and (4.10) for \(k=1\) and \(\varvec{M}=\varvec{J}\varPsi _T(\varvec{v})\). \(\square \)

5 Proof of the Main Results

5.1 Gaussian Approximation

In the following two subsections, we are going to prove our Gaussian approximation results for the smoother and the filter, respectively.

5.1.1 Gaussian Approximation for the Smoother

In this section, we are going to describe the proof of Theorem 2.1. First, we will show that the result can be obtained by bounding 3 separate terms, then bounds these in 3 lemmas and finally, combine them.

By choosing the constants \(C^{(1)}_{\mathrm {TV}}(\varvec{u},T)\) and \(C^{(2)}_{\mathrm {TV}}(\varvec{u},T)\) sufficiently large, we can assume that \(C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )\) satisfies the following bounds,

Based on the assumption that \(\sigma _Z\sqrt{h}\le \frac{1}{2}C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), (5.1), and Lemma 4.4, we have

where \(C_{\Vert \varvec{A}\Vert }\) is defined as in (2.15). The event \(\lambda _{\min }(\varvec{A}_k)> \frac{c(\varvec{u},T)}{2h}\) implies in particular that \(\varvec{A}_k\) is positive definite. From Lemma 4.3, we know that

From Proposition 4.1, we know that

Finally, from Proposition 4.2, it follows that

In the rest of the proof, we are going to assume that all four of the events in Eqs. (5.6), (5.7), (5.8) and (5.9) hold. From the above bounds, we know that this happens with probability at least \(1-\varepsilon \).

Let \(\varvec{Z}\) be a d-dimensional standard normal random vector, then from the definition of \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}\), it follows that when conditioned on \(\varvec{B}_k\) and \(\varvec{A}_k\),

has distribution \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\cdot |\varvec{Y}_{0:k})\). This fact will be used in the proof several times.

Since the normalising constant of the smoother \(C_{k}^{\mathrm {sm}}\) of (2.4) is not known, it is not easy to bound the total variation distance of the two distributions directly. Lemma 4.1 allows us to deal with this problem by rescaling the smoothing distribution suitably. We define the rescaled smoothing distribution (which is not a probability distribution in general) as

which is of similar form as the Gaussian approximation

Let

then based on the assumption that \(\sigma _Z\sqrt{h}\le C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), (5.2) and (5.3), it follows that

Let \(B_{\rho }:=\{\varvec{v}\in \mathbb {R}^d: \Vert \varvec{v}-\varvec{u}\Vert \le \rho (h,\sigma _Z)\}\) and denote by \(B_\rho ^c\) its complement in \(\mathbb {R}^d\). Then by Lemma 4.1, we have

By Lemma 4.2, we can bound the Wasserstein distance of \(\mu ^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k})\) and \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\cdot |\varvec{Y}_{0:k})\) as

In the following six lemmas, we bound the three terms in inequalities (5.16) and (5.17).

Lemma 5.1

Using the notations and assumptions of this section, we have

Proof

Note that by (5.14), we know that \(B_{\rho }\subset \mathcal {B}_R\), and

Now using (5.14), we can see that \(\sup _{\varvec{v}\in B_{\rho }} \left| \log \left( \frac{q(\varvec{v})}{q(\varvec{u})}\right) \right| \le C_{q}^{(1)}\cdot \frac{1}{2C_{q}^{(1)}}\le \frac{1}{2}\), and using (5.9), we have \(\left| \frac{(l^{{\mathrm {sm}}}(\varvec{v})-l^{{\mathrm {sm}}}_{{\mathcal {G}}}(\varvec{v}))}{2\sigma _Z^2}\right| \le \frac{1}{2}\). Using the fact that \(|1-\exp (x)|\le 2|x|\) for \(-1\le x\le 1\), and the bounds \(|\log (q(\varvec{v})/q(\varvec{u}))|\le C_{q}^{(1)}\Vert \varvec{v}-\varvec{u}\Vert \) and (5.9), we can see that for every \(\varvec{v}\in B_{\rho }\),

therefore,

Let \(\varvec{Z}\) denote a d-dimensional standard normal random vector, then it is easy to see that \(\mathbb {E}(\Vert \varvec{Z}\Vert )\le (\mathbb {E}(\Vert \varvec{Z}\Vert ^2))^{1/2}\le d^{1/2}\) and \(\mathbb {E}(\Vert \varvec{Z}\Vert ^3)\le (\mathbb {E}(\Vert \varvec{Z}\Vert ^4))^{3/4}\le (3d^2)^{3/4}\le 3d^{3/2}\). Since we have assumed that the events in (5.6) and (5.7) hold, we know that

Finally, it is not difficult to show that for any \(a,b\ge 0\), \((a+b)^3\le 4(a^3+b^3)\). Therefore,

thus, the result follows. \(\square \)

Lemma 5.2

Using the notations and assumptions of this section, we have

Proof

Note that if \(\varvec{Z}\) is a d-dimensional standard normal random vector, then by Theorem 4.1.1 of Tropp [46], we have

Since the random variable \(\varvec{W}\) defined in (5.10) is distributed as \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\cdot |\varvec{Y}_{0:k})\) when conditioned on \(\varvec{A}_k, \varvec{B}_k\), we have

Based on the assumption that \(\sigma _Z\sqrt{h}\le C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), and (5.4), we have \(\frac{2 C_{\varvec{B}}\left( \frac{\varepsilon }{4}\right) }{c(\varvec{u},T)} \cdot \sigma _Z\sqrt{h}\le \frac{\rho (h,\sigma _Z)}{2}\), and

and the result follows by (5.20). \(\square \)

Lemma 5.3

Using the notations and assumptions of this section, we have

Proof

Let \(q_{\max }:=\sup _{\varvec{v}\in \mathcal {B}_R}q(\varvec{v})\). By our assumption that the event in (5.8) holds, we have

where in the last step we have used the fact that \(\det (\varvec{A}_k)^{1/2}\le \Vert \varvec{A}_k\Vert ^{d/2}\). Based on the assumption that \(\sigma _Z\sqrt{h}\le C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), and (5.5), we have

therefore by (5.20), we have

and the claim of the lemma follows by (5.20). \(\square \)

From inequality (5.16) and Lemmas 5.1, 5.2 and 5.3, it follows that under the assumptions of this section, we can set \(C_{\mathrm {TV}}^{(1)}(\varvec{u},T)\) and \(C_{\mathrm {TV}}^{(2)}(\varvec{u},T)\) sufficiently large such that we have

Now we bound the three terms needed for the Wasserstein distance.

Lemma 5.4

Using the notations and assumptions of this section, we have

for some finite positive constants \(C_{1}^*(\varvec{u},T)\), \(C_{2}^*(\varvec{u},T)\).

Proof

Note that for any \(\varvec{v}\in \mathcal {B}_{\rho }\), we have

From (5.21), and the fact that \(\tilde{\mu }^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k})\) is a rescaled version of \(\tilde{\mu }^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k})\), it follows that for \(\sigma _Z\sqrt{h}\le \frac{1}{2}C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), we have

By (5.18) and (5.14), it follows that \(\frac{\tilde{\mu }^{{\mathrm {sm}}}(\varvec{v}|\varvec{Y}_{0:k})}{\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\varvec{v}|\varvec{Y}_{0:k})}\le 3\) for every \(\varvec{v}\in \mathcal {B}_{\rho }\). By using these and bounding \(\left| \frac{\tilde{\mu }^{{\mathrm {sm}}}(\varvec{v}|\varvec{Y}_{0:k})}{\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\varvec{v}|\varvec{Y}_{0:k})}-1\right| \) via (5.18), we obtain that

Based on (5.19), we have

where \(\varvec{Z}\) is a d-dimensional standard normal random vector. The claimed result now follows using the fact that \(\mathbb {E}(\Vert \varvec{Z}\Vert )\le \sqrt{d}\), \(\mathbb {E}(\Vert \varvec{Z}\Vert ^2)\le d\), and \(\mathbb {E}(\Vert \varvec{Z}\Vert ^4)\le 3d^2\). \(\square \)

Lemma 5.5

Using the notations and assumptions of this section, we have

Proof

Using (5.22), Lemma 5.3, and the fact that \(\sigma _Z\sqrt{h}\le \frac{1}{2}C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), we have

and the result follows from \(\int _{\varvec{v}\in B_\rho ^c}\Vert \varvec{v}-\varvec{u}\Vert \mu ^{{\mathrm {sm}}}(\varvec{v}|\varvec{Y}_{0:k}) \hbox {d}\varvec{v}\le 2R\cdot \mu ^{{\mathrm {sm}}}(B_\rho ^c|\varvec{Y}_{0:k})\). \(\square \)

Lemma 5.6

Using the notations and assumptions of this section, we have

for some finite positive constants \(C_{3}^*(\varvec{u},T)\), \(C_{4}^*(\varvec{u},T)\).

Proof

Let \(\varvec{W}\) be defined as in (5.10) and \(\varvec{Z}\) be d-dimensional standard normal. Using the fact that for a nonnegative-valued random variable X, we have \(\mathbb {E}(X)=\int _{t=0}^{\infty }\mathbb {P}(X\ge t)dt\), it follows that

and the claim of the lemma follows. (We have used Lemma 5.2 in the last step.) \(\square \)

From inequality (5.17) and Lemmas 5.1, 5.2 and 5.3, it follows that under the assumptions of this section, for some appropriate choice of constants \(C_{\mathrm {W}}^{(1)}(\varvec{u},T)\) and \(C_{\mathrm {W}}^{(2)}(\varvec{u},T)\), we have

Proof of Theorem 2.1

The claim of the theorem follows from inequalities (5.21) and (5.23), and the fact that the assumption that all four of the events in Eqs. (5.6), (5.7), (5.8), and (5.9) hold happens with probability at least \(1-\varepsilon \). \(\square \)

5.1.2 Gaussian Approximation for the Filter

In this section, we are going to describe the proof of Theorem 2.2. We start by some notation. We define the restriction of \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\cdot |\varvec{Y}_{0:k})\) to \(\mathcal {B}_R\), denoted by \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}|\mathcal {B}_R}(\cdot |\varvec{Y}_{0:k})\) as

This is a probability distribution which is supported on \(\mathcal {B}_R\). We denote its push-forward map by \(\varPsi _T\) as \(\eta ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\), i.e. if a random vector \(\varvec{X}\) is distributed as \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}|\mathcal {B}_R}(\cdot |\varvec{Y}_{0:k})\), then \(\eta ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\) denotes the distribution of \( \varPsi _T(\varvec{X})\).

The proof uses a coupling argument stated in the next two lemmas that allows us to deduce the results based on the Gaussian approximation of the smoother (Theorem 2.1).

Lemma 5.7

(Coupling argument for total variation distance bound) The total variation distance of the filtering distribution and its Gaussian approximation can be bounded as follows,

Proof

First, notice that by Proposition 3(f) of Roberts and Rosenthal [41], we have

By Proposition 3(g) of Roberts and Rosenthal [41], there is a coupling \((\varvec{X}_1, \varvec{X}_2)\) of random vectors such that \(\varvec{X}_1\sim \mu ^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k})\), \(\varvec{X}_2\sim \mu ^{{\mathrm {sm}}}_{\mathcal {G}|\mathcal {B}_R}(\cdot |\varvec{Y}_{0:k})\), and \(\mathbb {P}(\varvec{X}_1\ne \varvec{X}_2|\varvec{Y}_{0:k})=d_{{\mathrm {TV}}}(\mu ^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k}),\mu ^{{\mathrm {sm}}}_{\mathcal {G}|\mathcal {B}_R}(\cdot |\varvec{Y}_{0:k}))\). Given this coupling, we look at the coupling of the transformed random variables \((\varPsi _T(\varvec{X}_1),\varPsi _T(\varvec{X}_2))\). This obviously satisfies that \(\mathbb {P}(\varPsi _T(\varvec{X}_1)\ne \varPsi _T(\varvec{X}_2)|\varvec{Y}_{0:k})\le d_{{\mathrm {TV}}}(\mu ^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k}),\mu ^{{\mathrm {sm}}}_{\mathcal {G}|\mathcal {B}_R}(\cdot |\varvec{Y}_{0:k}))\). Moreover, we have \(\varPsi _T(\varvec{X}_1)\sim \mu ^{{\mathrm {fi}}}(\cdot |\varvec{Y}_{0:k})\) and \(\varPsi _T(\varvec{X}_2)\sim \eta ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\); thus,

The statement of the lemma now follows by the triangle inequality. \(\square \)

Lemma 5.8

(Coupling argument for Wasserstein distance bound) The Wasserstein distance of the filtering distribution and its Gaussian approximation satisfies that

Proof

By Theorem 4.1 of Villani [48], there exists a coupling of random variables \((\varvec{X}_1,\varvec{X}_2)\) (called the optimal coupling) such that \(\varvec{X}_1\sim \mu ^{{\mathrm {sm}}}(\cdot |\varvec{Y}_{0:k})\), \(\varvec{X}_2\sim \mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\cdot |\varvec{Y}_{0:k})\), and

Let \(\hat{\varvec{X}}_2:=\varvec{X}_2\cdot 1_{[\Vert \varvec{X}_2\Vert \le R]}+\frac{\varvec{X}_2}{\Vert \varvec{X}_2\Vert }\cdot R\cdot 1_{[\Vert \varvec{X}_2\Vert >R]}\) denote the projection of \(\varvec{X}_2\) on the ball \(\mathcal {B}_R\). Then using the fact that \(\varvec{X}_1\in \mathcal {B}_R\), it is easy to see that \(\Vert \hat{\varvec{X}}_2-\varvec{X}_1\Vert \le \Vert \varvec{X}_2-\varvec{X}_1\Vert \), and therefore,

where \(\mathcal {L}(\hat{\varvec{X}}_2|\varvec{Y}_{0:k})\) denotes the distribution of \(\hat{\varvec{X}}_2\) conditioned on \(\varvec{Y}_{0:k}\).

Moreover, by the definitions, for a given \(\varvec{Y}_{0:k}\), it is easy to see we can couple random variables \(\hat{\varvec{X}}_2\) and \(\tilde{\varvec{X}}_2\sim \mu ^{{\mathrm {sm}}}_{\mathcal {G}|\mathcal {B}_R}(\cdot |\varvec{Y}_{0:k})\) such that they are the same with probability at least \(1-\mu ^{{\mathrm {sm}}}_{\mathcal {G}}(\mathcal {B}_R^c)\). Since the maximum distance between two points in \(\mathcal {B}_R\) is at most 2R, it follows that

By the triangle inequality, we obtain that

and by (1.6), it follows that

The claim of the lemma now follows by the triangle inequality. \(\square \)

As we can see, the above results still require us to bound the total variation and Wasserstein distances between the distributions \(\eta ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\) and \(\mu ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\). Let

then the density of \(\mu ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\) can be written as

Since the normalising constant is not known for the case of \(\eta ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\), we define a rescaled version \(\tilde{\eta }^{{\mathrm {fi}}}_{{\mathcal {G}}}(\cdot |\varvec{Y}_{0:k})\) with density

The following lemma bounds the difference between the logarithms of \(\tilde{\eta }^{{\mathrm {fi}}}_{{\mathcal {G}}}(\varvec{v}|\varvec{Y}_{0:k})\) and \(\mu ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\varvec{v}|\varvec{Y}_{0:k})\).

Lemma 5.9

For any \(\varvec{v}\in \varPsi _T(\mathcal {B}_R)\), we have

Proof of Lemma 5.9

The absolute value of the first difference can be bounded by Proposition 4.4 as

For any two vectors \(\varvec{x},\varvec{y}\in \mathbb {R}^d\), we have

so the second difference can be bounded as

Using (1.6), we have

By (1.19), we have

so by (1.6), it follows that

We obtain the claim of the lemma by combining the stated bounds. \(\square \)

Now we are ready to prove our Gaussian approximation result for the filter.

Proof of Theorem 2.2

We suppose that \(D^{(1)}_{\mathrm {TV}}(\varvec{u},T)\ge C^{(1)}_{\mathrm {TV}}(\varvec{u},T)\) and \(D^{(2)}_{\mathrm {TV}}(\varvec{u},T)\)\(\ge C^{(2)}_{\mathrm {TV}}(\varvec{u},T)\), and thus \(D_{\mathrm {TV}}(\varvec{u},T,\varepsilon ) \ge C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )\). We also assume that \(D^{(1)}_{\mathrm {TV}}(\varvec{u},T)\) satisfies that

Based on these assumptions on \(D_{\mathrm {TV}}(\varvec{u},T,\varepsilon )\), and the assumption that \(\sigma _Z\sqrt{h}\le \frac{1}{2} D_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), it follows that the probability that all the four events in Eqs. (5.6), (5.7), (5.8) and (5.9) hold is at least \(1-\varepsilon \). We are going to assume that this is the case for the rest of the proof. We define

Based on (5.29), (5.30), and the assumption that \(\sigma _Z\sqrt{h}\le \frac{1}{2} D_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), it follows that

The surface of a ball of radius \(R-\Vert \varvec{u}\Vert \) centred at \(\varvec{u}\) is contained in \(\mathcal {B}_R\), and it will be transformed by \(\varPsi _T\) to a closed continuous manifold whose points are at least \((R-\Vert \varvec{u}\Vert )\exp (-GT)\) away from \(\varPsi _T(\varvec{u})\) (based on (1.6)). This implies that the ball of radius \((R-\Vert \varvec{u}\Vert )\exp (-GT)\) centred at \(\varPsi _T(\varvec{u})\) is contained in \(\varPsi _T(\mathcal {B}_R)\), and thus by (5.32), \(\mathcal {B}_{\rho '}\subset \varPsi _T(\mathcal {B}_R)\).

By Lemma 4.1, we have

By Lemma 5.9, (5.32), and the fact that \(|\exp (x)-1|\le 2|x|\) for \(x\in [-1,1]\), it follows that for \(\varvec{v}\in \mathcal {B}_{\rho '}\), we have

Therefore, the first term of (5.33) can be bounded as

This in turn can be bounded as in Lemma 5.1. The terms \(\tilde{\eta }^{{\mathrm {fi}}}_{{\mathcal {G}}}(\mathcal {B}_{\rho '}^c|\varvec{Y}_{0:k})\) and \(\mu ^{{\mathrm {fi}}}_{{\mathcal {G}}}(\mathcal {B}_{\rho '}^c|\varvec{Y}_{0:k})\) can be bounded in a similar way as in Lemmas 5.2 and 5.3. Therefore, by Lemma 5.7 we obtain that under the assumptions of this section, there are some finite constants \(D^{(1)}_{\mathrm {TV}}(\varvec{u},T)\) and \(D^{(2)}_{\mathrm {TV}}(\varvec{u},T)\) such that

For the Wasserstein distance bound, the proof is based on Lemma 5.8. Note that by the proof of Theorem 2.1, under the assumptions on this section, we have

Therefore, we only need to bound the last two terms of (5.25). The fact that

can be shown similarly to the proof of Lemma 5.3. Finally, the last term can be bounded by applying Lemma 4.2 for \(\varvec{y}:=\varPsi _T(\varvec{u}^{\mathcal {G}})\). This implies that

These terms can be bounded in a similar way as in Lemmas 5.4, 5.5 and 5.6, and the claim of the theorem follows. \(\square \)

5.2 Comparison of Mean Square Error of MAP and Posterior Mean

In the following two subsections, we are going to prove our results concerning the mean square error of the MAP estimator for the smoother, and the filter, respectively.

5.2.1 Comparison of MAP and Posterior Mean for the Smoother

In this section, we are going to prove Theorem 2.3. First, we introduce some notations. Let \(\varvec{u}^{\mathcal {G}}:=\varvec{u}-\varvec{A}_k^{-1}\varvec{B}_k\) denote the centre of the Gaussian approximation \(\mu ^{{\mathrm {sm}}}_{\mathcal {G}}\) (defined when \(\varvec{A}_k\) is positive definite). Let \(\rho (h,\sigma _Z)\) be as in (5.13), \(B_{\rho }:=\{\varvec{v}\in \mathbb {R}^d: \Vert \varvec{v}-\varvec{u}\Vert \le \rho (h,\sigma _Z)\}\) and \(B_{\rho }^c\) be the complement of \(B_{\rho }\). The proof is based on several lemmas which are described as follows. All of them implicitly assume that the assumptions of Theorem 2.3 hold.

Lemma 5.10

(A bound on \(\Vert \overline{\varvec{u}}^{{\mathrm {sm}}}-\varvec{u}^{\mathcal {G}}\Vert \)) There are some finite constants \(D_5(\varvec{u},T)\) and \(D_6(\varvec{u},T)\) such that for any \(0<\varepsilon \le 1\), for \(\sigma _Z\sqrt{h}\le \frac{1}{2}\cdot C_{\mathrm {TV}}(\varvec{u},T,\varepsilon )^{-1}\), we have

Proof

This is a direct consequence of the Wasserstein distance bound of Theorem 2.1, since

\(\square \)

Lemma 5.11

(A bound on \(\Vert \hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}-\varvec{u}\Vert \)) For any \(0<\varepsilon \le 1\), we have

Proof

From Proposition 4.1, and (4.3), it follows that for any \(0<\varepsilon \le 1\),

Since \(\hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}\) is the maximiser of \(\log \mu ^{{\mathrm {sm}}}(\varvec{v}|\varvec{Y}_{0:k})\) on \(\mathcal {B}_R\), our claim follows. \(\square \)

Lemma 5.12

(A bound on \(\Vert \hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}-\varvec{u}^{\mathcal {G}}\Vert \)) There are finite constants \(S_{\mathrm {MAP}}^{(1)}>0\), \(S_{\mathrm {MAP}}^{(2)}\), \(D_7(\varvec{u},T)\) and \(D_8(\varvec{u},T)\) such that for any \(0<\varepsilon \le 1\), for

we have

Proof

By choosing \(S_{\mathrm {MAP}}^{(1)}\) and \(S_{\mathrm {MAP}}^{(2)}\) sufficiently large, we can assume that

From Lemma 5.11, we know that

Using (5.37), it follows that if the above event happens, then \(\Vert \hat{\varvec{u}}^{{\mathrm {sm}}}_{{\mathrm {MAP}}}\Vert <R\), and thus

Using the fact that \(\nabla l^{{\mathrm {sm}}}_{{\mathcal {G}}}(\varvec{v})=2\varvec{A}_k(\varvec{v}-\varvec{u}^{\mathcal {G}})\), and Proposition 4.3, it follows that

Moreover, by Lemma 4.4, we know that for any \(0< \varepsilon \le 1\), we have

By combining the four Eqs. (5.38), (5.39), (5.40) and (5.41), it follows that with probability at least \(1-\varepsilon \), we have

and the claim of the lemma follows by rearrangement. \(\square \)