Abstract

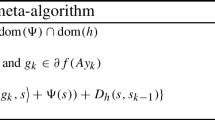

We revisit the proofs of convergence for a first-order primal–dual algorithm for convex optimization which we studied a few years ago. In particular, we prove rates of convergence for a more general version, with simpler proofs and more complete results. The new results can deal with explicit terms and nonlinear proximity operators in spaces with quite general norms.

Notes

It must be observed here that the right assumption on g to obtain an accelerated scheme with an arbitrary Bregman distance \(D_x\) should be that g is “strongly convex with respect to \(\psi _x\)”, in the sense that \(g-\gamma \psi _x\) is convex. The proof would then be similar. However, it is not clear whether this covers very interesting situations beyond the standard case.
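To make this condition concrete, here is a short sketch under two assumptions that the note does not state: that \(\psi_x\) is differentiable at the point \(\bar{x}\), and that \(D_x\) is the Bregman distance generated by \(\psi_x\) in the usual way,

\[
D_x(x,\bar{x}) = \psi_x(x) - \psi_x(\bar{x}) - \langle \nabla \psi_x(\bar{x}),\, x - \bar{x} \rangle .
\]

If \(g - \gamma \psi_x\) is convex and \(p \in \partial g(\bar{x})\), then \(p - \gamma \nabla \psi_x(\bar{x})\) is a subgradient of \(g - \gamma \psi_x\) at \(\bar{x}\), and writing out the subgradient inequality for \(g - \gamma \psi_x\) gives

\[
g(x) \;\ge\; g(\bar{x}) + \langle p,\, x - \bar{x} \rangle + \gamma\, D_x(x,\bar{x}) \qquad \text{for all } x .
\]

In the standard case \(\psi_x = \tfrac{1}{2}\Vert \cdot \Vert ^2\) in a Hilbert space, \(D_x(x,\bar{x}) = \tfrac{1}{2}\Vert x - \bar{x}\Vert ^2\) and the inequality reduces to the usual \(\gamma\)-strong convexity of g.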

We used WolframAlpha to check our calculations.

Acknowledgments

This research is partially supported by the joint ANR/FWF Project Efficient Algorithms for Nonsmooth Optimization in Imaging (EANOI) FWF n. I1148/ANR-12-IS01-0003. A.C. would like to thank his colleague S. Gaiffas for stimulating discussions, as well as J. Fadili for very helpful discussions on nonlinear proximity operators. This work also benefited from the support of the “Gaspard Monge Program in Optimization and Operations Research” (PGMO), supported by EDF and the Fondation Mathématique Jacques Hadamard (FMJH). The authors also thank the referees for their careful reading of the manuscript and their numerous helpful comments.

Cite this article

Chambolle, A., Pock, T. On the ergodic convergence rates of a first-order primal–dual algorithm. Math. Program. 159, 253–287 (2016). https://doi.org/10.1007/s10107-015-0957-3

DOI: https://doi.org/10.1007/s10107-015-0957-3

Keywords

- Saddle-point problems

- First order algorithms

- Primal–dual algorithms

- Convergence rates

- Ergodic convergence