Abstract

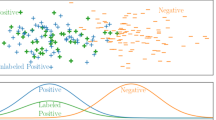

Unsupervised transfer learning methods usually exploit labeled source data to learn a classifier for unlabeled target data that follow a different but related distribution. However, most existing transfer learning methods use a strict 0-1 matrix as labels, which greatly limits the flexibility of transfer learning. Another major limitation is that these methods are sensitive to the redundant features and noise present in cross-domain data. To cope with these two issues simultaneously, this paper proposes a redirected transfer learning (RTL) approach for unsupervised transfer learning with a multi-layer subspace learning structure. Specifically, in the first layer, we learn a robust subspace in which data from different domains are well interlaced. This is achieved by reconstructing each target sample with the lowest-rank representation of the source samples. In addition, imposing \(L_{2,1}\)-norm sparsity on the regression term and the regularization term provides robustness against noise and selects informative features, respectively. In the second layer, we introduce a redirected label strategy in which the strict binary labels are relaxed into continuous values for each datum. To handle the unknown labels of the target domain effectively, we iteratively construct pseudo-labels for the unlabeled target samples, which improves the discriminative ability of the classifier. Experiments on several cross-domain datasets confirm the superiority of our method in classification tasks.

Data availability

Publicly available data are used.

References

Kan M, Wu J, Shan S, Chen X (2013) Domain adaptation for face recognition: Targetize source domain bridged by common subspace. Int J Comput Vis 109:94–109

Pan SJ, Yang Q (2010) A survey on transfer learning. IEEE Trans Knowl Data Eng 22(10):1345–1359. https://doi.org/10.1109/TKDE.2009.191

Si Y, Pu J, Zang S, Sun L (2021) Extreme learning machine based on maximum weighted mean discrepancy for unsupervised domain adaptation. IEEE Access 9:2283–2293. https://doi.org/10.1109/ACCESS.2020.3047448

Zhang L, Wang S, Huang G-B, Zuo W, Yang J, Zhang D (2019) Manifold criterion guided transfer learning via intermediate domain generation. IEEE Trans Neural Netw Learn Syst 30(12):3759–3773. https://doi.org/10.1109/TNNLS.2019.2899037

Deng W, Liao Q, Zhao L, Guo D, Kuang G, Hu D, Liu L (2021) Joint clustering and discriminative feature alignment for unsupervised domain adaptation. IEEE Trans Image Process 30:7842–7855. https://doi.org/10.1109/TIP.2021.3109530

Wu S, Gao G, Li Z, Wu F, Jing X-Y (2020) Unsupervised visual domain adaptation via discriminative dictionary evolution. Pattern Anal Appl 23(4):1665–1675. https://doi.org/10.1007/s10044-020-00881-w

Prabono AG, Yahya BN, Lee S-L (2021) Hybrid domain adaptation for sensor-based human activity recognition in a heterogeneous setup with feature commonalities. Pattern Anal Appl 24(4):1501–1511. https://doi.org/10.1007/s10044-021-00995-9

Zhang Y, Ye H, Davison BD (2021) Adversarial reinforcement learning for unsupervised domain adaptation. In: 2021 IEEE Winter Conference on Applications of Computer Vision (WACV), pp. 635–644. https://doi.org/10.1109/WACV48630.2021.00068

Lei W, Ma Z, Lin Y, Gao W (2021) Domain adaption based on source dictionary regularized RKHS subspace learning. Pattern Anal Appl 24(4):1513–1532

Pan SJ, Tsang IW, Kwok JT, Yang Q (2011) Domain adaptation via transfer component analysis. IEEE Trans Neural Netw 22(2):199–210. https://doi.org/10.1109/TNN.2010.2091281

Zhang J, Li W, Ogunbona P (2017) Joint geometrical and statistical alignment for visual domain adaptation. arXiv. https://doi.org/10.48550/ARXIV.1705.05498

Si S, Tao D, Geng B (2010) Bregman divergence-based regularization for transfer subspace learning. IEEE Trans Knowl Data Eng 22(7):929–942. https://doi.org/10.1109/TKDE.2009.126

Long M, Wang J, Ding G, Sun J, Yu PS (2013) Transfer feature learning with joint distribution adaptation. In: 2013 IEEE International Conference on Computer Vision, pp. 2200–2207. https://doi.org/10.1109/ICCV.2013.274

Han N, Wu J, Fang X, Xie S, Zhan S, Xie K, Li X (2020) Latent elastic-net transfer learning. IEEE Trans Image Process 29:2820–2833. https://doi.org/10.1109/TIP.2019.2952739

Wan M, Chen X, Zhan T, Yang G, Tan H, Zheng H (2023) Low-rank 2D local discriminant graph embedding for robust image feature extraction. Pattern Recogn 133:109034. https://doi.org/10.1016/j.patcog.2022.109034

Wan M, Yao Y, Zhan T, Yang G (2022) Supervised low-rank embedded regression (SLRER) for robust subspace learning. IEEE Trans Circuits Syst Video Technol 32(4):1917–1927. https://doi.org/10.1109/TCSVT.2021.3090420

Shao M, Castillo C, Gu Z, Fu Y (2012) Low-rank transfer subspace learning. In: 2012 IEEE 12th International Conference on Data Mining, pp. 1104–1109. IEEE Computer Society, Los Alamitos, CA, USA. https://doi.org/10.1109/ICDM.2012.102

Xu Y, Fang X, Wu J, Li X, Zhang D (2016) Discriminative transfer subspace learning via low-rank and sparse representation. IEEE Trans Image Process 25(2):850–863. https://doi.org/10.1109/TIP.2015.2510498

Zhang L, Fu J, Wang S, Zhang D, Dong Z, Chen CLP (2020) Guide subspace learning for unsupervised domain adaptation. IEEE Trans Neural Netw Learn Syst 31(9):3374–3388. https://doi.org/10.1109/TNNLS.2019.2944455

Xiang S, Nie F, Meng G, Pan C, Zhang C (2012) Discriminative least squares regression for multiclass classification and feature selection. IEEE Trans Neural Netw Learn Syst 23(11):1738–1754. https://doi.org/10.1109/TNNLS.2012.2212721

Zhang X-Y, Wang L, Xiang S, Liu C-L (2015) Retargeted least squares regression algorithm. IEEE Trans Neural Netw Learn Syst 26(9):2206–2213. https://doi.org/10.1109/TNNLS.2014.2371492

Peng Z, Zhang W, Han N, Fang X, Kang P, Teng L (2020) Active transfer learning. IEEE Trans Circuits Syst Video Technol 30(4):1022–1036. https://doi.org/10.1109/TCSVT.2019.2900467

Hu Y, Zhang D, Ye J, Li X, He X (2013) Fast and accurate matrix completion via truncated nuclear norm regularization. IEEE Trans Pattern Anal Mach Intell 35(9):2117–2130. https://doi.org/10.1109/TPAMI.2012.271

Zhang Z, Lai Z, Xu Y, Shao L, Wu J, Xie G-S (2017) Discriminative elastic-net regularized linear regression. IEEE Trans Image Process 26(3):1466–1481. https://doi.org/10.1109/TIP.2017.2651396

Jhuo I-H, Liu D, Lee DT, Chang S-F (2012) Robust visual domain adaptation with low-rank reconstruction. In: 2012 IEEE Conference on Computer Vision and Pattern Recognition, pp. 2168–2175. https://doi.org/10.1109/CVPR.2012.6247924

Boyd S, Parikh N, Chu E, Peleato B, Eckstein J (2011) Distributed optimization and statistical learning via the alternating direction method of multipliers. Found Trends Mach Learn 3(1):1–122. https://doi.org/10.1561/2200000016

Eckstein J, Bertsekas D (1992) On the Douglas-Rachford splitting method and the proximal point algorithm for maximal monotone operators. Math Program 55:293–318. https://doi.org/10.1007/BF01581204

Krizhevsky A, Sutskever I, Hinton GE (2012) ImageNet classification with deep convolutional neural networks. In: Proceedings of the 25th International conference on neural information processing systems, pp. 1097–1105. Curran Associates Inc., Red Hook, NY, USA

Wang J, Chen Y, Feng W, Yu H, Huang M, Yang Q (2020) Transfer learning with dynamic distribution adaptation. ACM Trans Intell Syst Technol (TIST) 11(1):1–25

Gong B, Shi Y, Sha F, Grauman K (2012) Geodesic flow kernel for unsupervised domain adaptation. In: 2012 IEEE conference on computer vision and pattern recognition, pp. 2066–2073. https://doi.org/10.1109/CVPR.2012.6247911

Wan M, Chen X, Zhao C, Zhan T, Yang G (2022) A new weakly supervised discrete discriminant hashing for robust data representation. Inf Sci 611:335–348. https://doi.org/10.1016/j.ins.2022.08.015

Long M, Wang J, Sun J, Yu PS (2015) Domain invariant transfer kernel learning. IEEE Trans Knowl Data Eng 27(6):1519–1532. https://doi.org/10.1109/TKDE.2014.2373376

Ma X, Zhang T, Xu C (2019) GCAN: Graph convolutional adversarial network for unsupervised domain adaptation. In: 2019 IEEE/CVF conference on computer vision and pattern recognition (CVPR), pp. 8258–8268. https://doi.org/10.1109/CVPR.2019.00846

van der Maaten L, Hinton G (2008) Visualizing data using t-SNE. J Mach Learn Res 9(86):2579–2605

Zou H, Hastie T, Tibshirani R (2006) Sparse principal component analysis. J Comput Graph Stat 15(2):265–286

Liu G, Lin Z, Yan S, Sun J, Yu Y, Ma Y (2013) Robust recovery of subspace structures by low-rank representation. IEEE Trans Pattern Anal Mach Intell 35(1):171–184. https://doi.org/10.1109/tpami.2012.88

Cai J-F, Candès EJ, Shen Z (2010) A singular value thresholding algorithm for matrix completion. SIAM J Optim 20(4):1956–1982. https://doi.org/10.1137/080738970

Funding

This work was partially supported by JSPS KAKENHI (Grant Number 19H04128).

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

Optimization solutions of RTL

Update W: With the other variables fixed, W is obtained by solving the following problem:

Setting the partial derivative of (19) with respect to W to zero yields the closed-form solution \(W^*\):

where \(K_1=X_T-X_SZ\), \(K_2=E-\frac{M_1}{\mu }\), and \(K_3=A-\frac{M_3}{\mu }\). \(G \in \mathbb {R}^{m \times m}\) is a diagonal matrix whose ith diagonal element is \({\left( G \right) _{ii}} = \frac{1}{{2{{\left\| {{{\left( W \right) }^i}} \right\| }_2}}}\), where \({\left( \cdot \right) ^{i}} \) denotes the ith row of a matrix.
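The role of G can be checked numerically. The sketch below assumes that \((W)^i\) indexes the m rows of W, which is what the \(m \times m\) size of G implies, and it adds a small floor `eps` (an assumption, not part of the paper) so that all-zero rows do not cause a division by zero. The names `l21_reweighting_matrix` and `l21_norm` are hypothetical helpers.

```python
import numpy as np

def l21_norm(W):
    """Sum of the Euclidean norms of the rows of W."""
    return np.linalg.norm(W, axis=1).sum()

def l21_reweighting_matrix(W, eps=1e-12):
    """Diagonal G with G_ii = 1 / (2 * ||i-th row of W||_2).

    eps is an assumed safeguard against zero rows.
    """
    row_norms = np.maximum(np.linalg.norm(W, axis=1), eps)
    return np.diag(1.0 / (2.0 * row_norms))

W = np.array([[3.0, 4.0],
              [6.0, 8.0]])
G = l21_reweighting_matrix(W)
# With G built from the current W, tr(W^T G W) equals half the L2,1 norm,
# which is why G turns the non-smooth term into a quadratic one.
print(np.trace(W.T @ G @ W), 0.5 * l21_norm(W))
```

Because G depends on W, it is typically recomputed from the latest W at every iteration before the closed-form update is applied.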

Update P: With the other variables fixed, P is obtained by solving the following problem:

Problem (21) can be converted into the following maximization problem:

Compute the SVD of \({\mu {K_3}W}\). Then, according to [35], the optimal solution to the problem above is

where U and V are the left and right singular vector matrices from the SVD of \({\mu {K_3}W}\).
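SVD-based updates of this kind are usually instances of the reduced-rank Procrustes rotation used in [35]: for a matrix M with thin SVD \(M = U\Sigma V^\top\), the matrix \(UV^\top\) maximizes \(\mathrm{tr}(P^\top M)\) over all P with orthonormal columns. The following minimal sketch illustrates that general result only. Here `M` is a stand-in for \(\mu K_3 W\) (or its transpose, depending on the shape of P, which this section does not show), and `procrustes_update` is a hypothetical name.

```python
import numpy as np

def procrustes_update(M):
    """Return P = U V^T, the maximizer of trace(P.T @ M) s.t. P.T @ P = I.

    M is assumed to have at least as many rows as columns.
    """
    U, _, Vt = np.linalg.svd(M, full_matrices=False)
    return U @ Vt

rng = np.random.default_rng(0)
M = rng.standard_normal((6, 3))   # placeholder data, not from the paper
P = procrustes_update(M)

# P has orthonormal columns, and the attained objective equals the sum
# of the singular values of M.
print(np.allclose(P.T @ P, np.eye(3)))
print(np.trace(P.T @ M), np.linalg.svd(M, compute_uv=False).sum())
```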

Update A: With the other variables fixed, A is obtained by solving the following convex problem:

Setting the partial derivative of (24) with respect to A to zero yields the closed-form solution \(A^*\):

Update Z: With the other variables fixed, Z is obtained by solving the following convex problem:

Setting the partial derivative of (26) with respect to Z to zero yields the closed-form solution \(Z^*\):

Update E: With the other variables fixed, E is obtained by solving the following convex problem:

According to [36], the optimal \(E^*\) can be computed as

where \(K = {W^t}{X_T} - {W^t}{X_S}Z + \frac{{{M_1}}}{\mu }\).
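The solution taken from [36] is a column-wise shrinkage of K. As a hedged illustration, the sketch below solves \(\min_E \tau \Vert E\Vert_{2,1} + \frac{1}{2}\Vert E - K\Vert_F^2\), with the \(L_{2,1}\)-norm taken as the sum of column norms as in [36]. The threshold `tau` is an assumption: in an ADMM scheme it would be the weight of the E term divided by \(\mu\), and that weight is not shown in this section. The name `l21_shrink` is hypothetical.

```python
import numpy as np

def l21_shrink(K, tau):
    """Column-wise shrinkage: scale each column k_i by max(||k_i|| - tau, 0) / ||k_i||."""
    col_norms = np.linalg.norm(K, axis=0)
    # Guard against division by zero for all-zero columns.
    scale = np.maximum(col_norms - tau, 0.0) / np.maximum(col_norms, 1e-12)
    return K * scale

K = np.array([[3.0, 0.3],
              [4.0, 0.4]])   # column norms are 5.0 and 0.5
E = l21_shrink(K, tau=1.0)
print(E)  # first column shrunk by the factor 4/5, second column set to zero
```

Columns whose norm falls below the threshold are removed entirely, which is what lets E absorb sample-specific noise while staying column-sparse. If the norm is meant row-wise, the same function can be applied to the transpose of K.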

Update J: With the other variables fixed, J is obtained by solving the following convex problem:

The optimal \(J^*\) can be computed with the singular value thresholding (SVT) algorithm [37] as

where \(\Omega \) is the singular value shrinkage operator.
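For reference, the singular value shrinkage operator of [37] soft-thresholds the singular values of its argument, which solves \(\min_J \tau \Vert J\Vert_* + \frac{1}{2}\Vert J - Y\Vert_F^2\). The sketch below is a generic implementation. The input `Y` and the threshold `tau` are placeholders, since the exact argument and threshold of \(\Omega\) in the update of J are not shown in this section.

```python
import numpy as np

def svt(Y, tau):
    """Singular value thresholding: U * max(S - tau, 0) * V^T."""
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    s_shrunk = np.maximum(s - tau, 0.0)
    # Multiplying U column-wise by the shrunk singular values avoids
    # building an explicit diagonal matrix.
    return (U * s_shrunk) @ Vt

Y = np.diag([3.0, 1.0])
J = svt(Y, tau=2.0)
print(J)                         # singular values 3 and 1 become 1 and 0
print(np.linalg.matrix_rank(J))  # the rank drops from 2 to 1
```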

Update T: With the other variables fixed, T is obtained by solving the following convex problem:

Problem (32) can be decomposed into the following sub-problems:

where \(R=AX\). According to Theorem 2 in [21], solving sub-problem (33) for each column of T separately yields the optimal solution \({{T_{i,:}}}\) of problem (32).
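The per-sample retargeting of [21] can be sketched as follows, under the assumption that each sub-problem has the standard form \(\min_t \Vert t - r\Vert_2^2\) subject to \(t_k - \max_{j \ne k} t_j \ge 1\), where r is one sample's vector of regression outputs taken from R and k is its (pseudo-)label. The exact form of sub-problem (33) is not shown in this section, so this illustrates the technique and is not the authors' code. The function name `retarget` is hypothetical.

```python
import numpy as np

def retarget(r, k):
    """Closest target t to r such that class k leads every other class by >= 1."""
    r = np.asarray(r, dtype=float)
    others = np.delete(np.arange(r.size), k)
    # v_j > 0 means class j currently violates the unit margin against class k.
    v = r[others] + 1.0 - r[k]
    # Find delta solving delta + sum_j min(delta - v_j, 0) = 0 by adding
    # the largest v_j one at a time while they exceed the running delta.
    delta, total, count = 0.0, 0.0, 0
    for vj in np.sort(v)[::-1]:
        if vj <= delta:
            break
        total += vj
        count += 1
        delta = total / (1 + count)
    t = r.copy()
    t[k] = r[k] + delta                                  # raise the true class
    t[others] = r[others] + np.minimum(delta - v, 0.0)   # lower the violators
    return t

t = retarget([0.2, 0.9, 0.5], k=1)
print(t, t[1] - max(t[0], t[2]))
```

Outputs that already satisfy the margin are returned unchanged, so the relaxed labels move only as far as the constraint requires.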

About this article

Cite this article

Bao, J., Kudo, M., Kimura, K. et al. Redirected transfer learning for robust multi-layer subspace learning. Pattern Anal Applic 27, 25 (2024). https://doi.org/10.1007/s10044-024-01233-8

DOI: https://doi.org/10.1007/s10044-024-01233-8