Abstract

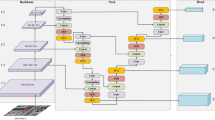

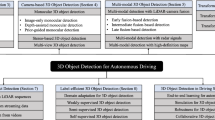

This paper proposes GVnet, a two-stage voxel-based 3D object detector. Voxel-based methods rely mainly on sampling and grouping the points within each voxel, and on the feature map generated by the subsequent 3D CNN, to control detection quality. Moreover, traditional voxel feature encoder (VFE) methods cannot adjust the quality of the feature map through reasonable sampling. The proposed method therefore improves on the existing VFE: it first computes the Gaussian distribution corresponding to the original point cloud data, and then samples any number of points by controlling the confidence value. This improves the performance of the voxel encoder and, in turn, the quality of the feature map output by the 3D CNN. In addition, a voxel ROI pooling method is proposed for the second stage. In ROI pooling, the receptive field in the original space and the corresponding raw points are obtained through the mapping between features and ROIs; the raw points are then changed to adjust the receptive field, which improves classification and regression performance. Finally, experimental results on the KITTI, nuScenes, and Waymo datasets show that GVnet outperforms current detection methods on most evaluation metrics, at the cost of only a small increase in inference time.
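The Gaussian sampling step described in the abstract can be illustrated with a minimal sketch. The abstract does not specify how the distribution is fitted or how the confidence value is applied, so everything below is an assumption made for illustration: a diagonal (per-axis) Gaussian fitted to the points of one voxel, rejection sampling inside a chi-square confidence ellipsoid, and the hypothetical function names `fit_diagonal_gaussian` and `sample_voxel_points`. It is not the authors' implementation.

```python
import random
import statistics

# Chi-square quantiles for 3 degrees of freedom (standard table values).
CHI2_3DOF = {0.90: 6.251, 0.95: 7.815, 0.99: 11.345}


def fit_diagonal_gaussian(points):
    """Return per-axis means and standard deviations of a list of (x, y, z) points.

    Assumption: a diagonal covariance is used for simplicity; the paper may
    fit a different Gaussian model.
    """
    axes = list(zip(*points))
    means = [statistics.fmean(axis) for axis in axes]
    # A small floor keeps flat voxels from producing a zero standard deviation.
    stds = [max(statistics.pstdev(axis), 1e-6) for axis in axes]
    return means, stds


def sample_voxel_points(points, num_samples, confidence=0.95, seed=0):
    """Draw num_samples points from the fitted Gaussian, keeping only draws
    that fall inside the chosen confidence ellipsoid (rejection sampling)."""
    means, stds = fit_diagonal_gaussian(points)
    threshold = CHI2_3DOF[confidence]
    rng = random.Random(seed)
    samples = []
    while len(samples) < num_samples:
        z = [rng.gauss(0.0, 1.0) for _ in range(3)]
        # For a diagonal Gaussian, the squared Mahalanobis distance of the
        # draw is the squared norm of its standardized coordinates.
        if sum(v * v for v in z) <= threshold:
            samples.append(tuple(m + s * v for m, s, v in zip(means, stds, z)))
    return samples


# Hypothetical voxel containing four raw LiDAR points.
voxel = [(0.1, 0.2, 0.0), (0.3, 0.1, 0.1), (0.2, 0.4, 0.05), (0.25, 0.3, 0.02)]
sampled = sample_voxel_points(voxel, num_samples=8, confidence=0.95)
```

In this sketch the number of output points is independent of how many raw points the voxel holds, and a lower confidence value concentrates the samples closer to the voxel's mean. Both properties are consequences of the assumptions above rather than claims about GVnet itself.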



Acknowledgements

The authors would like to thank the National Natural Science Foundation of China (51974229) for its support of this research.


Ethics declarations

Conflict of interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.



Cite this article

Qin, P., Zhang, C. & Dang, M. GVnet: Gaussian model with voxel-based 3D detection network for autonomous driving. Neural Comput & Applic 34, 6637–6645 (2022). https://doi.org/10.1007/s00521-021-06061-z


DOI: https://doi.org/10.1007/s00521-021-06061-z