Abstract

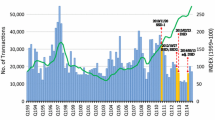

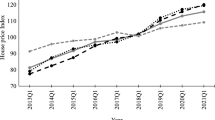

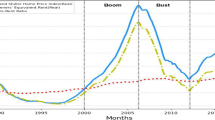

This study evaluates the effects of macroprudential policy on Hong Kong’s housing market using a multivariate ordered probit-augmented vector autoregressive model (MOP-VAR). The proposed MOP-VAR extends the conventional dummy policy variable approach by allowing explicit measurement of time-varying policy intensities that underlie policy rules, and thus facilitates analyses of bilateral relationship between house prices and multiple policy instruments when endogeneity exits among the instruments’ intensities and prices. An impulse response analysis suggests that the dampening effect of macroprudential tightening is stronger and more instantaneous on transactions than on prices. The eventual outcome as indicated by conditional forecasts is dominated by a strong and prolonged own price response to house price shocks and other external developments that undermine the policy’s effectiveness. Moreover, over the long haul, a combination of a stamp duty and stress test tends to be more effective than restricting the loan-to-value ratio in triggering a trend reversal in house prices, despite the government’s preference for the latter. The out-of-sample probabilistic forecasts of policy changes are mostly consistent with the observable outcomes.

Similar content being viewed by others

Notes

14th Annual Demographia International Housing Affordability Survey 2018. Available at: http://www.demographia.com/.

The gap with respect to historical market peaks could also be a subtle reference. These indicators are repeatedly mentioned in official quarterly economic reports in defense of interventions.

The dimension can, in theory, be extended to cover other options such as debt servicing ratio restrictions or to measure the stress test requirement separately. However, we face a potential problem of identification, as some policies are highly synchronized in our case. As a result, the second latent propensity is designed to capture the joint adoption of a stamp duty-related policy and/or a stress test requirement, which are the two most influential policy options other than the LTV ratio.

The gap between the current price and previous market peak indicates the extent of price excessiveness. Price, mortgage rate, and household income together proxy for buyer affordability. As house price is expressed as an index number rather than a dollar value, direct calculation of affordability is not possible. By the same token, actual rental yield has to make way for a scaled version of the price–rental differential. (http://www.info.gov.hk/gia/ISD_public_Calendar_en.html#)

See, for example, The HKSAR Government Press Release “LCQ 21: Measures for cooling down the overheated property market and meeting public demand for housing” (December 17, 2014).

We attempted to use population instead of domestic households and found that the BIC shows virtually identical results. This is not very surprising, as the population and household series have a correlation coefficient of 0.99. More importantly, the HKSAR government has principally relied on the household series to shape their housing policy. See, for instance, the official publications released by the Long-Term Housing Strategy Steering Committee.

According to official statistics, the annual number of immigrants resulting from such policies was less than 0.1% of Hong Kong’s population. See the HKSAR Government Press Releases: “LCQ7: Capital Investment Entrant Scheme” (February 23, 2011); “LCQ16: Quality Migrant Admission Scheme” (January 8, 2014); and “LCQ18: Admission Scheme for Mainland Talents and Professionals” (November 24, 2004). (http://www.info.gov.hk/gia/ISD_public_Calendar_en.html#)

The BFL algorithm, a least-squares approach that technically produces the smallest estimation bias, is a major temporal disaggregation approach that IMF (Bloem et al. 2001) and European Statistical System of EU (European Commission, 2017) have recommended in situations where external referencing data are unavailable. Multiple imputation is an alternative approach to temporal disaggregation when external referencing data are available. To check the robustness of our imputed data to the choice of disaggregation method, we have experimented with applying multiple imputation on the number of domestic households and median household income. The referencing data used include quarterly observations of the working population, the number of unemployed, the number of new leases and tenancy agreements, and a time trend. The quarterly time series imputed from multiple imputation turned out to be highly correlated with those from the BFL algorithm. The correlation coefficients between the two alternative methods are 0.9967 for the number of domestic households and 0.8863 for the median household income.

Figure 4 plots the correlation between the observed intensities and the unobserved propensities of both policy tools.

A one-s.d. LTV shock amounts to 0.53 units, and a one-s.d. SDST shock has a size of about 0.39 units.

The forecasting exercise of Dueker (2005) is not done recursively and concentrates instead on a single out-of-sample period during which there are ex post regime changes. It assesses how well the Qual-VAR foretells the realization of dichotomous states.

The problem raised here is not inherent in the methodology but is purely a data problem. If our end-sample contains episodes of tightening and relaxation, categorizing forecast propensities is straightforward.

References

Akinci O, Olmstead-Rumsey J (2018) How effective are macroprudential policies? An empirical investigation. J Financ Intermed 33:33–57

Albert JH, Chib S (1993) Bayesian analysis of binary and polychotomous response data. J Am Stat Assoc 88(422):669–679

Angelini P, Nicoletti-Altimari S, Visco I (2013) Macroprudential, microprudential and monetary policies: conflicts, complementarities and trade-offs. In: Dombret A, Lucius O (eds) Stability of the financial system—Illusion or feasible concept?. Elgar Edward Publishing, Cheltenham

Ashworth J, Parker SC (1997) Modelling regional house prices in the UK. Scott J Polit Econ 44(3):225–246

Bańbura M, Giannone D, Lenza M (2015) Conditional forecasts and scenario analysis with vector autoregressions for large cross-sections. Int J Forecast 31(3):739–756

Bianchi J (2010) Credit externalities: macroeconomic effects and policy implications. Am Econ Rev 100(2):398–402

Bloem AM, Dippelsman RJ, Maehle NO (2001) Quarterly national accounts manual—concepts, data sources, and compilation. International Monetary Fund, Washington, D.C

Boot JCG, Feibes W, Lisman JHC (1967) Further methods of derivation of quarterly figures from annual data. Appl Stat 16(1):65–75

Candelon B, Dumitrescu E, Hurlin C, Palm FC (2013) Multivariate dynamic probit models—an application to financial crisis mutation. In: Fomby TB, Kilian L, Murphy A (eds) VAR models in macroeconomics—new developments and applications: essays in honor of Christopher A. Sims (Advances in econometrics, volume 32). Emerald Group Publishing, Bingley

Celeux G, Forbes F, Robert CP, Titterington DM (2006) Deviance information criteria for missing data models. Bayesian Anal 1(4):651–674

Cerutti E, Claessens S, Laeven L (2015) The use and effectiveness of macroprudential policies: new evidence. International monetary fund working paper WP/15/61

Cheng L, Li B, Zeng Z (2010) Housing in a neoclassical growth model. Pac Econ Rev 15(2):246–262

Clement P (2010) The term “macroprudential”: origins and evolution. Bank Int Settl Q Rev 59–67

Crockett A (2000) Marrying the micro- and macroprudential dimensions of financial stability. In: Eleventh international conference of banking supervisors, held in Basel, 20–21 September 2000. https://www.bis.org/speeches/sp000921.htm

Doan T, Litterman R, Sims C (1984) Forecasting and conditional projection using realistic prior distributions. Econom Rev 3:1–100

Drake LM (1993) Modelling UK house prices using cointegration: an application of the Johansen technique. Appl Econ 25:1225–1228

Dueker M (2005) Dynamic forecasts of qualitative variables: a Qual VAR model of U.S. recessions. J Bus Econ Stat 23:96–104

Durbin J, Koopman SJ (2002) A simple and efficient simulation smoother for state space time series analysis. Biometrika 89(3):603–615

European Commission (2017). ESS guidelines on temporal disaggregation, benchmarking and reconciliation. From annual to quarterly to monthly data. Version 29, June 2017

Farhi E, Werning I (2016) A theory of macroprudential policies in the presence of nominal rigidities. Econometrica 84(5):1645–1704

Galati G, Moessner R (2013) Macroprudential policy—a literature review. J Econ Surv 27(5):846–878

Galati G, Teppa F, Alessie R (2011) Macro and micro drivers of house price dynamics: an application to Dutch data. DNB working papers 288, Netherlands Central Bank, Research Department

Gerlach S, Peng W (2005) Bank lending and property prices in Hong Kong. J Bank Finance 29(2):461–481

HKMA (2011) Loan-to-value ratio as a macroprudential tool—Hong Kong SAR’s experience and cross-country evidence. Bank Int Settl 57:163–178

Ho WL, Wong C, Shik AW (2010) Hong Kong people’s opinion on property market and housing policy in 2010: summary of survey findings. Press releases, Communications and Public Relations Office, Chinese University of Hong Kong, Shatin

Kirke R, Lo S (2013) Cooling measures in the Hong Kong real estate market: an assessment of their effectiveness and market reaction. Research paper. Colliers International

Koop G, Pesaran MH, Potter SM (1996) Impulse response analysis in nonlinear multivariate models. J Econom 74(1):119–147

Krainer J (2001) A theory of liquidity in residential real estate markets. J Urban Econ 49(1):32–53

Leung CKY (2001) Relating international trade to the housing market. Rev Dev Econ 5(2):328–335

Leung CKY (2003) Economic growth and increasing house prices. Pac Econ Rev 8(2):183–190

Lim C, Columba F, Costa A, Kongsamut P, Otani A, Saiyid M, Wezel T, Wu X (2011). Macroprudential policy: what instruments and how to use them? Lessons from country experiences. International monetary fund working paper WP/11/238

Ma L, Wang H, Chen J (2010) Analysis of Kalman filter with correlated noises under different dependence. J Inf Comput Sci 7(5):1147–1154

Mendicino C, Punzi MT (2014) House prices, capital inflows and macroprudential policy. J Bank Finance 49:337–355

Ortalo-Magne F, Rady S (2006) Housing market dynamics: on the contribution of income shocks and credit constraints. Rev Econ Stud 73(2):459–485

Rebelo S (1991) Long-run policy analysis and long-run growth. J Polit Econ 99(3):500–521

Simon D (2010) Kalman filtering with state constraints: a survey of linear and nonlinear algorithms. IET Control Theory Appl 4(8):1303–1318

Tillmann P (2015) Estimating the effects of macroprudential policy shocks: a Qual VAR approach. Econ Lett 135:1–4

Funding

This study was funded by Hong Kong Research Grant Council (Grant Number: PolyU 5917/13H).

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

The authors of this article declare that they have no conflict of interest.

Ethical approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The views expressed in this paper are those of the authors and do not represent the stance of the HKSAR Government. The data are retrieved from official sources open to the public. Codes are available from the authors. The authors thank the anonymous referee and Bertrand Candelon (Editor) for their helpful comments.

Appendix: Estimation algorithm

Appendix: Estimation algorithm

-

Stacking the observations and reorganizing the algebra of the VAR in (1) gives

$$\tilde{Y} = \tilde{X}H + U,$$where \(\tilde{Y} = \left[ {\tilde{Y}_{1} , \ldots ,\tilde{Y}_{T} } \right]^{'}\) and \(U = \left[ {u_{1} , \cdots ,u_{T} } \right]^{'}\) are both \(T \times \left( {m + q} \right)\); \(\tilde{X}\) is the \(T \times \left( {n + \left( {m + q} \right)p} \right)\) matrix of the conforming exogenous and lagged variables; and \(H = \left[ {B A_{1} \cdots A_{p} } \right]^{'}\) is the \(\left( {n + \left( {m + q} \right)p} \right) \times \left( {m + q} \right)\) coefficient matrix. The Minnesota prior assumes the following distribution on \(h = vec\left( H \right)\):

$$h \sim N\left( {\mu_{h} , V_{h} } \right),$$where \(\mu_{h}\) is a vector of zeros except for the elements corresponding to the first own lag of \(\tilde{Y}\) that equals one. The prior variance \(V_{h}\) is a diagonal matrix with the elements

$$\left( {\frac{{\lambda_{1} }}{{l^{{\lambda_{3} }} }}} \right)^{2} {\text{if}} i = j\quad {\text{and }}\quad \left( {\frac{{\sigma_{i} \lambda_{1} \lambda_{2} }}{{\sigma_{j} l^{{\lambda_{3} }} }}} \right)^{2} {\text{if}}\quad i \ne j$$for lag \(l\) of the \(j^{th}\) variable in the \(i^{th}\) equation. \(\sigma_{i}\) is the OLS estimate of the standard deviation of the \(i^{th}\) error term. The prior variances of other exogenous variables are \(\lambda_{4}\). We set \(\lambda_{1} = 0.1, \lambda_{2} = 0.25, \lambda_{3} = 2,\, {\text{and}}\, \lambda_{4} = 10,000\). The VAR order is \(p = 2\).

-

Let \(D\) denote data, \(\varTheta\) the set of all of the parameters \(\left\{ {h,\varSigma , \beta , \rho , Y_{t}^{*} , c_{ik} } \right\}\), and \(\varTheta_{\backslash a}\) the subset of \(\varTheta\) excluding \(a\). Assuming a conjugate inverted-Wishart prior for the VAR covariance matrix \({{\Sigma }}\), the conditionals \(f\left( {h|{{\Theta }}_{\backslash h} ,D} \right)\) and \(f\left( {{{\Sigma }}|{{\Theta }}_{{\backslash {{\Sigma }}}} ,D} \right)\) are normal and inverted-Wishart, respectively.

-

The probit regression coefficient \(\beta\) has a normal prior with a mean and variance set to the OLS estimates of the corresponding univariate ordered probit models. Gibbs sampling from the conditional \(f\left( {\beta |{{\Theta }}_{\backslash \beta } ,D} \right)\) is straightforward. We assume the variance of \(\varepsilon_{t}\) has the form \({{\Omega }} = \left[ {\begin{array}{*{20}c} 1 & \rho \\ \rho & 1 \\ \end{array} } \right]\) with \(\left| \rho \right| < 1\) to ensure that the matrix is positive semi-definite. MCMC updates of \(\rho\) are calculated from the simulated outcomes of \({\hat{{\varepsilon }}}_{t}\). For consistency, we normalized the coefficient vector \(\beta\) correspondingly.

-

For each \(i = 1, \ldots ,q\), the \(K_{i}\) thresholds can be drawn recursively from uniform conditionals given the assumed diffuse priors:

$$f\left( {c_{ik} |{{\Theta }}_{{\backslash c_{ik} }} ,D} \right) = U\left( {{ \hbox{max} }\left( {\mathop {\hbox{max} }\limits_{{y_{it} = k - 1}} \left( {y_{it}^{*} } \right), c_{ik - 1} } \right), { \hbox{min} }\left( {\mathop {\hbox{min} }\limits_{{y_{it} = k}} \left( {y_{it}^{*} } \right), c_{ik + 1} } \right)} \right).$$We find that the mixing of the chain improves with shrewdly chosen upper and lower threshold bounds. After numerous trials, we set those at \(- 19 < c_{1k} < 199\) and \(- 9 < c_{2k} < 19,\) which are wide enough to accommodate their respective OLS estimates.

-

Sampling of \(Y_{t}^{*}\) is more complicated. To begin, we partition the elements of the VAR in the following manner (we assume an order \(p = 2\) in the illustration):

$$\tilde{Y}_{t} = \left[ {\begin{array}{*{20}c} {Z_{t} } \\ {Y_{t}^{*} } \\ \end{array} } \right], \, u_{t} = \left[ {\begin{array}{*{20}c} {u_{zt} } \\ {u_{Yt} } \\ \end{array} } \right], \, {{\Sigma }} = \left[ {\begin{array}{*{20}c} {{{\Sigma }}_{ZZ} } & {{{\Sigma }}_{ZY} } \\ {{{\Sigma }}_{YZ} } & {{{\Sigma }}_{YY} } \\ \end{array} } \right],$$with conforming coefficient matrices \(B = \left[ {\begin{array}{*{20}c} {B_{1} } \\ {B_{2} } \\ \end{array} } \right]\) and \(A_{j} = \left[ {\begin{array}{*{20}c} {A_{j1} } & {A_{j2} } \\ {A_{j3} } & {A_{j4} } \\ \end{array} } \right], \forall j.\) \(B_{1}\) and \(B_{2}\) have respective dimensions of \(m \times n\) and \(q \times n\); \(A_{j1}\) is \(m \times m\); \(A_{j4}\) is \(q \times q\); and \(A_{j2}\) and \(A_{j3}\) are \(m \times q\) and \(q \times m\), respectively. Now,

$$Z_{t} = B_{1} W_{t} + \left[ {A_{11} A_{12} } \right]\left[ {\begin{array}{*{20}c} {Z_{t - 1} } \\ {Y_{t - 1}^{*} } \\ \end{array} } \right] + \left[ {A_{21} A_{22} } \right]\left[ {\begin{array}{*{20}c} {Z_{t - 2} } \\ {Y_{t - 2}^{*} } \\ \end{array} } \right] + u_{zt}\quad {\text{and}}$$(A1)$$Y_{t}^{*} = B_{2} W_{t} + \left[ {A_{13} A_{14} } \right]\left[ {\begin{array}{*{20}c} {Z_{t - 1} } \\ {Y_{t - 1}^{*} } \\ \end{array} } \right] + \left[ {A_{23} A_{24} } \right]\left[ {\begin{array}{*{20}c} {Z_{t - 2} } \\ {Y_{t - 2}^{*} } \\ \end{array} } \right] + u_{Yt} .$$(A2) -

Let \(\mu_{Zt} = B_{1} W_{t} + A_{11} Z_{t - 1} + A_{21} Z_{t - 2}\) and have \(\mu_{Yt}\) similarly defined for terms in (A2). Together with Eq. (2) in the main text, we can rewrite the system in state space form:

$$\left[ {\begin{array}{*{20}c} {Y_{t}^{*} } \\ {Y_{t - 1}^{*} } \\ \end{array} } \right] = \left[ {\begin{array}{*{20}c} {A_{14} } & {A_{24} } \\ {I_{q} } & 0 \\ \end{array} } \right]\left[ {\begin{array}{*{20}c} {Y_{t - 1}^{*} } \\ {Y_{t - 2}^{*} } \\ \end{array} } \right] + \left[ {\begin{array}{*{20}c} {\mu_{Yt} } \\ 0 \\ \end{array} } \right] + \left[ {\begin{array}{*{20}c} {u_{Yt} } \\ 0 \\ \end{array} } \right] {\text{and}}$$(A3)$$\left[ {\begin{array}{*{20}c} {Z_{t} - \mu_{Zt} } \\ {\left( {I_{q} \otimes X_{t - 1}^{'} } \right)\beta } \\ \end{array} } \right] = \left[ {\begin{array}{*{20}c} {A_{12} } & {A_{22} } \\ {I_{q} } & 0 \\ \end{array} } \right]\left[ {\begin{array}{*{20}c} {Y_{t - 1}^{*} } \\ {Y_{t - 2}^{*} } \\ \end{array} } \right] + \left[ {\begin{array}{*{20}c} {u_{Zt} } \\ { - \varepsilon_{t - 1} } \\ \end{array} } \right],$$(A4)where the 0 s have conforming dimensions.

-

The Kalman filter (KF) is then applied to (A3) and (A4) in the forward-filtering stage with the outcome being stored and used in the subsequent stage of backward smoothing/sampling. A few remarks are warranted. First, a normal prior is assumed for the initial state vector \(\left[ {Y_{0}^{*'} ,Y_{ - 1}^{*'} } \right]^{'}\). Second, the error terms in the transition equation (A3) and the measurement equation (A4) are not independent, as in standard KF application, because \({{\Sigma }}_{YZ}\) is not a null matrix. So, modifications to the KF recursion (e.g., Ma et al. 2010) have to be used to account for the correlation between measurement and transition noises. Finally, the drawn values of the latent variables have to obey the inequality constraints instigated by the thresholds \(c_{ik}\) and the observed categorical data \(y_{it}\). Simon (2010) suggests a few ways to resolve the issue, and we accomplish this by adding an extra constrained minimization step after getting the updated state estimates from the unconstrained forward filter and backward smoother.

-

In sum, the algorithm iterates through the normal-inverted-Wishart conditionals for the VAR components, a normal conditional for the probit coefficients and the derived computation of the cross-correlation of the latent variables, uniform conditionals for the thresholds, and a constrained KF update and backward sampling of the latent variables. The simulation runs for 8,000 loops with the last 4,000 used to compute the posterior estimates.

Rights and permissions

About this article

Cite this article

Chow, W.W., Fung, M.K. The effects of macroprudential policy on Hong Kong’s housing market: a multivariate ordered probit-augmented vector autoregressive approach. Empir Econ 60, 633–660 (2021). https://doi.org/10.1007/s00181-019-01765-7

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00181-019-01765-7

Keywords

- Housing market

- Macroprudential policy

- Hong Kong

- Bayesian

- Vector autoregression

- Multivariate ordered probit