Abstract

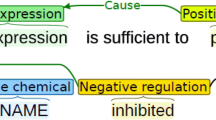

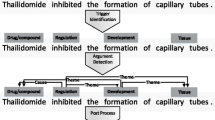

Biomedical event extraction is an important branch of biomedical information extraction. Trigger extraction is the most essential sub-task of event extraction and has attracted wide attention. Existing trigger extraction studies are mostly based on conventional machine learning or neural networks, but they neglect the ambiguity of word representations and the insufficient feature extraction of shallow hidden layers. In this paper, trigger extraction is treated as a sequence labeling problem. We introduce a language model to dynamically compute contextualized word representations and propose a multi-layer residual bidirectional long short-term memory (BiLSTM) architecture. First, we concatenate contextualized word embeddings, pretrained word embeddings and character-level embeddings as the feature representation, which effectively addresses token ambiguity in biomedical corpora. Then, the designed BiLSTM block, with a residual connection and a gated multi-layer perceptron, is applied to extract features iteratively. This architecture improves the model's ability to capture information and avoids exploding or vanishing gradients. Finally, we combine the multi-layer residual BiLSTM with a conditional random field (CRF) layer to obtain more reasonable label sequences. Compared with other state-of-the-art methods, the proposed model achieves competitive performance (F1-score: 80.74%) on the biomedical multi-level event extraction (MLEE) corpus without any manual intervention or feature engineering.
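To illustrate why a CRF layer on top of per-token scores can yield "more reasonable label sequences", the following is a minimal sketch of Viterbi decoding over BIO trigger labels. It is not the authors' implementation. The label set, the emission scores (standing in for BiLSTM outputs) and the transition scores are all made-up values chosen for illustration; in a trained CRF the transition scores would be learned rather than set by hand.

```python
from typing import Dict, List

# Hypothetical BIO label set for a single trigger type (illustrative only).
LABELS = ["O", "B-Regulation", "I-Regulation"]


def viterbi_decode(emissions: List[Dict[str, float]],
                   transitions: Dict[str, Dict[str, float]],
                   labels: List[str]) -> List[str]:
    """Return the highest-scoring label sequence.

    emissions[t][y]   : score of label y at token t (e.g. from a BiLSTM)
    transitions[p][y] : score of moving from label p to label y
    Missing transition entries are treated as 0.0.
    """
    if not emissions:
        return []
    # best[t][y] = best score of any path that ends in label y at token t
    best = [{y: emissions[0][y] for y in labels}]
    back = []  # back[t-1][y] = best previous label for y at token t
    for t in range(1, len(emissions)):
        scores, pointers = {}, {}
        for y in labels:
            prev = max(
                labels,
                key=lambda p: best[-1][p] + transitions.get(p, {}).get(y, 0.0),
            )
            scores[y] = (best[-1][prev]
                         + transitions.get(prev, {}).get(y, 0.0)
                         + emissions[t][y])
            pointers[y] = prev
        best.append(scores)
        back.append(pointers)
    # Follow the back-pointers from the best final label.
    path = [max(labels, key=lambda y: best[-1][y])]
    for pointers in reversed(back):
        path.append(pointers[path[-1]])
    path.reverse()
    return path


# Made-up per-token scores for a three-token sentence (assumption).
emissions = [
    {"O": 2.0, "B-Regulation": 0.5, "I-Regulation": 0.1},
    {"O": 0.4, "B-Regulation": 1.0, "I-Regulation": 1.2},
    {"O": 0.3, "B-Regulation": 0.2, "I-Regulation": 1.5},
]
# Hand-set penalty: an I- label must not directly follow O (assumption).
transitions = {"O": {"I-Regulation": -1e4}}

# Independent per-token argmax, ignoring label dependencies.
greedy = [max(LABELS, key=lambda y: e[y]) for e in emissions]
# Joint decoding that accounts for transition scores.
decoded = viterbi_decode(emissions, transitions, LABELS)

print(greedy)
print(decoded)
```

With these illustrative scores, the per-token argmax places an `I-Regulation` label directly after `O`, which is not a valid BIO sequence, whereas joint decoding with the transition penalty starts the span with `B-Regulation`.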

Acknowledgements

This work was supported by the National Natural Science Foundation of China (No. 61976124).

About this article

Cite this article

Wei, H., Zhou, A., Zhang, Y. et al. Biomedical event trigger extraction based on multi-layer residual BiLSTM and contextualized word representations. Int. J. Mach. Learn. & Cyber. 13, 721–733 (2022). https://doi.org/10.1007/s13042-021-01315-7

DOI: https://doi.org/10.1007/s13042-021-01315-7