Abstract

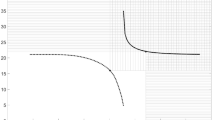

This paper addresses estimation problems for ARMA processes from the viewpoint of statistical information theory. First, Lindley’s definition of the information content of a statistical experiment is extended to cover information on the model structure. It is shown that the total information supplied by the experiment can be decomposed into the information on the model structure and the information on the parameters of the model. For nearly nonstationary and seasonal models, the properties of estimators cannot be evaluated using classical information measures. The resulting problems are illustrated by a special example in which the distributions of the estimators are studied by the Monte Carlo method.
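The kind of Monte Carlo study mentioned in the abstract can be sketched roughly as follows. This is only an illustration under stated assumptions, not the example used in the paper: the AR(1) model, the least-squares estimator, the sample size, the replication count, and all function names are hypothetical choices made here. The sketch compares the simulated distribution of the estimator for a clearly stationary coefficient and for a nearly nonstationary one.

```python
import numpy as np


def simulate_ar1(phi, n, rng):
    """Simulate n values of x[t] = phi * x[t-1] + e[t], e[t] ~ N(0, 1).

    The recursion starts from a zero initial state (an assumption).
    """
    e = rng.standard_normal(n)
    x = np.empty(n)
    prev = 0.0
    for t in range(n):
        prev = phi * prev + e[t]
        x[t] = prev
    return x


def ls_estimate(x):
    """Least-squares estimate of phi: regress x[t] on x[t-1], no intercept."""
    return float(np.dot(x[1:], x[:-1]) / np.dot(x[:-1], x[:-1]))


def monte_carlo(phi, n=100, reps=2000, seed=0):
    """Return reps independent estimates of phi from simulated series."""
    rng = np.random.default_rng(seed)
    return np.array(
        [ls_estimate(simulate_ar1(phi, n, rng)) for _ in range(reps)]
    )


def skewness(a):
    """Sample skewness (third standardized moment)."""
    d = a - a.mean()
    return float(np.mean(d**3) / np.mean(d**2) ** 1.5)


if __name__ == "__main__":
    # Hypothetical coefficients: one well inside the stationary region,
    # one close to the unit root.
    for phi in (0.5, 0.99):
        est = monte_carlo(phi)
        print(
            f"phi={phi}: mean={est.mean():.4f}, "
            f"std={est.std():.4f}, skewness={skewness(est):.3f}"
        )
```

Comparing the mean and skewness of the two simulated samples gives a rough picture of how far the estimator's distribution departs from a symmetric, approximately normal shape as the coefficient approaches the unit root, which is the regime where the abstract states that classical information measures are no longer adequate.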

References

Akaike, H. (1979): A Bayesian extension of the minimum AIC procedure of autoregressive model fitting, Biometrika 66, 237–242.

Arató, M. (1982): Linear Stochastic Systems with Constant Coefficients, Lecture Notes in Cont. 45, Springer Vlg.

Csiszár, I. (1963): Eine informationstheoretische Ungleichung und ihre Anwendung auf den Beweis der Ergodizität von Markoffschen Ketten, Magyar Tud. Akad. Mat. Kutató Int. Közl. 8, 85–108.

DeGroot, M. (1962): Uncertainty, information and sequential experiments. Ann. Math. Statist. 33, 404–419.

Efron, B. (1975): Defining the Curvature of a Statistical Problem. Ann. Stat. 3, 1189–1242.

Kullback, S. (1959): Information Theory and Statistics, John Wiley, N.Y.

Lindley, D. (1956): On a measure of the information provided by an experiment. Ann. Math. Statist. 27, 986–1005.

Mallows, C. (1959): The information in an experiment. J. Roy. Stat. Soc. Ser. B 21, 79–86.

Nemetz, T. (1972): Information type measures and their application in statistics. Lecture Notes, Carleton Univ., Ottawa

Pinsker, M. (1960): Information and Informational Stability of Stochastic Variables and Processes, (in Russian) Akademia Nauk SSSR, Moscow.

Rényi, A. (1961): On measures of entropy and information. In: Proc. 4th Berk. Symp. Statist., Univ. California Press, Vol. I, 547–561.

Rao, M. M. (1984): Probability Theory and Its Application, Academic Press, New York, London.

Veres, S. (1983): Common generalization of information measures, Trans. 9th Prague Conf. on Inf. Th., 263–368.

Vincze, I. (1982): A measure of information and its role in uncertainty relations in mathematical statistics, In: Exchangeability in Prob. Statist, (eds: G. Koch and F. Spizzichino), North Holland P. C.

Copyright information

© 1988 Academia, Publishing House of the Czechoslovak Academy of Sciences, Prague

About this chapter

Cite this chapter

Veres, S. (1988). On the Information of Experiments When Observing Time Series. In: Transactions of the Tenth Prague Conference on Information Theory, Statistical Decision Functions, Random Processes, vol 10A-B. Springer, Dordrecht. https://doi.org/10.1007/978-94-010-9913-4_49

DOI: https://doi.org/10.1007/978-94-010-9913-4_49

Publisher Name: Springer, Dordrecht

Print ISBN: 978-94-010-9915-8

Online ISBN: 978-94-010-9913-4

eBook Packages: Springer Book Archive