Abstract

One approach to constructing copula functions is by multiplication. Because a product of cumulative distribution functions (CDFs) is itself a CDF, a suitable adjustment to the product yields a copula model, as discussed by Liebscher (J Mult Analysis, 2008). Parameterizing models via products of CDFs has advantages both from the copula perspective (e.g., the construction is well-defined for any dimensionality) and from the perspective of general multivariate analysis (e.g., it provides models in which low-dimensional marginal distributions can be easily read off from the parameters). Independently, Huang and Frey (J Mach Learn Res, 2011) showed the connection between certain sparse graphical models and products of CDFs, and presented message-passing (dynamic programming) schemes for computing the likelihood function of such models. Such schemes allow models to be estimated with likelihood-based methods. We discuss and demonstrate MCMC approaches for estimating such models in a Bayesian context, their application in copula modeling, and how message-passing can be strongly simplified. Importantly, our view of message-passing opens up possibilities for scaling up such methods, given that even dynamic programming is not a scalable solution for calculating likelihood functions in many models.
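As a rough illustration of the adjustment mentioned above, the following Python sketch multiplies two bivariate Clayton copulas after raising each argument to an exponent, with the exponents for each variable summing to one across factors. This power-transform form is assumed here as one instance of the product construction; the function names, the parameter values, and the choice of Clayton factors are illustrative and are not taken from the chapter.

```python
import math

def clayton_cdf(u, v, theta):
    """Bivariate Clayton copula CDF, assuming theta > 0."""
    if u <= 0.0 or v <= 0.0:
        return 0.0  # a copula is zero whenever any argument is zero
    return (u ** -theta + v ** -theta - 1.0) ** (-1.0 / theta)

def product_copula(u, v, thetas, a, b):
    """Product of Clayton factors with power-adjusted arguments.

    a[j] and b[j] are the (hypothetical) exponents applied to u and v
    in factor j; each list must sum to 1 so the margins stay uniform.
    """
    assert math.isclose(sum(a), 1.0) and math.isclose(sum(b), 1.0)
    value = 1.0
    for theta, a_j, b_j in zip(thetas, a, b):
        value *= clayton_cdf(u ** a_j, v ** b_j, theta)
    return value

# Compare the adjusted product with the plain product at v = 1.
u = 0.3
adjusted = product_copula(u, 1.0, thetas=[2.0, 0.5], a=[0.4, 0.6], b=[0.7, 0.3])
unadjusted = clayton_cdf(u, 1.0, 2.0) * clayton_cdf(u, 1.0, 0.5)
print(adjusted, unadjusted)
```

Setting one argument to 1 should return the other argument, which is the uniform-margin property a copula requires. The plain product of the same two factors is still a CDF but fails this check, which is why an adjustment is needed.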

Notes

- 1.

Pseudo-marginal approaches [1], which use estimates of the likelihood function, are discussed briefly in the last section.

- 2.

In practice, this could be achieved by fitting marginal models \(\hat{F}_i(\cdot)\) separately, and transforming the data using plug-in estimates as if they were the true marginals. This framework is not uncommon in frequentist estimation of copulas for continuous data, popularized as “inference function for margins”, IFM [11].

- 3.

Note that [10] also presents a way of calculating the gradient of the likelihood function within the message-passing algorithm, which has its own advantages for tasks such as maximum likelihood estimation or gradient-based sampling. We do not cover gradient computation in this chapter.

- 4.

These are known as the global Markov conditions, as described by, e.g., [21].

- 5.

In fact, with one latent variable per factor, the resulting structure is a Markov network in which the edge \(H_{j_1} - H_{j_2}\) appears only if factors \(j_1\) and \(j_2\) have at least one argument in common.

- 6.

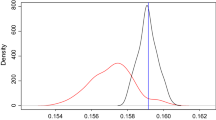

Even though it is still very restricted, since each Clayton copula has a single parameter. A plot of the residuals strongly suggests that a t-copula would be a more appropriate choice, but our goal here is only to illustrate the algorithm.
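The plug-in transformation described in Note 2 can be sketched in a few lines of Python. The sketch assumes Gaussian marginal models purely for illustration (the chapter does not specify the marginal family here), and the function names and toy data are hypothetical. Each column is fitted separately, and each observation is then mapped through its fitted marginal CDF as if that CDF were the true one.

```python
from statistics import NormalDist

def fit_marginals(data):
    """Fit one Gaussian marginal per column (an illustrative choice)."""
    columns = list(zip(*data))
    return [NormalDist.from_samples(col) for col in columns]

def to_pseudo_uniform(data, marginals):
    """Map every entry through its fitted marginal CDF (plug-in step)."""
    return [[m.cdf(x) for m, x in zip(marginals, row)] for row in data]

# Toy data set: three observations of two variables.
data = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
marginals = fit_marginals(data)
pseudo = to_pseudo_uniform(data, marginals)
print(pseudo)
```

The transformed values lie in the unit interval and can then be passed to a copula likelihood, with the marginal parameters held fixed at their plug-in estimates.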

References

Andrieu, C., Roberts, G.: The pseudo-marginal approach for efficient Monte Carlo computations. Ann. Stat. 37, 697–725 (2009)

Bedford, T., Cooke, R.: Vines: a new graphical model for dependent random variables. Ann. Stat. 30, 1031–1068 (2002)

Cowell, R., Dawid, A., Lauritzen, S., Spiegelhalter, D.: Probabilistic Networks and Expert Systems. Springer, Heidelberg (1999)

Drton, M., Richardson, T.: A new algorithm for maximum likelihood estimation in Gaussian models for marginal independence. Proceedings of the 19th Conference on Uncertainty in Artificial Intelligence (2003)

Drton, M., Richardson, T.: Binary models for marginal independence. J. R. Stat. Soc. Ser. B 70, 287–309 (2008)

Elidan, G.: Copulas in machine learning. Lecture notes in statistics (to appear)

Hofert, M.: Sampling Archimedean copulas. Comput. Stat. Data Anal. 52, 5163–5174 (2008)

Huang, J., Frey, B.: Cumulative distribution networks and the derivative-sum-product algorithm. Proceedings of the 24th Conference on Uncertainty in Artificial Intelligence (2008)

Huang, J., Frey, B.: Cumulative distribution networks and the derivative-sum-product algorithm: models and inference for cumulative distribution functions on graphs. J. Mach. Learn. Res. 12, 301–348 (2011)

Huang, J., Jojic, N., Meek, C.: Exact inference and learning for cumulative distribution functions on loopy graphs. Adv. Neural Inf. Process. Syst. 23, 874–882 (2010)

Joe, H.: Multivariate Models and Dependence Concepts. Chapman & Hall, London (1997)

Kirshner, S.: Learning with tree-averaged densities and distributions. In: Platt, J. C., Koller, D., Singer, Y., Roweis, S. T. (eds.) Advances in Neural Information Processing Systems 20, pp. 761–768. Curran Associates, Inc. (2008)

Kschischang, F., Frey, B., Loeliger, H.A.: Factor graphs and the sum-product algorithm. IEEE Trans. Inf. Theory 47, 498–519 (2001)

Liebscher, E.: Construction of asymmetric multivariate copulas. J. Multivar. Anal. 99, 2234–2250 (2008)

Marshall, A., Olkin, I.: Families of multivariate distributions. J. Am. Statist. Assoc. 83, 834–841 (1988)

Minka, T.: Automatic choice of dimensionality for PCA. Adv. Neural Inf. Process. Syst. 13, 598–604 (2000)

Murray, I., Ghahramani, Z., MacKay, D.: MCMC for doubly-intractable distributions. Proceedings of 22nd Conference on Uncertainty in Artificial Intelligence (2006)

Neal, R.: Slice sampling. Ann. Stat. 31, 705–767 (2003)

Nelsen, R.: An Introduction to Copulas. Springer-Verlag, New York (2007)

Pearl, J.: Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference. Morgan Kaufmann, San Francisco (1988)

Richardson, T., Spirtes, P.: Ancestral graph Markov models. Ann. Stat. 30, 962–1030 (2002)

Silva, R.: Latent composite likelihood learning for the structured canonical correlation model. Proceedings of the 28th Conference on Uncertainty in Artificial Intelligence, UAI (2012)

Silva, R.: A MCMC approach for learning the structure of Gaussian acyclic directed mixed graphs. In: Giudici, P., Ingrassia, S., Vichi, M. (eds.) Statistical Models for Data Analysis, pp. 343–352. Springer, Heidelberg (2013)

Silva, R., Ghahramani, Z.: The hidden life of latent variables: Bayesian learning with mixed graph models. J. Mach. Learn. Res. 10, 1187–1238 (2009)

Walker, S.: Posterior sampling when the normalising constant is unknown. Commun. Stat. Simul. Comput. 40, 784–792 (2011)

Acknowledgements

The author would like to thank Robert B. Gramacy for the financial data. This work was supported by EPSRC grant EP/J013293/1.

Copyright information

© 2015 Springer International Publishing Switzerland

About this paper

Cite this paper

Silva, R. (2015). Bayesian Inference in Cumulative Distribution Fields. In: Polpo, A., Louzada, F., Rifo, L., Stern, J., Lauretto, M. (eds) Interdisciplinary Bayesian Statistics. Springer Proceedings in Mathematics & Statistics, vol 118. Springer, Cham. https://doi.org/10.1007/978-3-319-12454-4_7

DOI: https://doi.org/10.1007/978-3-319-12454-4_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-12453-7

Online ISBN: 978-3-319-12454-4

eBook Packages: Mathematics and Statistics (R0)