Abstract

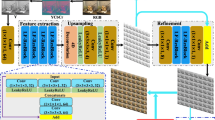

Most existing light field (LF) super-resolution (SR) methods either fail to fully exploit angular information or show an unbalanced performance distribution across views because they use only a subset of the views. To address these issues, we propose MPIN, a novel integration network based on the macro-pixel representation for the LF SR task. To restore the entire LF image simultaneously, we couple the spatial and angular information by rearranging the four-dimensional LF image into a two-dimensional macro-pixel image. Two special convolutions are then deployed to extract spatial and angular information separately. To fully exploit spatial-angular correlations, an integration resblock merges the two kinds of information so that each guides the other, which makes our method angularly coherent. Under the macro-pixel representation, an angular shuffle layer is tailored to increase the spatial resolution of the macro-pixel image while effectively avoiding aliasing. Extensive experiments on both synthetic and real-world LF datasets demonstrate that our method outperforms state-of-the-art methods both qualitatively and quantitatively. Moreover, the proposed method better preserves the inherent epipolar structures of LF images and yields a balanced distribution of performance across views.
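The macro-pixel rearrangement and the two kinds of convolution can be sketched in NumPy. This is a minimal illustration under stated assumptions, not MPIN's implementation: the light field is taken as a single-channel array `lf[u, v, h, w]` holding A × A views of H × W pixels, and the two special convolutions are assumed to follow a common macro-pixel design (an A × A kernel with stride A for angular features, and a 3 × 3 kernel with dilation A for spatial features). The helper names `sai_to_macro_pixel`, `macro_pixel_to_sai`, `angular_conv`, and `spatial_conv` are hypothetical.

```python
import numpy as np

# Hypothetical single-channel helpers; an illustration, not MPIN's actual layers.

def sai_to_macro_pixel(lf):
    """Rearrange lf[u, v, h, w] (A x A views of H x W pixels) into a 2D
    macro-pixel image mpi[h*A + u, w*A + v]: each A x A block (a macro-pixel)
    gathers the same spatial position (h, w) from every view."""
    A1, A2, H, W = lf.shape
    return lf.transpose(2, 0, 3, 1).reshape(H * A1, W * A2)

def macro_pixel_to_sai(mpi, A):
    """Inverse rearrangement: mpi of shape (H*A, W*A) -> lf of shape (A, A, H, W)."""
    H, W = mpi.shape[0] // A, mpi.shape[1] // A
    return mpi.reshape(H, A, W, A).transpose(1, 3, 0, 2)

def angular_conv(mpi, kernel, A):
    """Assumed angular extractor: A x A kernel with stride A, giving one output
    per macro-pixel that mixes all views at a single spatial position."""
    H, W = mpi.shape[0] // A, mpi.shape[1] // A
    blocks = mpi.reshape(H, A, W, A)  # blocks[h, u, w, v]
    return np.einsum("huwv,uv->hw", blocks, kernel)

def spatial_conv(mpi, kernel, A):
    """Assumed spatial extractor: 3 x 3 kernel with dilation A and zero padding A.
    Every tap is a multiple of A away, so it reads a pixel of the same view; the
    result equals a 3 x 3 CNN-style convolution applied to each view separately."""
    padded = np.pad(mpi, A)  # zero padding of width A on every side
    rows, cols = mpi.shape
    out = np.zeros(mpi.shape, dtype=float)
    for i in range(3):
        for j in range(3):
            out += kernel[i, j] * padded[i * A:i * A + rows, j * A:j * A + cols]
    return out

# Usage: a 5 x 5 light field whose views are 8 x 8 pixels.
rng = np.random.default_rng(0)
lf = rng.standard_normal((5, 5, 8, 8))
k_ang = rng.standard_normal((5, 5))
k_spa = rng.standard_normal((3, 3))
mpi = sai_to_macro_pixel(lf)          # shape (40, 40)
ang = angular_conv(mpi, k_ang, 5)     # shape (8, 8)
spa = spatial_conv(mpi, k_spa, 5)     # shape (40, 40)
```

Because the dilation equals the angular resolution A, the spatial branch never mixes views, while the stride-A angular branch never mixes spatial positions; both operate on the same 2D macro-pixel image, so their outputs can later be fused.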

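Abstract (Chinese version)

Most existing light field super-resolution methods cannot fully exploit angular information, or produce unbalanced performance because they use only some of the views. To address these problems, this paper proposes an integration network for light field image super-resolution based on the macro-pixel representation (called MPIN). By rearranging the four-dimensional light field image into a two-dimensional macro-pixel image, the network couples spatial and angular information and thereby restores the entire light field image simultaneously. The network uses two special convolutions to extract spatial and angular information separately. To fully exploit spatial-angular correlations, the designed integration residual block fuses the two kinds of information so that they guide each other, achieving angular coherence. Under the macro-pixel representation, the network increases the spatial resolution of the macro-pixel image through an extended angular shuffle layer, which effectively avoids aliasing. Extensive experiments on synthetic and real-world light field datasets show that the proposed method achieves better performance than existing methods both qualitatively and quantitatively. In addition, the method preserves the inherent epipolar structure of light field images while offering a balanced performance distribution.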
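Why upsampling a macro-pixel image needs care can be seen by comparing a standard pixel shuffle (sub-pixel convolution) with a view-aware rearrangement. The sketch below is an assumption about what an angular shuffle has to achieve, not MPIN's published layer. Given features of shape (C·s², H·A, W·A) on the macro-pixel grid and an upscaling factor s, it places each sub-pixel so that the output is again a valid macro-pixel image, with row index (h·s + i)·A + u. A naive pixel shuffle instead yields row index (h·A + u)·s + i, which interleaves pixels from different views. `pixel_shuffle` reproduces the channel ordering of PyTorch's `PixelShuffle`; `angular_shuffle` is a hypothetical name.

```python
import numpy as np

def pixel_shuffle(x, s):
    """Standard pixel shuffle (PyTorch ordering): (C*s*s, R, Q) -> (C, R*s, Q*s),
    out[c, r*s + i, q*s + j] = x[c*s*s + i*s + j, r, q]."""
    C2, R, Q = x.shape
    C = C2 // (s * s)
    return x.reshape(C, s, s, R, Q).transpose(0, 3, 1, 4, 2).reshape(C, R * s, Q * s)

def angular_shuffle(x, s, A):
    """Hypothetical view-aware shuffle on a macro-pixel grid:
    (C*s*s, H*A, W*A) -> (C, H*s*A, W*s*A) with
    out[c, (h*s + i)*A + u, (w*s + j)*A + v] = x[c*s*s + i*s + j, h*A + u, w*A + v],
    so every upsampled pixel stays in the macro-pixel slot of its own view (u, v)."""
    C2, HA, WA = x.shape
    C, H, W = C2 // (s * s), HA // A, WA // A
    t = x.reshape(C, s, s, H, A, W, A)    # t[c, i, j, h, u, w, v]
    t = t.transpose(0, 3, 1, 4, 5, 2, 6)  # t[c, h, i, u, w, j, v]
    return t.reshape(C, H * s * A, W * s * A)

# Usage: 4 output channels, x2 upscaling, 5 x 5 views of 6 x 6 pixels.
rng = np.random.default_rng(1)
s, A = 2, 5
x = rng.standard_normal((4 * s * s, 6 * A, 6 * A))
hr_mpi = angular_shuffle(x, s, A)  # shape (4, 60, 60)
naive = pixel_shuffle(x, s)        # same shape, but views are interleaved
```

Taking a stride-A slice of `hr_mpi` recovers exactly the per-view pixel shuffle of that view's features, whereas slicing `naive` the same way mixes sub-pixels from neighboring views. This cross-view interleaving is one plausible reading of the aliasing that the angular shuffle layer is said to avoid.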




Additional information

Project supported by the National Natural Science Foundation of China (No. 61773295)

Contributors

Xinya WANG and Jiayi MA designed the research. Xinya WANG and Wenjing GAO processed the data. Xinya WANG drafted the manuscript. Jiayi MA helped organize the manuscript. Jiayi MA and Junjun JIANG revised and finalized the paper.

Compliance with ethics guidelines

Xinya WANG, Jiayi MA, Wenjing GAO, and Junjun JIANG declare that they have no conflict of interest.

Xinya WANG, first author of this invited paper, received her BS degree from the Electronic Information School, Wuhan University, Wuhan, China, in 2018. She is currently pursuing her PhD degree with the Electronic Information School, Wuhan University. Her research interests include neural networks, machine learning, and image processing.

Jiayi MA, corresponding author of this invited paper, received his BS degree in information and computing science and his PhD degree in control science and engineering from Huazhong University of Science and Technology, Wuhan, China, in 2008 and 2014, respectively. He is currently a professor with the Electronic Information School, Wuhan University. He has authored or co-authored more than 200 refereed journal and conference papers. His research interests include computer vision, machine learning, and robotics. Dr. Ma was named a Highly Cited Researcher by Web of Science in 2019 and 2020. He is an area editor of Information Fusion, an associate editor of Neurocomputing, Sensors, and Entropy, and a guest editor of Remote Sensing.


About this article

Cite this article

Wang, X., Ma, J., Gao, W. et al. MPIN: a macro-pixel integration network for light field super-resolution. Front Inform Technol Electron Eng 22, 1299–1310 (2021). https://doi.org/10.1631/FITEE.2000566


DOI: https://doi.org/10.1631/FITEE.2000566