Abstract

A common method for extracting true correlations from large data sets is to look for variables with unusually large coefficients on those principal components with the biggest eigenvalues. Here, we show that even if the top principal components have no unusually large coefficients, large coefficients on lower principal components can still correspond to a valid signal. This contradicts the typical mathematical justification for principal component analysis, which requires that eigenvalue distributions from relevant random matrix ensembles have compact support, so that any eigenvalue above the upper threshold corresponds to signal. The new possibility arises via a mechanism based on a variant of the Feynman-Hellmann theorem, and leads to significant correlations between a signal and principal components when the underlying noise is not both independent and uncorrelated, so the eigenvalue spacing of the noise distribution can be sufficiently large. This mechanism justifies a new way of using principal component analysis and rationalizes recent empirical findings that lower principal components can have information about the signal, even if the largest ones do not.

Received 17 September 2013

DOI: https://doi.org/10.1103/PhysRevX.4.031032

This article is available under the terms of the Creative Commons Attribution 3.0 License. Further distribution of this work must maintain attribution to the author(s) and the published article’s title, journal citation, and DOI.

Published by the American Physical Society

Popular Summary

Technological advances have made it possible to measure an ever-increasing number of variables during an experiment. Determining correlations between different variables yields insights into the system, potentially leading to predictive theoretical models. For example, the multidimensional data sets generated by the Cancer Genome Atlas allow for the detection of correlated genetic perturbations that result in cancer phenotypes.

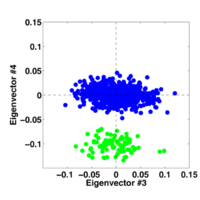

However, when a data set contains many more measured quantities (e.g., the expression levels of different genes in a genome) than measurements, an observed correlation may be spurious. It is therefore necessary to develop a rigorous procedure for determining when an observed correlation is spurious and when it is statistically reliable. A common technique is to look for variables with unusually large coefficients on the principal components with the biggest eigenvalues. We show that even if the top principal components have no unusually large coefficients, large coefficients on lower principal components can still correspond to a valid signal. The mechanism behind this result is based on a variant of the Feynman-Hellmann theorem, developed for level splittings in quantum mechanics.
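For orientation, the textbook first-order perturbation results associated with the Feynman-Hellmann theorem can be written as below. This is the standard form, not the variant developed in the article. The notation is an assumption introduced here: $C_0$ is a symmetric matrix with non-degenerate eigenvalues, $V$ is a symmetric perturbation, and $\lambda_i$, $v_i$ are the eigenvalues and normalized eigenvectors of $C(\epsilon) = C_0 + \epsilon V$.

```latex
\frac{d\lambda_i}{d\epsilon} = v_i^{T} V v_i ,
\qquad
\frac{d v_i}{d\epsilon} = \sum_{j \neq i} \frac{v_j^{T} V v_i}{\lambda_i - \lambda_j}\, v_j .
```

The second expression shows why eigenvalue spacing matters: the amount by which a perturbation mixes eigenvector $v_j$ into $v_i$ is inversely proportional to the gap $\lambda_i - \lambda_j$.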

Our findings suggest that information about the structure of true covariance between variables can be recovered by examining the component distributions of different eigenvectors (not necessarily those with the largest eigenvalues, as is the case in principal component analysis).
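As a rough illustration of what examining the coefficients of every eigenvector might look like in practice, the sketch below computes all principal components of a data matrix and records the largest coefficient on each one. It is a minimal sketch and not the article's procedure: the function names, the matrix sizes, and the use of pure synthetic Gaussian noise are all assumptions made for illustration.

```python
import numpy as np


def principal_components(data):
    """Return eigenvalues (descending) and eigenvectors of the sample covariance.

    `data` has shape (n_samples, n_variables). Column k of the returned
    eigenvector matrix is the k-th principal component.
    """
    centered = data - data.mean(axis=0)
    cov = centered.T @ centered / (data.shape[0] - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigh returns ascending order
    order = np.argsort(eigvals)[::-1]       # reorder to descending
    return eigvals[order], eigvecs[:, order]


def largest_coefficients(eigvecs):
    """For each component, return the index and magnitude of its largest coefficient."""
    idx = np.argmax(np.abs(eigvecs), axis=0)
    mag = np.abs(eigvecs[idx, np.arange(eigvecs.shape[1])])
    return idx, mag


# Synthetic noise only (an assumption for illustration): many more
# variables than measurements, as in the setting described above.
rng = np.random.default_rng(0)
n_samples, n_variables = 50, 200
data = rng.standard_normal((n_samples, n_variables))

eigvals, eigvecs = principal_components(data)

# Only the first n_samples - 1 components carry nonzero variance after centering.
n_keep = n_samples - 1
idx, mag = largest_coefficients(eigvecs[:, :n_keep])

# Rank components by their largest coefficient, not by their eigenvalue.
ranking = np.argsort(mag)[::-1]
for k in ranking[:5]:
    print(f"component {k}: variable {idx[k]} has |coefficient| {mag[k]:.3f}")
```

Ranking by coefficient size instead of by eigenvalue is the design choice that lets lower components surface. Deciding whether a given coefficient is unusually large would still require a reference distribution for the noise, which this sketch does not provide.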