Abstract

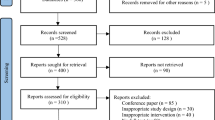

This article proposes a novel framework for recognizing the six universal facial expressions. The framework is based on three sets of features extracted from a face image: entropy, brightness, and local binary patterns (LBP). First, saliency maps are obtained using the state-of-the-art saliency detection algorithm “frequency-tuned salient region detection”. The saliency maps are then used to determine appropriate weights or values for the extracted brightness and entropy features. We performed a visual experiment to validate the saliency detection algorithm against the human visual system. Eye movements of 15 subjects were recorded with an eye-tracker in free-viewing conditions while they watched a collection of 54 videos selected from the Cohn-Kanade facial expression database. The results of the visual experiment showed that the obtained saliency maps are consistent with the human fixation data. Finally, the performance of the proposed framework is demonstrated through satisfactory classification results on the Cohn-Kanade database, the FG-NET FEED database, and the Dartmouth database of children’s faces.
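As a rough illustration of the feature families named in the abstract, the following Python sketch computes a saliency map, a Shannon entropy value, and a basic LBP code on tiny grayscale arrays. It is not the authors' implementation. The abstract does not say how the saliency-derived weights are applied, so `saliency_weighted` is a hypothetical mean-saliency scaling. `ft_saliency_gray` is a simplified grayscale stand-in for frequency-tuned salient region detection, which in its published form compares Lab color vectors. All function names are assumptions introduced for this example.

```python
import math
from collections import Counter


def ft_saliency_gray(img):
    """Simplified grayscale variant of frequency-tuned saliency (assumption):
    |global mean - smoothed pixel|, rescaled so the peak is 1."""
    h, w = len(img), len(img[0])
    mean = sum(map(sum, img)) / (h * w)
    kernel = [[1, 2, 1], [2, 4, 2], [1, 2, 1]]  # 3x3 binomial blur, weights sum to 16
    sal = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)  # clamp indices at the borders
                    xx = min(max(x + dx, 0), w - 1)
                    acc += kernel[dy + 1][dx + 1] * img[yy][xx]
            sal[y][x] = abs(mean - acc / 16)
    peak = max(map(max, sal)) or 1.0  # avoid dividing by zero on flat images
    return [[s / peak for s in row] for row in sal]


def shannon_entropy(pixels):
    """Shannon entropy in bits of a flat sequence of gray levels."""
    counts = Counter(pixels)
    n = len(pixels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def lbp_code(patch):
    """Basic 8-neighbour LBP code for the centre pixel of a 3x3 patch."""
    center = patch[1][1]
    # Neighbours in clockwise order, starting at the top-left corner.
    neighbours = [patch[0][0], patch[0][1], patch[0][2], patch[1][2],
                  patch[2][2], patch[2][1], patch[2][0], patch[1][0]]
    return sum(1 << i for i, v in enumerate(neighbours) if v >= center)


def saliency_weighted(feature, region_saliency):
    """Hypothetical weighting: scale a scalar feature by the region's mean saliency."""
    return feature * sum(region_saliency) / len(region_saliency)


if __name__ == "__main__":
    face = [[0] * 5 for _ in range(5)]
    face[2][2] = 160  # a single bright pixel stands in for a salient facial region
    sal = ft_saliency_gray(face)
    flat_pixels = [p for row in face for p in row]
    flat_saliency = [s for row in sal for s in row]
    print(saliency_weighted(shannon_entropy(flat_pixels), flat_saliency))
```

In a real pipeline the features would be computed per facial region (for example around the eyes and mouth) and concatenated before classification. The single-scalar weighting here only shows where a saliency map could enter the computation.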

Author information

Rizwan Ahmed Khan is an associate professor at Barrett Hodgson University, Pakistan. He received his PhD degree in computer science from Université Claude Bernard Lyon 1, France in 2013. He has worked as a postdoctoral research associate at Laboratoire d’InfoRmatique en Image et Systèmes d’information (LIRIS), Lyon, France. His research interests include computer vision, image processing, pattern recognition, and human perception.

Alexandre Meyer received his PhD degree in computer science from Université Grenoble 1, France in 2001. From 2002 to 2003, he was a postdoctoral fellow at University College London, UK. Since 2004 he has been an associate professor at Université Claude Bernard Lyon 1, France and a member of the LIRIS research lab. His current research concerns computer animation and computer vision of characters.

Hubert Konik received his PhD degree in computer science from Université Jean Monnet, France in 1995. He is an associate professor at Télécom Saint-Etienne, France and a member of the Image Science & Computer Vision team of Laboratoire Hubert Curien, Saint-Etienne, France. His research interests focus on image processing and analysis, particularly content-aware image processing for new services and usages.

Saida Bouakaz received her PhD degree from Joseph Fourier University in Grenoble, France. She is a full professor at the Department of Computer Science, Université Claude Bernard Lyon 1, France. Her research interests include computer vision and graphics, including motion capture and analysis, gesture recognition, and facial animation.

Cite this article

Khan, R.A., Meyer, A., Konik, H. et al. Saliency-based framework for facial expression recognition. Front. Comput. Sci. 13, 183–198 (2019). https://doi.org/10.1007/s11704-017-6114-9

DOI: https://doi.org/10.1007/s11704-017-6114-9