Abstract

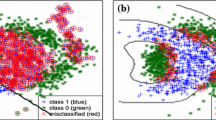

Sparsity-driven classification techniques have attracted much attention in recent years because they provide more compact representations and clearer interpretation. Two of the most popular classification approaches are support vector machines (SVMs) and kernel logistic regression (KLR), each with its own advantages. The sparsification of SVM has been well studied, and many sparse versions of the 2-norm SVM, such as the 1-norm SVM (1-SVM), have been developed. The sparsification of KLR, however, has received less attention. Existing sparse versions of KLR are mainly based on L1-norm and L2-norm penalties, and their solutions are not as sparse as they could be. A very recent study on L1/2 regularization theory in compressive sensing shows that L1/2 sparse modeling can yield sparser solutions than the 1-norm and 2-norm penalties, and that the model can be solved efficiently by a simple iterative thresholding procedure. The objective function treated in L1/2 regularization theory is, however, of squared form, so its gradient is linear in its variables (such an objective function is called a linear gradient function). In this paper, by extending the L1/2 regularization framework from linear gradient functions to the logistic function, we propose a novel sparse version of KLR, the 1/2 quasi-norm kernel logistic regression (1/2-KLR). This version combines the advantages of KLR and L1/2 regularization and defines an efficient implementation scheme for sparse KLR. We suggest a fast iterative thresholding algorithm for 1/2-KLR and prove its convergence. A series of simulations demonstrates that 1/2-KLR can often obtain sparser solutions than existing sparsity-driven versions of KLR at the same or a better accuracy level. The same conclusion holds in comparison with sparse SVMs (1-SVM and 2-SVM). We also show a distinctive advantage of 1/2-KLR: the regularization parameter in the algorithm can be set adaptively whenever the sparsity (correspondingly, the number of support vectors) is given, which suggests a methodology for comparing the sparsity-promotion capability of different sparsity-driven classifiers. To illustrate the benefits of 1/2-KLR, we give two applications in semi-supervised learning, showing that 1/2-KLR can be successfully applied to classification tasks in which only a small portion of the data is labeled.
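The iterative thresholding idea summarized in the abstract can be illustrated with a minimal sketch. It is not the paper's algorithm: it assumes the standard half-thresholding operator from the L1/2 regularization literature, a plain gradient step on the mean logistic loss, labels in {0, 1}, no bias term, and a step size taken from the Lipschitz bound of the gradient. The names `half_threshold`, `sparse_klr_half`, `lam_eff`, and the toy RBF data are hypothetical, and no convergence guarantee is claimed for this simplified loop.

```python
import numpy as np

def half_threshold(x, lam):
    """Elementwise minimizer of (t - x)^2 + lam * sqrt(|t|)."""
    x = np.asarray(x, dtype=float)
    out = np.zeros_like(x)
    # Entries at or below this threshold are set exactly to zero.
    thresh = (54.0 ** (1.0 / 3.0) / 4.0) * lam ** (2.0 / 3.0)
    keep = np.abs(x) > thresh
    xk = x[keep]
    phi = np.arccos((lam / 8.0) * (np.abs(xk) / 3.0) ** -1.5)
    out[keep] = (2.0 / 3.0) * xk * (1.0 + np.cos(2.0 * np.pi / 3.0 - 2.0 * phi / 3.0))
    return out

def sparse_klr_half(K, y, k, n_iter=200):
    """Sketch: gradient step on the logistic loss, then half thresholding.

    K: (n, n) kernel Gram matrix, y: labels in {0, 1},
    k: desired maximum number of nonzero coefficients (k < n).
    """
    n = K.shape[0]
    # Step size 1/L, where L = ||K||_2^2 / (4 n) bounds the gradient's Lipschitz constant.
    mu = 4.0 * n / np.linalg.norm(K, 2) ** 2
    alpha = np.zeros(n)
    for _ in range(n_iter):
        z = np.clip(K @ alpha, -30.0, 30.0)       # avoid overflow in exp
        p = 1.0 / (1.0 + np.exp(-z))              # predicted probabilities
        b = alpha - mu * (K.T @ (p - y)) / n      # gradient step
        mags = np.sort(np.abs(b))[::-1]
        # Adaptive parameter: put the threshold at the (k+1)-th largest magnitude.
        lam_eff = (np.sqrt(96.0) / 9.0) * mags[k] ** 1.5
        alpha = half_threshold(b, lam_eff)
        # Guard against floating-point rounding at the threshold boundary.
        alpha[np.abs(b) <= mags[k]] = 0.0
    return alpha

# Toy usage on synthetic data with an RBF kernel (illustrative only).
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-1.0, 1.0, (20, 2)), rng.normal(1.0, 1.0, (20, 2))])
y = np.concatenate([np.zeros(20), np.ones(20)])
sq_dists = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
K = np.exp(-0.5 * sq_dists)
alpha = sparse_klr_half(K, y, k=5)
print("nonzero coefficients:", np.count_nonzero(alpha))
```

Choosing `lam_eff` from the (k+1)-th largest magnitude mirrors the abstract's remark that the regularization parameter can be set adaptively once the desired sparsity is given: at most `k` coefficients survive each thresholding step, so the number of retained training points is controlled directly rather than through a grid search.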

Cite this article

Xu, C., Peng, Z. & Jing, W. Sparse kernel logistic regression based on L1/2 regularization. Sci. China Inf. Sci. 56, 1–16 (2013). https://doi.org/10.1007/s11432-012-4679-3

DOI: https://doi.org/10.1007/s11432-012-4679-3