Abstract

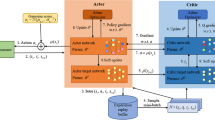

The continuing growth of mobile traffic is daunting, but deploying indoor small cells offers exciting opportunities to boost network capacity, extend cell coverage, and ultimately raise customers' quality of experience (QoE). Unfortunately, in current wireless systems, traffic varies greatly between the uplink and downlink directions, which makes efficient resource allocation challenging. By using dynamic time-division duplexing (TDD), network operators can adapt flexibly to such variations. However, dynamic TDD networks suffer from cross-link interference, which severely suppresses uplink transmission. In this work, we propose a decentralized, QoE-aware, reinforcement-learning-based approach to dynamic TDD reconfiguration. The objective is to maximize the utility function of the users' QoE in an indoor small cell network. To this end, each base station is empowered to select the configuration that avoids cross-link interference while keeping as many users as possible at a satisfactory QoE. In each episode, after collecting local reports of the users' QoE state and traffic load, every base station dynamically chooses the best configuration according to its learning model. The learning process repeats until convergence. We implemented a simulator to evaluate the performance of the proposed algorithms. The results show that the proposed strategy achieves the best QoE utility among the compared approaches, especially for uplink transmission. The study demonstrates the great potential of reinforcement learning algorithms for attaining higher QoE in small cell networks.
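The per-episode loop described in the abstract (each base station collects local traffic and QoE reports, picks a configuration from its learned model, and repeats until convergence) can be illustrated with a minimal independent Q-learning sketch. This is not the authors' implementation: the state (a three-level UL/DL load label), the reward (a toy QoE proxy that treats uplink subframes colliding with a neighbour's downlink as lost), the topology, and all parameter values (`alpha`, `gamma`, `epsilon`, episode count) are hypothetical. Only the seven LTE TDD UL-DL subframe patterns follow 3GPP TS 36.211, and the special subframe S is counted as downlink purely for simplicity.

```python
import random

# LTE TDD uplink-downlink configurations 0-6 (3GPP TS 36.211, frame structure
# type 2): one 10-subframe frame, D = downlink, S = special, U = uplink.
TDD_CONFIGS = [
    "DSUUUDSUUU", "DSUUDDSUUD", "DSUDDDSUDD", "DSUUUDDDDD",
    "DSUUDDDDDD", "DSUDDDDDDD", "DSUUUDSUUD",
]


def qoe_utility(cfg, neighbour_cfgs, ul_demand, dl_demand):
    """Hypothetical QoE proxy in [0, 1]: mean fraction of demand served per direction.

    An uplink subframe counts as served only if every neighbour is also in
    uplink; otherwise a neighbour's downlink causes cross-link interference and
    the subframe is treated as lost. S subframes are counted as downlink.
    """
    own = TDD_CONFIGS[cfg]
    dl_served = sum(1 for s in own if s != "U")
    ul_served = sum(
        1 for i, s in enumerate(own)
        if s == "U" and all(TDD_CONFIGS[n][i] == "U" for n in neighbour_cfgs)
    )
    ul_score = min(1.0, ul_served / ul_demand) if ul_demand else 1.0
    dl_score = min(1.0, dl_served / dl_demand) if dl_demand else 1.0
    return 0.5 * (ul_score + dl_score)


def load_state(ul_demand, dl_demand):
    """Hypothetical discretised traffic report: 0 = UL-heavy, 1 = balanced, 2 = DL-heavy."""
    if ul_demand > dl_demand:
        return 0
    return 2 if dl_demand > ul_demand else 1


class BaseStationAgent:
    """Independent tabular Q-learner; actions are TDD configuration indices."""

    def __init__(self, n_states=3, alpha=0.1, gamma=0.9, epsilon=0.1, rng=None):
        self.q = [[0.0] * len(TDD_CONFIGS) for _ in range(n_states)]
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = rng if rng is not None else random.Random()

    def choose(self, state):
        """Epsilon-greedy action selection."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(TDD_CONFIGS))  # explore
        row = self.q[state]
        return row.index(max(row))  # exploit; ties go to the lowest index

    def update(self, state, action, reward, next_state):
        """Standard Q-learning update toward reward + gamma * max_a' Q(s', a')."""
        target = reward + self.gamma * max(self.q[next_state])
        self.q[state][action] += self.alpha * (target - self.q[state][action])


def train(loads, neighbours, episodes=2000, seed=0):
    """Run decentralised learning; return each BS's greedy configuration."""
    rng = random.Random(seed)
    agents = [BaseStationAgent(rng=rng) for _ in loads]
    for _ in range(episodes):
        # 1) each BS reads its local traffic report and picks a configuration
        states = [load_state(ul, dl) for ul, dl in loads]
        actions = [ag.choose(s) for ag, s in zip(agents, states)]
        # 2) each BS gets a local QoE reward (which depends on its neighbours'
        #    choices) and updates only its own Q-table
        for b, ag in enumerate(agents):
            nbr = [actions[n] for n in neighbours[b]]
            reward = qoe_utility(actions[b], nbr, *loads[b])
            ag.update(states[b], actions[b], reward, states[b])  # static traffic
    greedy = []
    for ag, (ul, dl) in zip(agents, loads):
        row = ag.q[load_state(ul, dl)]
        greedy.append(row.index(max(row)))
    return greedy


if __name__ == "__main__":
    # Hypothetical 3-cell line topology; loads are (UL, DL) subframes needed per frame.
    loads = [(2, 8), (3, 7), (6, 4)]
    neighbours = [[1], [0, 2], [1]]
    configs = train(loads, neighbours)
    for b, c in enumerate(configs):
        nbr = [configs[n] for n in neighbours[b]]
        print(b, c, TDD_CONFIGS[c], round(qoe_utility(c, nbr, *loads[b]), 3))
```

Because each base station learns independently, the other agents are part of its environment and its reward is non-stationary, so convergence is not guaranteed in general; this sketch simply runs a fixed episode budget instead of testing for convergence.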

Acknowledgements

The authors are grateful for the financial support from Gemtek Technology, Hyper-Dense LTE-A Het-Net Local Evolution System Research and Develop program, and MOST grant 103-2221-E-002-086-MY3. In addition, the authors would like to thank Dr. Jen-Wei Chang and Mr. Yu-Chieh Chen for providing technical assistance.

About this article

Cite this article

Tsai, CH., Lin, KH., Wei, HY. et al. QoE-aware Q-learning based approach to dynamic TDD uplink-downlink reconfiguration in indoor small cell networks. Wireless Netw 25, 3467–3479 (2019). https://doi.org/10.1007/s11276-019-01941-8