Abstract

Stephen John has recently suggested that the ethics of communication yields important insights as to how values should be incorporated into science. In particular, he examines cases of “wishful speaking” in which a scientific actor (e.g. a tobacco company) endorses unreliable conclusions in order to obtain the consequences of the listener treating the results as credible. He concludes that what is wrong in these cases is that the speaker surreptitiously relies on values not accepted by the hearer, violating what he terms “the value-apt ideal”. I expand on this view by integrating into it Miranda Fricker’s account of testimonial injustice. I find testimonial injustice can arise in a manner unanticipated by Fricker, specifically that a credibility excess given to a speaker typically reduces the ability of others in the epistemic community to transmit knowledge, a phenomenon I term “collateral epistemic injustice”. I argue that this possibility entails that receivers have an ethical obligation to assign credibility judiciously. A further consequence of this view is that the value-apt ideal is insufficient. Because audience members have an obligation to assign credibility judiciously, a speaker cannot merely rely on shared values; they must also be open about the extent to which their conclusions depend on those values. Thus, both wishful speaking and obscuring the value-dependent nature of a conclusion make one an unreliable source of information. Accordingly, other community members have an ethical obligation to ignore a speaker that frequently engages in either.

Notes

See Gillam and Bernstein (1987) for a detailed account of early DES studies.

These hearings were convened to examine the pricing practices of pharmaceutical companies and led to the 1962 Kefauver-Harris amendment establishing the modern FDA.

As noted by an anonymous reviewer, an uncritical detailer might be unreliable because they were immersed in positive information from their company. In such a case, because they believed their products were superior, they would be guilty of wishful thinking or simply being naïve, rather than of wishful speaking. While nothing logically prohibits an honest detailer, anthropological studies of detailing find deception to be “a key part of pharmaceutical sales [which] varies in degree from rep to rep and from manager to manager” (Oldani 2004, p. 347; see also Lexchin (1984, pp. 124–127)). Detailer-turned-anthropologist, Oldani (2002) compares detailers to “tricksters” and provides a firsthand account of the methods they use to engage in intentionally misleading speech. Elsewhere, he explores the use of the pharmaceutical “gift economy” to influence doctors’ prescription choices (Oldani 2004). Likewise, Adrianne Fugh-Berman has described how psychological profiles are created to improve detailers’ ability to manipulate physicians’ judgment (Fugh-Berman and Ahari 2007), as well as explored why doctors believe they are impervious to such ploys (Sah and Fugh-Berman 2013). For an argument that these non-informational aspects of the interaction between detailers and physicians cannot be excluded from a pragmatically-grounded medical epistemology see Holman (unpublished manuscript).

It is unclear just how robust this result is, however (Rosenstock et al. 2017).

This is a tad too strong: Fricker admits that in certain cases an epistemic harm can be done to the agent ‘suffering’ from a credibility excess. This is due to the agent failing to cultivate good epistemic virtues over time. Since the harm in question is cumulative, Fricker takes this to be a marginal case of testimonial injustice [cf. Davis (2016) for a separate argument that epistemic harm befalls the recipient of credibility excess]. As will become clear, I think credibility excess may routinely lead to epistemic injustice as a matter of course rather than only in the rare scenario already conceded by Fricker.

Fricker contrasts “systematic” cases where the identity prejudice tracks the person in every aspect of life to cases she calls “incidental”. Because “incidental” carries the implication that the consequences are minor and the causes are random, I suggest that “bounded” better captures her characterization of this kind of epistemic injustice. Note that Fricker herself describes this type of epistemic injustice as “a genuine testimonial injustice… [which] may be grievous… [but] none the less its impact on the subject’s life is, let us assume, highly localized” (Fricker 2007, p. 27). Similar to the identities I discuss, a number of recent works in the philosophy of medicine have applied Fricker’s account to patient groups interacting with the medical establishment (e.g., Merrick 2017; Buete 2019). Since the identity prejudices discussed in these works are localized to specific interactions with the medical profession, they are, properly speaking, cases of bounded/incidental epistemic injustice.

The hearer might however lose faith in herself as a subject of knowledge and thus suffer from reflexive epistemic injustice as, for example, is depicted in the classic film Gaslight.

Fricker (2007, p. 27) is clear she considers writing a form of testimony.

Note that there is nothing unique in a person suffering an injustice through an act they are not immediately a part of. If I vow to keep your secret, I break my vow to you when I tell your secret to a third party. Likewise, note that this is not (merely) a case where the testimonial injustice damages the epistemic system; it is a case where a person has been denied their status as a subject of knowledge.

For example, a specific attack on Harry Dowling read as follows: “I question whether the honorable emeritus professor of medicine has seen a patient in person from or in his ivory tower for some time. However, in my 22 years of vigorous practice…” (Quoted in Podolsky 2015, p. 124).

Medina (2011) also argues that injustices can arise via a credibility excess. While Medina agrees with Fricker that credibility is not a finite good and that it should be distributed in proportion to the “epistemic credentials shown by the speaker” (p. 18), Medina argues there is a tight link between credibility excess and credibility deficit which could result in an “undeserved disparity in the epistemic reputability of social groups” (p. 21). I agree with this general conclusion that credibility excess can result in vast disparities between various groups, but do not think, as Medina does, that a credibility excess need result in a credibility deficit. For example, in cases of synchronic collateral injustice, a mere credibility excess can undercut other speakers by making it difficult, if not impossible, for them to transmit knowledge (irrespective of whether they suffer from a credibility deficit).

See McGarity and Wagner (2008) for numerous cases in which effective regulation is blocked because industry sponsors “counterscience.” In many cases, the purpose of such work is not to attack the credibility of existing experts, but to create new industry-friendly experts and as a result to “manufacture uncertainty.” In such cases the original experts may retain their public credibility, but fail to convey knowledge.

I will charitably assume that Dr. Irving believed, on the basis of the Smiths’ testimony, that DES was safe and effective.

Candidly, I have doubts about the value of such epistemic state of nature stories; I offer the argument here to show that if this is the kind of justification one plumps for, it is available for my account of collateral epistemic injustice as well.

Here I am following Douglas’ (2018) notion of openness about the value-dependency of important choices rather than requiring the more “crystalline” aim of full and total transparency. This point might be further developed in relation to Wilholt’s (2009) discussion of conventional standards, but doing so here would take me beyond the scope of the paper.

They also test cases where the scientist is explicitly making a policy recommendation (where values are more likely to be seen as appropriate) and find even fewer conditions where openness results in a loss of trust.

For example, if you are a scientist who values public health over economic growth, John’s conditions for withholding the disclosure of your values are met only when (1) you are speaking to a delimited audience with the same values; (2) you are relating information that shows a product is dangerous; and (3) the epistemic consequences of transmitting this knowledge outweigh the harm caused by (a) your disrespect for the audience’s autonomy; (b) impairing the audience’s ability to assimilate information on the topic in the future; and (c) the epistemic injustice you will do to your dissenting colleagues.

Given that most cases where a scientist loses credibility are cases where their conclusions are in line with their presumed biases, it is not entirely clear that given the sparse information provided to subjects in Elliott’s experiment, the loss of trust is unjustified. It would be interesting to see what happens when subjects have both numerous signs the scientist is trustworthy and a disclosure of values.

References

Anderson, E. (2012). Epistemic justice as a virtue of social institutions. Social Epistemology, 26(2), 163–173.

Apfel, R. J., Fisher, S. M., & Fisher, S. (1986). To do no harm: DES and the dilemmas of modern medicine. New Haven: Yale University Press.

Biddle, J., & Winsberg, E. (2010). Value judgements and the estimation of uncertainty in climate modeling. In P. D. Magnus & J. Busch (Eds.), New waves in philosophy of science (pp. 172–197). New York: Palgrave-Macmillan.

Buete, A. (2019). Psychiatric classification and epistemic injustice. Philosophy of Science. https://doi.org/10.1086/705443.

Camp, E. (2013). Slurring perspectives. Analytic Philosophy, 54, 330–349.

Caplow, T. (1952). Market attitudes: A research report from the medical field. Harvard Business Review, 30(6), 105–112.

CDC. (2012). Centers for Disease Control and Prevention. DES Update: Consumers. http://www.cdc.gov/des/consumers/index.html.

ChoGlueck, C. (2019). Broadening the scope of our understanding of mechanisms: Lessons from the history of the morning-after pill. Synthese. https://doi.org/10.1007/s11229-019-02201-0.

Coady, C. A. (1992). Testimony: A philosophical study. Oxford: Clarendon Press.

CPC. (1939). Stilbestrol: Preliminary report of the council. Journal of the American Medical Association, 113, 2312.

Craig, E. (1991). Knowledge and the state of nature: An essay in conceptual synthesis. Oxford: Clarendon Press.

Davis, E. (2016). Typecasts, tokens, and spokespersons: A case for credibility excess as testimonial injustice. Hypatia, 31(3), 485–501.

Davis, M. E., & Fugo, N. W. (1950). Steroids in the treatment of early pregnancy complications. Journal of the American Medical Association, 142(11), 778–785.

Dieckmann, W. J., Davis, M. E., Rynkiewicz, L. M., & Pottinger, R. E. (1953). Does the administration of diethylstilbestrol during pregnancy have therapeutic value? American Journal of Obstetrics and Gynecology, 66(5), 1062.

Douglas, H. (2014). Pure science and the problem of progress. Studies in History and Philosophy of Science Part A, 46, 55–63.

Douglas, H. (2018). From tapestry to loom: Broadening the perspective on values in science. Philosophy, Theory, and Practice in Biology. https://doi.org/10.3998/ptpbio.16039257.0010.008.

Dutton, D. B. (1992). Worse than the disease: Pitfalls of medical progress. Cambridge: Cambridge University Press.

Elliott, K. C. (2017). A tapestry of values: An introduction to values in science. Oxford: Oxford University Press.

Elliott, K. C., McCright, A. M., Allen, S., & Dietz, T. (2017). Values in environmental research: Citizens’ views of scientists who acknowledge values. PLoS ONE, 12(10), e0186049.

Ferguson, J. H. (1953). Effect of stilbestrol on pregnancy compared to the effect of a placebo. American Journal of Obstetrics and Gynecology, 65(3), 592–601.

Fricker, M. (1998). Rational authority and social power: Towards a truly social epistemology. Proceedings of the Aristotelian Society, 98, 159–177.

Fricker, M. (2007). Epistemic injustice: Power and the ethics of knowing. Oxford: Oxford University Press.

Fricker, M. (2010). Replies to Alcoff, Goldberg, and Hookway on epistemic injustice. Episteme, 7, 164–178.

Fugh-Berman, A., & Ahari, S. (2007). Following the script: How drug reps make friends and influence doctors. PLoS Medicine, 4(4), e150.

Gaffin, Ben, and Associates, American Medical Association, & Pharmaceutical Manufacturers Association. (1958). Attitudes of US physicians toward the American Pharmaceutical Industry. The Author.

Gillam, R., & Bernstein, B. J. (1987). Doing harm: The DES tragedy and modern American medicine. The Public Historian, 9, 57–82.

Greene, J. A. (2004). Attention to ‘details’: Etiquette and the pharmaceutical salesman in postwar America. Social Studies of Science, 34(2), 271–292.

Greenhill, J. (1949). The 1949 yearbook of obstetrics and gynecology. Chicago: Yearbook Publishers.

Hearings Before Senate Subcommittee on Antitrust and Monopoly. (1961). Washington, DC: US Government Printing.

Heinonen, O. P. (1973). Diethylstilbestrol in pregnancy. Frequency of exposure and usage patterns. Cancer, 31(3), 573–577.

Holman, B., & Bruner, J. (2015). The problem of intransigently biased agents. Philosophy of Science, 82, 956–968.

Holman, B. (unpublished). Medical knowledge as what doctors know.

Hume, D. (1739/2000). An enquiry concerning human understanding. Oxford: Oxford University Press.

IARC. International Agency for Research on Cancer. (2012). Diethylstilbestrol. A review of human carcinogens. IARC Monograph Evaluation Carcinogenic Risks for Humans, 100, 175–218.

JAMA (1949). JAMA, 139, 130.

John, S. (2015). Inductive risk and the contexts of communication. Synthese, 192(1), 79–96.

John, S. (2018a). Epistemic trust and the ethics of science communication: Against transparency, openness, sincerity and honesty. Social Epistemology, 32(2), 75–87.

John, S. (2018b). Science, truth and dictatorship: Wishful thinking or wishful speaking? Studies in History and Philosophy of Science. https://doi.org/10.1016/j.shpsa.2018.12.003.

John, S. (2018c). Scientific deceit. Synthese. https://doi.org/10.1007/s11229-018-02017-4.

Kaufman, R. H. (1982). Structural changes of the genital tract associated with in utero exposure to diethylstilbestrol. Obstetrics and Gynecology Annual, 11, 187.

Kaufman, R. H., Adam, E., Hatch, E. E., Noller, K., Herbst, A. L., Palmer, J. R., et al. (2000). Continued follow-up of pregnancy outcomes in diethylstilbestrol-exposed offspring. Obstetrics and Gynecology, 96(4), 483–489.

Langston, N. (2010). Toxic bodies. New Haven: Yale University Press.

Lasagna, L. (1955). Statistics, sophistication, and sacred cows. Clinical Research Proceedings, 3, 185.

Lexchin, J. (1984). The real pushers: A critical analysis of the Canadian drug industry. Vancouver: New Star Books.

McGarity, T., & Wagner, W. (2008). Bending science: How special interests corrupt public health research. Cambridge, MA: Harvard University Press.

Medina, J. (2011). The relevance of credibility excess in a proportional view of epistemic injustice: Differential epistemic authority and the social imaginary. Social Epistemology, 25(1), 15–35.

Merrick, T. (2017). From ‘Intersex’ to ‘DSD’: A case of epistemic injustice. Synthese. https://doi.org/10.1007/s11229-017-1327-x.

Meyers, R. (1983). DES: The bitter pill. New York: Seaview/Putnam.

Oldani, M. (2002). Tales from the “Script”: An insider/outside view of pharmaceutical sales practice. Kroeber Anthropological Society Papers, 92–93, 147–176.

Oldani, M. (2004). Thick prescriptions: Toward an interpretation of pharmaceutical sales practices. Medical Anthropology Quarterly, 18, 325–356.

Podolsky, S. H. (2015). The antibiotic era: Reform, resistance, and the pursuit of a rational therapeutics. Baltimore: JHU Press.

Reed, C. E., & Fenton, S. E. (2013). Exposure to diethylstilbestrol during sensitive life stages: A legacy of heritable health effects. Birth Defects Research Part C: Embryo Today: Reviews, 99(2), 134–146.

Reid, D. D. (1955). The use of hormones in the management of pregnancy in diabetics. Lancet, 2, 833–836.

Robinson, D., & Shettles, L. B. (1952). The use of diethylstilbestrol in threatened abortion. American Journal of Obstetrics and Gynecology, 63(6), 1330–1333.

Rosenstock, S., O’Connor, C., & Bruner, J. (2017). In epistemic networks, is less really more? Philosophy of Science, 84, 234–252.

Sah, S., & Fugh-Berman, A. (2013). Physicians under the influence: Social psychology and industry marketing strategies. The Journal of Law, Medicine & Ethics, 41(3), 665–672.

Smith, O. (1948). Diethylstilbestrol in the prevention and treatment of complications of pregnancy. American Journal of Obstetrics and Gynecology, 56(5), 821–834.

Smith, O. W., & Smith, G. V. S. (1949). The influence of diethylstilbestrol on the progress and outcome of pregnancy as based on a comparison of treated with untreated primigravidas. American Journal of Obstetrics and Gynecology, 58(5), 994–1009.

Swyer, G. I. M., & Law, R. G. (1954). An evaluation of the ante-natal use of stilboestrol-preliminary report. Journal of Endocrinology, 10, vi–vii.

Troisi, R., Hatch, E. E., Titus-Ernstoff, L., Hyer, M., Palmer, J. R., Robboy, S. J., et al. (2007). Cancer risk in women prenatally exposed to diethylstilbestrol. International Journal of Cancer, 121(2), 356–360.

Warner, J. H. (2014). The therapeutic perspective: Medical practice, knowledge, and identity in America, 1820–1885. Princeton NJ: Princeton University Press.

Wilholt, T. (2009). Bias and values in scientific research. Studies in History and Philosophy of Science Part A, 40(1), 92–101.

Zollman, K. (2007). The communication structure of epistemic communities. Philosophy of Science, 74, 574–587.

Acknowledgements

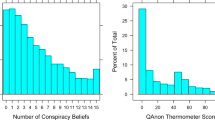

I would like to thank Stephen John, Christopher ChoGlueck, and Kevin Elliott, who all responded to questions and corrected (at least some of) the misunderstandings I had of their work. The form and substance of the paper were helped tremendously by insightful feedback from Elizabeth Seger, audiences at the “Understanding Epistemic Injustice” conference, The University of Texas (El Paso), The University of Ghent, and two blind reviewers. Finally, I owe my biggest debt of gratitude to Justin Bruner. Not only was he kind enough to supply the graphs and to run the simulations reported in Sect. 3, but the core of this paper also emerges from our early work on intransigently biased agents. Sections 3–5 were revised and sharpened over multiple drafts and innumerable long conversations with him. Justin spotted numerous gaps in my thinking, and though we do not agree on all points contained herein, the paper has been greatly improved by our ongoing disagreement.

Cite this article

Holman, B. An ethical obligation to ignore the unreliable. Synthese 198 (Suppl 23), 5825–5848 (2021). https://doi.org/10.1007/s11229-019-02483-4
