Abstract

A dynamical system with a plastic self-organising velocity vector field was introduced in Janson and Marsden (Sci Rep 7:17007, 2017) as a mathematical prototype of new explainable intelligent systems. Although inspired by brain plasticity, it does not model or explain any specific brain mechanisms or processes, but instead expresses a hypothesised principle possibly implemented by the brain. The hypothesis states that, by means of its plastic architecture, the brain creates a plastic self-organising velocity vector field, which embodies self-organising rules governing neural activity and, through that, the behaviour of the whole body. The model is represented by a two-tier dynamical system, in which the observable behaviour obeys a velocity field, which is itself controlled by another dynamical system. Contrary to standard brain models, in the new model the sensory input affects the velocity field directly, rather than indirectly via neural activity. However, this model was postulated without sufficient explication or theoretical proof of its mathematical consistency. Here we provide a more rigorous mathematical formulation of this problem, make several simplifying assumptions about the form of the model and of the applied stimulus, and perform its mathematical analysis. Namely, we explore the existence, uniqueness, continuity and smoothness both of the plastic velocity vector field controlling the observable behaviour of the system and of the behaviour itself. We also analyse the existence of pullback attractors and of forward limit sets in such a non-autonomous system of a special form. Our results verify the consistency of the problem and pave the way to constructing more models with specific pre-defined cognitive functions.
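
As a rough illustration of the two-tier structure described in the abstract, the system can be sketched in the following generic form. The symbols are assumptions introduced here for illustration and are not taken from this page: \(x(t)\) denotes the observable state, \(a(x,t)\) the velocity field, \(\eta(t)\) the sensory stimulus, and \(c\) an unspecified rule governing how the field evolves.

```latex
% Generic sketch only; x, a, c and \eta are hypothetical names.
% First tier: the observable behaviour follows the velocity field.
% Second tier: the field itself evolves, driven directly by the stimulus.
\begin{align}
  \frac{\mathrm{d}x}{\mathrm{d}t} &= a(x,t), \\
  \frac{\partial a}{\partial t}(x,t) &= c\bigl(a(x,t),\, x,\, \eta(t),\, t\bigr).
\end{align}
```

In this sketch the stimulus \(\eta(t)\) enters only the second equation, so it shapes the velocity field directly rather than acting on the state. The first equation is then non-autonomous, which is why pullback attractors and forward limit sets are the relevant notions of long-term behaviour.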

Notes

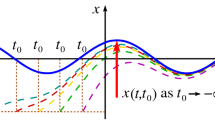

He required the system to be defined in the whole past and the convergence to be uniform in \(t_0 \in {\mathbb {R}}\).

References

Abbott, L.F., Nelson, S.B.: Synaptic plasticity: taming the beast. Nat. Neurosci. 3, 1178–1183 (2000)

Ardiansyah, S., Majid, M.A., Zain, J.M.: Knowledge of extraction from trained neural network by using decision tree. In: 2nd International Conference on Science in Information Technology (ICSITech), pp. 220–225 (2016)

Arnold, L.: Random Dynamical Systems. Springer, Berlin (1998)

Barron, A.B., Hebets, E.A., Cleland, T.A., Fitzpatrick, C.L., Hauber, M.E., Stevens, J.R.: Embracing multiple definitions of learning. Trends Neurosci. 38(7), 405–407 (2015)

Bi, G., Poo, M.: Synaptic modification by correlated activity: Hebb’s postulate revisited. Annu. Rev. Neurosci. 24, 139–166 (2001)

Bleicher, A.: Demystifying the black box that is AI. Sci. Am. 9, 8 (2017)

Boz, O.: Extracting decision trees from trained neural networks. In: Proceedings of the Eighth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, ACM, New York, pp. 456–461 (2002)

Brinker, T.J., Hekler, A., Enk, A.H., Klode, J., Hauschild, A., Berking, C., Schilling, B., Haferkamp, S., Schadendorf, D., Holland-Letz, T., Utikal, J.S., von Kalle, C., et al.: Deep learning outperformed 136 of 157 dermatologists in a head-to-head dermoscopic melanoma image classification task. Eur. J. Cancer 113, 47–54 (2019)

Crauel, H., Kloeden, P.E.: Nonautonomous and random attractors. Jahresbericht der Deutschen Mathematiker-Vereinigung 117, 173–206 (2015)

Crutchfield, J.P.: Dynamical embodiments of computation in cognitive processes. Behav. Brain Sci. 21, 635 (1998)

Cui, H., Langa, J.A.: Uniform attractors for non-autonomous random dynamical systems. J. Differ. Equ. 263, 1225–1268 (2017)

Cui, H., Kloeden, P.E.: Invariant forward random attractors of non-autonomous random dynamical systems. J. Differ. Equ. 265, 6166–6186 (2018)

Djurfeldt, M., Johansson, C., Ekeberg, Ö., Rehn, M., Lundqvist, M., Lansner, A.: Massively parallel simulation of brain-scale neuronal network models. Computational biology and neurocomputing, School of Computer Science and Communication. Royal Institute of Technology, Stockholm. TRITA-NA-P0513 (2005)

Dong, D.W., Hopfield, J.J.: Dynamic properties of neural networks with adapting synapses. Netw. Comput. Neural Syst. 3, 267–283 (1992)

Esteva, A., Kuprel, B., Novoa, R.A., Ko, J., Swetter, S.M., Blau, H.M., Thrun, S.: Dermatologist-level classification of skin cancer with deep neural networks. Nature 542, 115 (2017)

van Gelder, T.: The dynamical hypothesis in cognitive science. Behav. Brain Sci. 21, 615–665 (1998)

Hammarlund, P., Ekeberg, Ö.: Large neural network simulations on multiple hardware platforms. J. Comput. Neurosci. 5, 443–459 (1998)

Han, X., Kloeden, P.E.: Random Ordinary Differential Equations and their Numerical Solution. Springer, Singapore (2017)

Janson, N.B., Marsden, C.J.: Dynamical system with plastic self-organized velocity field as an alternative conceptual model of a cognitive system. Sci. Rep. 7, 17007 (2017)

Janson, N.B., Marsden, C.J.: Supplementary Note to: Dynamical system with plastic self-organized velocity field as an alternative conceptual model of a cognitive system. Sci. Rep. 7, 17007 (2017)

Kloeden, P.E.: Pullback attractors of nonautonomous semidynamical systems. Stoch. Dyn. 3, 101–112 (2003)

Kloeden, P.E., Rasmussen, M.: Nonautonomous Dynamical Systems. American Mathematical Society, Providence (2011)

Kloeden, P.E.: Asymptotic invariance and the discretisation of nonautonomous forward attracting sets. J. Comput. Dyn. 3, 179–189 (2016)

Marr, B.: 5 Important Artificial Intelligence predictions (for 2019) everyone should read. Forbes, 3 December (2018). https://www.forbes.com/sites/bernardmarr/2018/12/03/5-important-artificial-intelligence-predictions-for-2019-everyone-should-read/#6b4e590c319f

Moravčík, M., Schmid, M., Burch, N., Lisý, V., Morrill, D., Bard, N., Davis, T., Waugh, K., Johanson, M., Bowling, M.: DeepStack: expert-level artificial intelligence in heads-up no-limit poker. Science 356, 508–513 (2017)

McGough, M.: How bad is Sacramento’s air, exactly? Google results appear at odds with reality, some say. Sacramento Bee, 7 August (2018). https://www.sacbee.com/news/california/fires/article216227775.html

Olcese, U., Oude Lohuis, M.N., Pennartz, C.M.A.: Sensory processing across conscious and nonconscious brain states: from single neurons to distributed networks for inferential representation. Front. Syst. Neurosci. 12, 49 (2018)

Peng, T.: AI hasn’t found its Isaac Newton: Gary Marcus on deep learning defects and “Frenemy” Yann LeCun. Synced AI Technology and Industry Review, 15 February (2019). https://syncedreview.com/2019/02/15/ai-hasnt-found-its-isaac-newton-gary-marcus-on-deep-learning-defects-frenemy-yann-lecun/

Romeiras, F., Grebogi, C., Ott, E.: Multifractal properties of snap-shot attractors of random maps. Phys. Rev. A 41, 784–799 (1990)

Rudin, C.: Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nat. Mach. Intell. 1, 206–215 (2019)

Silver, D., Schrittwieser, J., Simonyan, K., Antonoglou, I., Huang, A., Guez, A., Hubert, T., Baker, L.R., Lai, M., Bolton, A., Chen, Y., Lillicrap, T.P., Hui, F.F., Sifre, L., Driessche, G.V., Graepel, T., Hassabis, D.: Mastering the game of Go without human knowledge. Nature 550, 354–359 (2017)

Stickgold, R.: Sleep-dependent memory consolidation. Nature 437, 1272–1278 (2005)

Taigman, Y., Yang, M., Ranzato, M., Wolf, L.: DeepFace: closing the gap to human-level performance in face verification. In: IEEE Conference on Computer Vision and Pattern Recognition, pp. 1701–1708 (2014)

Varshney, K.R., Alemzadeh, H.: On the safety of machine learning: cyber-physical systems, decision sciences, and data products. Big Data 5, 246–255 (2017)

Velluti, R.: Interactions between sleep and sensory physiology. J. Sleep Res. 6, 61–77 (1997)

Vincent, J.: The state of AI in 2019. The Verge, 28 January (2019)

Vincent, J.: AI systems should be accountable, explainable, and unbiased, says EU. The Verge, 8 April (2019)

Vishik, M.I.: Asymptotic Behaviour of Solutions of Evolutionary Equations. Cambridge University Press, Cambridge (1992)

Wexler, R.: When a computer program keeps you in jail: How computers are harming criminal justice. New York Times, 13 June (2017)

Walter, W.: Ordinary Differential Equations. Springer, New York (1998)

Zhu, J., Liapis, A., Risi, S., Bidarra, R., Youngblood, G.M.: Explainable AI for designers: a human-centered perspective on mixed-initiative co-creation. In: IEEE Conference on Computational Intelligence and Games (CIG), pp. 1–8 (2018)

Acknowledgements

The visit of PEK to Loughborough University was supported by the London Mathematical Society.

Additional information

Dedicated to the memory of Russell Johnson.

About this article

Cite this article

Janson, N.B., Kloeden, P.E. Mathematical Consistency and Long-Term Behaviour of a Dynamical System with a Self-Organising Vector Field. J Dyn Diff Equat 34, 63–78 (2022). https://doi.org/10.1007/s10884-020-09834-7

DOI: https://doi.org/10.1007/s10884-020-09834-7

Keywords

- Learning

- Non-autonomous dynamical system

- Velocity vector field

- Plasticity

- Self-organisation

- Pullback attractor

- Explainable artificial intelligence