Abstract

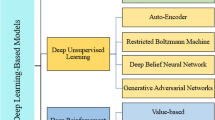

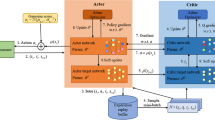

Sustainable cities are envisioned as taking economic and industrial steps toward reducing pollution. Many real-world applications, such as autonomous vehicles, transportation, traffic signals, and industrial automation, can now be trained using deep reinforcement learning (DRL) techniques. These applications are designed to take advantage of DRL to improve monitoring and measurement in the industrial internet of things for automation identification systems. The complexity of these environments makes multi-agent systems more appropriate than a single agent. However, in non-stationary environments, multi-agent systems can suffer from an increased number of observations, which limits the scalability of the algorithms. This study proposes a model to tackle the scalability problem of DRL algorithms in the transportation domain. The proposed model uses a partition-based approach that keeps each agent within its own working area, which reduces both the complexity of the learning environment and the number of observations for each agent. The model uses generative adversarial imitation learning (GAIL) and behavior cloning, combined with a proximal policy optimization (PPO) algorithm, to train multiple agents in a dynamic environment. We compare PPO, soft actor-critic (SAC), and our model in terms of reward gathering. Our simulation results show that our model outperforms SAC and PPO in cumulative reward gathering and dramatically improves the training of multiple agents.
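To make the partition idea concrete, the following is a minimal sketch of how restricting each agent to a working area can shrink its observation set. The abstract does not specify the partitioning scheme, so the equal vertical strips, the `Partition` class, and the helper names `make_partitions` and `local_observation` are all hypothetical choices made for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    """Axis-aligned rectangular working area (an assumed representation)."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def contains(self, point):
        # Half-open bounds so a point on a shared edge belongs to one strip only.
        x, y = point
        return self.x_min <= x < self.x_max and self.y_min <= y < self.y_max


def make_partitions(width, height, n_agents):
    """Split a width x height area into n_agents equal vertical strips (assumption)."""
    strip = width / n_agents
    return [Partition(i * strip, (i + 1) * strip, 0.0, height)
            for i in range(n_agents)]


def local_observation(partition, entities):
    """Keep only the entities that lie inside the agent's own partition."""
    return [e for e in entities if partition.contains(e)]


# Three agents share a 12 x 4 area containing four entities.
partitions = make_partitions(width=12.0, height=4.0, n_agents=3)
entities = [(1.0, 1.0), (5.0, 2.0), (6.5, 3.0), (11.0, 0.5)]
observations = [local_observation(p, entities) for p in partitions]

for i, obs in enumerate(observations):
    print(f"agent {i}: {len(obs)} of {len(entities)} entities observed")
```

In this toy setting each agent processes only the entities in its own strip instead of all four, which is the kind of reduction in per-agent observations the abstract describes. The policy training itself (GAIL, behavior cloning, PPO) is not shown.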

Cite this article

Raza, A., Shah, M.A., Khattak, H.A. et al. Collaborative multi-agents in dynamic industrial internet of things using deep reinforcement learning. Environ Dev Sustain 24, 9481–9499 (2022). https://doi.org/10.1007/s10668-021-01836-9

DOI: https://doi.org/10.1007/s10668-021-01836-9