Abstract

Objectives

To investigate the efficacy of an artificial intelligence (AI) system for the identification of false negatives in chest radiographs that were interpreted as normal by radiologists.

Methods

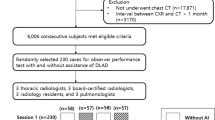

We consecutively collected chest radiographs that were read as normal during 1 month (March 2020) at a single institution. A commercial AI system was retrospectively applied to these radiographs. Radiographs with abnormal AI results were then re-interpreted by the radiologist who had initially read them (“AI as the advisor” scenario). The reference standard for the true presence of relevant abnormalities was defined by majority vote of three thoracic radiologists. The efficacy of the AI system was evaluated by detection yield (proportion of true-positive identifications among all examinations) and false-referral rate (FRR, proportion of false-positive identifications among all examinations). Decision curve analyses were performed to evaluate the net benefit of applying the AI system.
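The two outcome measures defined above share the same denominator (all examinations initially read as normal), which a short sketch can make concrete. The class and function names below are hypothetical, and the counts are made-up illustrative values, not data from the study. The PPV helper uses the standard definition (true positives among all flagged examinations).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferralCounts:
    """Counts for one reading scenario (hypothetical container, not from the paper)."""
    true_positives: int   # flagged exams with a relevant abnormality per the reference standard
    false_positives: int  # flagged exams without a relevant abnormality
    total_exams: int      # all exams initially read as normal


def detection_yield(counts: ReferralCounts) -> float:
    # True-positive identifications divided by ALL examinations.
    return counts.true_positives / counts.total_exams


def false_referral_rate(counts: ReferralCounts) -> float:
    # False-positive identifications divided by ALL examinations.
    return counts.false_positives / counts.total_exams


def positive_predictive_value(counts: ReferralCounts) -> float:
    # Standard PPV: true positives divided by all flagged examinations.
    flagged = counts.true_positives + counts.false_positives
    return counts.true_positives / flagged


# Illustrative counts only (assumed, not taken from the study).
example = ReferralCounts(true_positives=20, false_positives=100, total_exams=1000)
print(f"detection yield: {detection_yield(example):.1%}")
print(f"false-referral rate: {false_referral_rate(example):.1%}")
print(f"PPV: {positive_predictive_value(example):.1%}")
```

Because both rates are expressed per examination rather than per flagged case, they can be compared directly when weighing detections against unnecessary referrals.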

Results

A total of 4208 radiographs from 3778 patients (M:F = 1542:2236; median age, 56 years) were included. The AI system identified initially overlooked relevant abnormalities with a detection yield of 2.4% and an FRR of 14.0%. In the “AI as the advisor” scenario, radiologists detected initially overlooked relevant abnormalities with a detection yield of 1.2% and an FRR of 0.97%. In the decision curve analysis, the “AI as the advisor” scenario exhibited a positive net benefit when the cost-to-benefit ratio was below 1:0.8.
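The relationship between the reported rates and the decision curve result can be sketched with the standard net-benefit formula from decision curve analysis, net benefit = detection yield − FRR × w, where w is the harm of one false referral relative to the benefit of one true detection. This is an assumption about how the analysis was parameterized, not a description of the authors' code, and the function names are hypothetical. Reading the ratio 1:0.8 as cost divided by benefit (1/0.8 = 1.25), the break-even point computed from the rounded rates in the abstract comes out close to that value.

```python
def net_benefit(detection_yield: float, false_referral_rate: float,
                cost_to_benefit: float) -> float:
    """Standard decision-curve net benefit, in units of true detections per exam.

    cost_to_benefit is the harm of one false referral divided by the benefit
    of one true detection (assumed parameterization).
    """
    return detection_yield - false_referral_rate * cost_to_benefit


def break_even_ratio(detection_yield: float, false_referral_rate: float) -> float:
    # Net benefit is zero when cost_to_benefit equals yield / FRR.
    return detection_yield / false_referral_rate


# Rounded rates reported in the abstract for the "AI as the advisor" scenario.
advisor_yield = 0.012
advisor_frr = 0.0097

advisor_break_even = break_even_ratio(advisor_yield, advisor_frr)
print(f"break-even cost/benefit: {advisor_break_even:.2f}")
print(f"equivalent ratio: 1:{1 / advisor_break_even:.2f}")

# Net benefit is positive below the break-even ratio and negative above it.
print(net_benefit(advisor_yield, advisor_frr, cost_to_benefit=1.0) > 0)
print(net_benefit(advisor_yield, advisor_frr, cost_to_benefit=1.5) > 0)
```

Since the inputs are rounded percentages, small differences from the published threshold are expected.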

Conclusion

An AI system could identify relevant abnormalities overlooked by radiologists and, by providing feedback, could enable them to correct their false-negative interpretations.

Key Points

• In consecutive chest radiographs with normal interpretations, an artificial intelligence system could identify relevant abnormalities that were initially overlooked by radiologists.

• By providing feedback when overlooked abnormalities were present, the artificial intelligence system could enable radiologists to correct their initial false-negative interpretations.

Abbreviations

- AI: Artificial intelligence

- FRR: False-referral rate

- PPV: Positive predictive value

Acknowledgements

The present study was supported by the Seoul National University Hospital Research Fund (grant number: 03-2021-0270).

Funding

This study received funding from the Seoul National University Hospital Research Fund (grant number: 03-2021-0270).

Ethics declarations

Guarantor

The scientific guarantor of this publication is Chang Min Park.

Conflict of interest

Eui Jin Hwang received a research grant from Lunit Inc. outside the present study. Hyungjin Kim received a research grant from Lunit Inc. outside the present study and holds stock in MedicalIP. Soon Ho Yoon works at MedicalIP as an unpaid chief medical officer and holds stock options in MedicalIP. Ju Gang Nam received a research grant from Vuno outside the present study. Chang Min Park received a research grant from Lunit Inc. outside the present study and holds stock in Promedius and stock options in Lunit Inc. and Coreline Soft.

Statistics and biometry

No complex statistical methods were necessary for this paper.

Informed consent

Written informed consent was waived by the Institutional Review Board.

Ethical approval

Institutional Review Board approval was obtained.

Methodology

• retrospective

• diagnostic or prognostic study

• performed at one institution

About this article

Cite this article

Hwang, E.J., Park, J., Hong, W. et al. Artificial intelligence system for identification of false-negative interpretations in chest radiographs. Eur Radiol 32, 4468–4478 (2022). https://doi.org/10.1007/s00330-022-08593-x

DOI: https://doi.org/10.1007/s00330-022-08593-x