Abstract

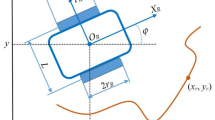

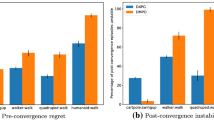

Adaptability to the environment is crucial for mobile robots because their circumstances, including the robot's own body, may change. A robot with a large number of degrees of freedom has the potential to adapt to such changes, but designing a good controller for such a robot is difficult. We previously proposed a reinforcement learning (RL) method called the CPG actor-critic method and applied it, in computer simulations, to the automatic acquisition of vermicular locomotion by a looper-like robot. In this study, we developed a looper-like robot and applied our RL method to its control. Experimental results demonstrate fast acquisition of a vermicular forward motion, supporting the applicability of our method to real robots.

About this article

Cite this article

Makino, K., Nakamura, Y., Shibata, T. et al. Adaptive control of a looper-like robot based on the CPG-actor-critic method. Artif Life Robotics 12, 129–132 (2008). https://doi.org/10.1007/s10015-007-0453-9
