Abstract

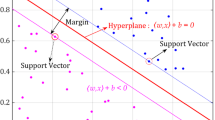

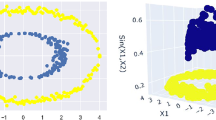

In this paper we propose several methods for building a kernel matrix for classification with Support Vector Machines (SVMs) by fusing Gaussian kernels. The proposed techniques have been successfully evaluated on artificial and real data sets. The new methods outperform the best individual kernel under consideration, and they can be used as an alternative to solving the parameter selection problem in Gaussian kernel methods.
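The abstract does not spell out the fusion rules, so what follows is only a minimal sketch of the general idea under our own assumptions, not the authors' method. Gaussian kernel matrices computed with several widths σ are combined by a fixed convex combination (a plain average by default). A non-negative weighted sum of positive semidefinite matrices is itself positive semidefinite, so the fused matrix remains a valid kernel and the classifier no longer depends on committing to a single σ. The helper names `gaussian_kernel` and `fused_gaussian_kernel`, the width grid, and the weighting scheme are hypothetical.

```python
import numpy as np


def gaussian_kernel(X, Y, sigma):
    """Gaussian (RBF) kernel matrix K[i, j] = exp(-||x_i - y_j||^2 / (2 sigma^2))."""
    # Squared Euclidean distances via ||x||^2 + ||y||^2 - 2 x.y
    sq = (
        np.sum(X**2, axis=1)[:, None]
        + np.sum(Y**2, axis=1)[None, :]
        - 2.0 * X @ Y.T
    )
    sq = np.maximum(sq, 0.0)  # clip tiny negative values caused by round-off
    return np.exp(-sq / (2.0 * sigma**2))


def fused_gaussian_kernel(X, Y, sigmas, weights=None):
    """Hypothetical helper: convex combination of Gaussian kernels with different widths."""
    sigmas = list(sigmas)
    if weights is None:
        # Assumption: equal weights, i.e. a plain average of the kernel matrices
        weights = np.full(len(sigmas), 1.0 / len(sigmas))
    weights = np.asarray(weights, dtype=float)
    if weights.shape != (len(sigmas),):
        raise ValueError("need exactly one weight per sigma")
    # Non-negative weights summing to 1 keep the result positive semidefinite
    if np.any(weights < 0) or not np.isclose(weights.sum(), 1.0):
        raise ValueError("weights must be non-negative and sum to 1")
    return sum(w * gaussian_kernel(X, Y, s) for w, s in zip(weights, sigmas))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(20, 3))
    K = fused_gaussian_kernel(X, X, sigmas=[0.5, 1.0, 2.0])
    print(K.shape)  # (20, 20)
    # Smallest eigenvalue should be non-negative up to round-off
    print(np.linalg.eigvalsh(K).min())
```

The fused matrix could then be handed to any SVM solver that accepts a precomputed kernel, for example scikit-learn's `SVC(kernel="precomputed")`. Averaging is the simplest possible choice; the fusion schemes actually evaluated in the paper may differ.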

Copyright information

© 2006 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Moguerza, J.M., Muñoz, A., de Diego, I.M. (2006). Fusion of Gaussian Kernels Within Support Vector Classification. In: Martínez-Trinidad, J.F., Carrasco Ochoa, J.A., Kittler, J. (eds) Progress in Pattern Recognition, Image Analysis and Applications. CIARP 2006. Lecture Notes in Computer Science, vol 4225. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11892755_98

DOI: https://doi.org/10.1007/11892755_98

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-46556-0

Online ISBN: 978-3-540-46557-7

eBook Packages: Computer Science, Computer Science (R0)